GNU PSPP

This manual is for GNU PSPP version 2.0.1, software for statistical analysis.

Copyright © 1997, 1998, 2004, 2005, 2009, 2012, 2013, 2014, 2016, 2019, 2020, 2023 Free Software Foundation, Inc.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.3 or any later version published by the Free Software Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts. A copy of the license is included in the section entitled "GNU Free Documentation License".

Table of Contents

- 1 Introduction

- 2 Your rights and obligations

- 3 Invoking

pspp - 4 Invoking

psppire - 5 Using PSPP

- 6 The PSPP language

- 6.1 Tokens

- 6.2 Forming commands of tokens

- 6.3 Syntax Variants

- 6.4 Types of Commands

- 6.5 Order of Commands

- 6.6 Handling missing observations

- 6.7 Datasets

- 6.8 Files Used by PSPP

- 6.9 File Handles

- 6.10 Backus-Naur Form

- 7 Mathematical Expressions

- 7.1 Boolean Values

- 7.2 Missing Values in Expressions

- 7.3 Grouping Operators

- 7.4 Arithmetic Operators

- 7.5 Logical Operators

- 7.6 Relational Operators

- 7.7 Functions

- 7.7.1 Mathematical Functions

- 7.7.2 Miscellaneous Mathematical Functions

- 7.7.3 Trigonometric Functions

- 7.7.4 Missing-Value Functions

- 7.7.5 Set-Membership Functions

- 7.7.6 Statistical Functions

- 7.7.7 String Functions

- 7.7.8 Time & Date Functions

- 7.7.9 Miscellaneous Functions

- 7.7.10 Statistical Distribution Functions

- 7.8 Operator Precedence

- 8 Data Input and Output

- 9 System and Portable File I/O

- 10 Combining Data Files

- 11 Manipulating Variables

- 11.1 DISPLAY

- 11.2 NUMERIC

- 11.3 STRING

- 11.4 RENAME VARIABLES

- 11.5 SORT VARIABLES

- 11.6 DELETE VARIABLES

- 11.7 VARIABLE LABELS

- 11.8 PRINT FORMATS

- 11.9 WRITE FORMATS

- 11.10 FORMATS

- 11.11 VALUE LABELS

- 11.12 ADD VALUE LABELS

- 11.13 MISSING VALUES

- 11.14 VARIABLE ATTRIBUTE

- 11.15 VARIABLE ALIGNMENT

- 11.16 VARIABLE WIDTH

- 11.17 VARIABLE LEVEL

- 11.18 VARIABLE ROLE

- 11.19 VECTOR

- 11.20 MRSETS

- 11.21 LEAVE

- 12 Data transformations

- 13 Selecting data for analysis

- 14 Conditional and Looping Constructs

- 14.1 BREAK

- 14.2 DEFINE

- 14.3 DO IF

- 14.4 DO REPEAT

- 14.5 LOOP

- 15 Statistics

- 15.1 DESCRIPTIVES

- 15.2 FREQUENCIES

- 15.3 EXAMINE

- 15.4 GRAPH

- 15.5 CORRELATIONS

- 15.6 CROSSTABS

- 15.7 CTABLES

- 15.7.1 Basics

- 15.7.2 Data Summarization

- 15.7.3 Statistics Positions and Labels

- 15.7.4 Category Label Positions

- 15.7.5 Per-Variable Category Options

- 15.7.6 Titles

- 15.7.7 Table Formatting

- 15.7.8 Display of Variable Labels

- 15.7.9 Missing Value Treatment

- 15.7.10 Computed Categories

- 15.7.11 Effective Weight

- 15.7.12 Hiding Small Counts

- 15.8 FACTOR

- 15.9 GLM

- 15.10 LOGISTIC REGRESSION

- 15.11 MEANS

- 15.12 NPAR TESTS

- 15.12.1 Binomial test

- 15.12.2 Chi-square Test

- 15.12.3 Cochran Q Test

- 15.12.4 Friedman Test

- 15.12.5 Kendall’s W Test

- 15.12.6 Kolmogorov-Smirnov Test

- 15.12.7 Kruskal-Wallis Test

- 15.12.8 Mann-Whitney U Test

- 15.12.9 McNemar Test

- 15.12.10 Median Test

- 15.12.11 Runs Test

- 15.12.12 Sign Test

- 15.12.13 Wilcoxon Matched Pairs Signed Ranks Test

- 15.13 T-TEST

- 15.14 ONEWAY

- 15.15 QUICK CLUSTER

- 15.16 RANK

- 15.17 REGRESSION

- 15.18 RELIABILITY

- 15.19 ROC

- 16 Matrices

- 16.1 Matrix Files

- 16.2 MATRIX DATA

- 16.3 MCONVERT

- 16.4 MATRIX

- 16.4.1 Matrix Expressions

- 16.4.2 Matrix Functions

- 16.4.2.1 Elementwise Functions

- 16.4.2.2 Logical Functions

- 16.4.2.3 Matrix Construction Functions

- 16.4.2.4 Minimum, Maximum, and Sum Functions

- 16.4.2.5 Matrix Property Functions

- 16.4.2.6 Matrix Rank Ordering Functions

- 16.4.2.7 Matrix Algebra Functions

- 16.4.2.8 Matrix Statistical Distribution Functions

- 16.4.2.9 EOF Function

- 16.4.3 The

COMPUTECommand - 16.4.4 The

CALLCommand - 16.4.5 The

PRINTCommand - 16.4.6 The

DO IFCommand - 16.4.7 The

LOOPandBREAKCommands - 16.4.8 The

READandWRITECommands - 16.4.9 The

GETCommand - 16.4.10 The

SAVECommand - 16.4.11 The

MGETCommand - 16.4.12 The

MSAVECommand - 16.4.13 The

DISPLAYCommand - 16.4.14 The

RELEASECommand

- 17 Utilities

- 17.1 ADD DOCUMENT

- 17.2 CACHE

- 17.3 CD

- 17.4 COMMENT

- 17.5 DOCUMENT

- 17.6 DISPLAY DOCUMENTS

- 17.7 DISPLAY FILE LABEL

- 17.8 DROP DOCUMENTS

- 17.9 ECHO

- 17.10 ERASE

- 17.11 EXECUTE

- 17.12 FILE LABEL

- 17.13 FINISH

- 17.14 HOST

- 17.15 INCLUDE

- 17.16 INSERT

- 17.17 OUTPUT

- 17.18 PERMISSIONS

- 17.19 PRESERVE and RESTORE

- 17.20 SET

- 17.21 SHOW

- 17.22 SUBTITLE

- 17.23 TITLE

- 18 Invoking

pspp-convert - 19 Invoking

pspp-output - 20 Invoking

pspp-dump-sav - 21 Not Implemented

- 22 Bugs

- 23 Function Index

- 24 Command Index

- 25 Concept Index

- Appendix A GNU Free Documentation License

1 Introduction

PSPP is a tool for statistical analysis of sampled data. It reads the data, analyzes the data according to commands provided, and writes the results to a listing file, to the standard output or to a window of the graphical display.

The language accepted by PSPP is similar to those accepted by SPSS statistical products. The details of PSPP’s language are given later in this manual.

PSPP produces tables and charts as output, which it can produce in several formats; currently, ASCII, PostScript, PDF, HTML, DocBook and TeX are supported.

The current version of PSPP, 2.0.1, is incomplete in terms of its statistical procedure support. PSPP is a work in progress. The authors hope to fully support all features in the products that PSPP replaces, eventually. The authors welcome questions, comments, donations, and code submissions. See Submitting Bug Reports, for instructions on contacting the authors.

2 Your rights and obligations

PSPP is not in the public domain. It is copyrighted and there are restrictions on its distribution, but these restrictions are designed to permit everything that a good cooperating citizen would want to do. What is not allowed is to try to prevent others from further sharing any version of this program that they might get from you.

Specifically, we want to make sure that you have the right to give away copies of PSPP, that you receive source code or else can get it if you want it, that you can change these programs or use pieces of them in new free programs, and that you know you can do these things.

To make sure that everyone has such rights, we have to forbid you to deprive anyone else of these rights. For example, if you distribute copies of PSPP, you must give the recipients all the rights that you have. You must make sure that they, too, receive or can get the source code. And you must tell them their rights.

Also, for our own protection, we must make certain that everyone finds out that there is no warranty for PSPP. If these programs are modified by someone else and passed on, we want their recipients to know that what they have is not what we distributed, so that any problems introduced by others will not reflect on our reputation.

Finally, any free program is threatened constantly by software patents. We wish to avoid the danger that redistributors of a free program will individually obtain patent licenses, in effect making the program proprietary. To prevent this, we have made it clear that any patent must be licensed for everyone’s free use or not licensed at all.

The precise conditions of the license for PSPP are found in the GNU General Public License. You should have received a copy of the GNU General Public License along with this program; if not, write to the Free Software Foundation, Inc., 51 Franklin Street, Fifth Floor, Boston, MA 02110-1301 USA. This manual specifically is covered by the GNU Free Documentation License (see GNU Free Documentation License).

3 Invoking pspp

PSPP has two separate user interfaces. This chapter describes

pspp, PSPP’s command-line driven text-based user interface.

The following chapter briefly describes PSPPIRE, the graphical user

interface to PSPP.

The sections below describe the pspp program’s command-line

interface.

- Main Options

- PDF, PostScript, SVG, and PNG Output Options

- Plain Text Output Options

- SPV Output Options

- TeX Output Options

- HTML Output Options

- OpenDocument Output Options

- Comma-Separated Value Output Options

3.1 Main Options

Here is a summary of all the options, grouped by type, followed by explanations in the same order.

In the table, arguments to long options also apply to any corresponding short options.

- Non-option arguments

syntax-file

- Output options

-o, --output=output-file -O option=value -O format=format -O device={terminal|listing} --no-output --table-look=file -e, --error-file=error-file- Language options

-I, --include=dir -I-, --no-include -b, --batch -i, --interactive -r, --no-statrc -a, --algorithm={compatible|enhanced} -x, --syntax={compatible|enhanced} --syntax-encoding=encoding- Informational options

-h, --help -V, --version

- Other options

-s, --safer --testing-mode

- syntax-file

Read and execute the named syntax file. If no syntax files are specified, PSPP prompts for commands. If any syntax files are specified, PSPP by default exits after it runs them, but you may make it prompt for commands by specifying ‘-’ as an additional syntax file.

- -o output-file

Write output to output-file. PSPP has several different output drivers that support output in various formats (use --help to list the available formats). Specify this option more than once to produce multiple output files, presumably in different formats.

Use ‘-’ as output-file to write output to standard output.

If no -o option is used, then PSPP writes text and CSV output to standard output and other kinds of output to whose name is based on the format, e.g. pspp.pdf for PDF output.

- -O option=value

Sets an option for the output file configured by a preceding -o. Most options are specific to particular output formats. A few options that apply generically are listed below.

- -O format=format

PSPP uses the extension of the file name given on -o to select an output format. Use this option to override this choice by specifying an alternate format, e.g. -o pspp.out -O format=html to write HTML to a file named pspp.out. Use --help to list the available formats.

- -O device={terminal|listing}

Sets whether PSPP considers the output device configured by the preceding -o to be a terminal or a listing device. This affects what output will be sent to the device, as configured by the SET command’s output routing subcommands (see SET). By default, output written to standard output is considered a terminal device and other output is considered a listing device.

- --no-output

Disables output entirely, if neither -o nor -O is also used. If one of those options is used, --no-output has no effect.

- --table-look=file

Reads a table style from file and applies it to all PSPP table output. The file should be a TableLook .stt or .tlo file. PSPP searches for file in the current directory, then in .pspp/looks in the user’s home directory, then in a looks subdirectory inside PSPP’s data directory (usually /usr/local/share/pspp). If PSPP cannot find file under the given name, it also tries adding a .stt extension.

When this option is not specified, PSPP looks for default.stt using the algorithm above, and otherwise it falls back to a default built-in style.

Using

SET TLOOKin PSPP syntax overrides the style set on the command line (see SET).- -e error-file

- --error-file=error-file

Configures a file to receive PSPP error, warning, and note messages in plain text format. Use ‘-’ as error-file to write messages to standard output. The default error file is standard output in the absence of these options, but this is suppressed if an output device writes to standard output (or another terminal), to avoid printing every message twice. Use ‘none’ as error-file to explicitly suppress the default.

- -I dir

- --include=dir

Appends dir to the set of directories searched by the

INCLUDE(see INCLUDE) andINSERT(see INSERT) commands.- -I-

- --no-include

Clears all directories from the include path, including directories inserted in the include path by default. The default include path is . (the current directory), followed by .pspp in the user’s home directory, followed by PSPP’s system configuration directory (usually /etc/pspp or /usr/local/etc/pspp).

- -b

- --batch

- -i

- --interactive

These options forces syntax files to be interpreted in batch mode or interactive mode, respectively, rather than the default “auto” mode. See Syntax Variants, for a description of the differences.

- -r

- --no-statrc

By default, at startup PSPP searches for a file named rc in the include path (described above) and, if it finds one, runs the commands in it. This option disables this behavior.

- -a {enhanced|compatible}

- --algorithm={enhanced|compatible}

With

enhanced, the default, PSPP uses the best implemented algorithms for statistical procedures. Withcompatible, however, PSPP will in some cases use inferior algorithms to produce the same results as the proprietary program SPSS.Some commands have subcommands that override this setting on a per command basis.

- -x {enhanced|compatible}

- --syntax={enhanced|compatible}

With

enhanced, the default, PSPP accepts its own extensions beyond those compatible with the proprietary program SPSS. Withcompatible, PSPP rejects syntax that uses these extensions.- --syntax-encoding=encoding

Specifies encoding as the encoding for syntax files named on the command line. The encoding also becomes the default encoding for other syntax files read during the PSPP session by the

INCLUDEandINSERTcommands. See INSERT, for the accepted forms of encoding.- --help

Prints a message describing PSPP command-line syntax and the available device formats, then exits.

- -V

- --version

Prints a brief message listing PSPP’s version, warranties you don’t have, copying conditions and copyright, and e-mail address for bug reports, then exits.

- -s

- --safer

Disables certain unsafe operations. This includes the

ERASEandHOSTcommands, as well as use of pipes as input and output files.- --testing-mode

Invoke heuristics to assist with testing PSPP. For use by

make checkand similar scripts.

3.2 PDF, PostScript, SVG, and PNG Output Options

To produce output in PDF, PostScript, SVG, or PNG format, specify -o file on the PSPP command line, optionally followed by any of the options shown in the table below to customize the output format.

PDF, PostScript, and SVG use real units: each dimension among the options listed below may have a suffix ‘mm’ for millimeters, ‘in’ for inches, or ‘pt’ for points. Lacking a suffix, numbers below 50 are assumed to be in inches and those above 50 are assumed to be in millimeters.

PNG files are pixel-based, so dimensions in PNG output must ultimately be measured in pixels. For output to these files, PSPP translates the specified dimensions to pixels at 72 pixels per inch. For PNG output only, fonts are by default rendered larger than this, at 96 pixels per inch.

An SVG or PNG file can only hold a single page. When PSPP outputs

more than one page to SVG or PNG, it creates multiple files. It

outputs the second page to a file named with a -2 suffix, the

third with a -3 suffix, and so on.

- -O format={pdf|ps|svg|png}

Specify the output format. This is only necessary if the file name given on -o does not end in .pdf, .ps, .svg, or .png.

- -O paper-size=paper-size

Paper size, as a name (e.g.

a4,letter) or measurements (e.g.210x297,8.5x11in).The default paper size is taken from the

PAPERSIZEenvironment variable or the file indicated by thePAPERCONFenvironment variable, if either variable is set. If not, and your system supports theLC_PAPERlocale category, then the default paper size is taken from the locale. Otherwise, if /etc/papersize exists, the default paper size is read from it. As a last resort, A4 paper is assumed.- -O foreground-color=color

Sets color as the default color for lines and text. Use a CSS color format (e.g.

#rrggbb) or name (e.g.black) as color.- -O orientation=orientation

Either

portraitorlandscape. Default:portrait.- -O left-margin=dimension

- -O right-margin=dimension

- -O top-margin=dimension

- -O bottom-margin=dimension

Sets the margins around the page. See below for the allowed forms of dimension. Default:

0.5in.- -O object-spacing=dimension

Sets the amount of vertical space between objects (such as headings or tables).

- -O prop-font=font-name

Sets the default font used for ordinary text. Most systems support CSS-like font names such as “Sans Serif”, but a wide range of system-specific fonts are likely to be supported as well.

Default: proportional font

Sans Serif.- -O font-size=font-size

Sets the size of the default fonts, in thousandths of a point. Default: 10000 (10 point).

- -O trim=true

This option makes PSPP trim empty space around each page of output, before adding the margins. This can make the output easier to include in other documents.

- -O outline=boolean

For PDF output only, this option controls whether PSPP includes an outline in the output file. PDF viewers usually display the outline as a side bar that allows for easy navigation of the file. The default is true unless -O trim=true is also specified. (The Cairo graphics library that PSPP uses to produce PDF output has a bug that can cause a crash when outlines and trimming are used together.)

- -O font-resolution=dpi

Sets the resolution for font rendering, in dots per inch. For PDF, PostScript, and SVG output, the default is 72 dpi, so that a 10-point font is rendered with a height of 10 points. For PNG output, the default is 96 dpi, so that a 10-point font is rendered with a height of 10 / 72 * 96 = 13.3 pixels. Use a larger dpi to enlarge text output, or a smaller dpi to shrink it.

3.3 Plain Text Output Options

PSPP can produce plain text output, drawing boxes using ASCII or Unicode line drawing characters. To produce plain text output, specify -o file on the PSPP command line, optionally followed by options from the table below to customize the output format.

Plain text output is encoded in UTF-8.

- -O format=txt

Specify the output format. This is only necessary if the file name given on -o does not end in .txt or .list.

- -O charts={template.png|none}

Name for chart files included in output. The value should be a file name that includes a single ‘#’ and ends in png. When a chart is output, the ‘#’ is replaced by the chart number. The default is the file name specified on -o with the extension stripped off and replaced by -#.png.

Specify

noneto disable chart output.- -O foreground-color=color

- -O background-color=color

Sets color as the color to be used for the background or foreground to be used for charts. Color should be given in the format

#RRRRGGGGBBBB, where RRRR, GGGG and BBBB are 4 character hexadecimal representations of the red, green and blue components respectively. If charts are disabled, this option has no effect.- -O width=columns

Width of a page, in columns. If unspecified or given as

auto, the default is the width of the terminal, for interactive output, or the WIDTH setting (see SET), for output to a file.- -O box={ascii|unicode}

Sets the characters used for lines in tables. If set to

ascii, output uses use the characters ‘-’, ‘|’, and ‘+’ for single-width lines and ‘=’ and ‘#’ for double-width lines. If set tounicodethen, output uses Unicode box drawing characters. The default isunicodeif the locale’s character encoding is "UTF-8" orasciiotherwise.- -O emphasis={none|bold|underline}

How to emphasize text. Bold and underline emphasis are achieved with overstriking, which may not be supported by all the software to which you might pass the output. Default:

none.

3.4 SPV Output Options

SPSS 16 and later write .spv files to represent the contents of its output editor. To produce output in .spv format, specify -o file on the PSPP command line, optionally followed by any of the options shown in the table below to customize the output format.

- -O format=spv

Specify the output format. This is only necessary if the file name given on -o does not end in .spv.

- -O paper-size=paper-size

- -O left-margin=dimension

- -O right-margin=dimension

- -O top-margin=dimension

- -O bottom-margin=dimension

- -O object-spacing=dimension

These have the same syntax and meaning as for PDF output. See PDF, PostScript, SVG, and PNG Output Options, for details.

3.5 TeX Output Options

If you want to publish statistical results in professional or academic journals, you will probably want to provide results in TeX format. To do this, specify -o file on the PSPP command line where file is a file name ending in .tex, or you can specify -O format=tex.

The resulting file can be directly processed using TeX or you can manually edit the file to add commentary text. Alternatively, you can cut and paste desired sections to another TeX file.

3.6 HTML Output Options

To produce output in HTML format, specify -o file on the PSPP command line, optionally followed by any of the options shown in the table below to customize the output format.

- -O format=html

Specify the output format. This is only necessary if the file name given on -o does not end in .html.

- -O charts={template.png|none}

Sets the name used for chart files. See Plain Text Output Options, for details.

- -O borders=boolean

Decorate the tables with borders. If set to false, the tables produced will have no borders. The default value is true.

- -O bare=boolean

The HTML output driver ordinarily outputs a complete HTML document. If set to true, the driver instead outputs only what would normally be the contents of the

bodyelement. The default value is false.- -O css=boolean

Use cascading style sheets. Cascading style sheets give an improved appearance and can be used to produce pages which fit a certain web site’s style. The default value is true.

3.7 OpenDocument Output Options

To produce output as an OpenDocument text (ODT) document, specify -o file on the PSPP command line. If file does not end in .odt, you must also specify -O format=odt.

ODT support is only available if your installation of PSPP was compiled with the libxml2 library.

The OpenDocument output format does not have any configurable options.

3.8 Comma-Separated Value Output Options

To produce output in comma-separated value (CSV) format, specify -o file on the PSPP command line, optionally followed by any of the options shown in the table below to customize the output format.

- -O format=csv

Specify the output format. This is only necessary if the file name given on -o does not end in .csv.

- -O separator=field-separator

Sets the character used to separate fields. Default: a comma (‘,’).

- -O quote=qualifier

Sets qualifier as the character used to quote fields that contain white space, the separator (or any of the characters in the separator, if it contains more than one character), or the quote character itself. If qualifier is longer than one character, only the first character is used; if qualifier is the empty string, then fields are never quoted.

- -O titles=boolean

Whether table titles (brief descriptions) should be printed. Default:

on.- -O captions=boolean

Whether table captions (more extensive descriptions) should be printed. Default: on.

The CSV format used is an extension to that specified in RFC 4180:

- Tables

Each table row is output on a separate line, and each column is output as a field. The contents of a cell that spans multiple rows or columns is output only for the top-left row and column; the rest are output as empty fields.

- Titles

When a table has a title and titles are enabled, the title is output just above the table as a single field prefixed by ‘Table:’.

- Captions

When a table has a caption and captions are enabled, the caption is output just below the table as a single field prefixed by ‘Caption:’.

- Footnotes

Within a table, footnote markers are output as bracketed letters following the cell’s contents, e.g. ‘[a]’, ‘[b]’, ... The footnotes themselves are output following the body of the table, as a separate two-column table introduced with a line that says ‘Footnotes:’. Each row in the table represent one footnote: the first column is the marker, the second column is the text.

- Text

Text in output is printed as a field on a line by itself. The TITLE and SUBTITLE produce similar output, prefixed by ‘Title:’ or ‘Subtitle:’, respectively.

- Messages

Errors, warnings, and notes are printed the same way as text.

- Charts

Charts are not included in CSV output.

Successive output items are separated by a blank line.

4 Invoking psppire

4.1 The graphic user interface

The PSPPIRE graphic user interface for PSPP can perform all functionality of the command line interface. In addition it gives an instantaneous view of the data, variables and statistical output.

The graphic user interface can be started by typing psppire at a

command prompt.

Alternatively many systems have a system of interactive menus or buttons

from which psppire can be started by a series of mouse clicks.

Once the principles of the PSPP system are understood, the graphic user interface is designed to be largely intuitive, and for this reason is covered only very briefly by this manual.

5 Using PSPP

PSPP is a tool for the statistical analysis of sampled data. You can use it to discover patterns in the data, to explain differences in one subset of data in terms of another subset and to find out whether certain beliefs about the data are justified. This chapter does not attempt to introduce the theory behind the statistical analysis, but it shows how such analysis can be performed using PSPP.

For the purposes of this tutorial, it is assumed that you are using PSPP in its interactive mode from the command line. However, the example commands can also be typed into a file and executed in a post-hoc mode by typing ‘pspp file-name’ at a shell prompt, where file-name is the name of the file containing the commands. Alternatively, from the graphical interface, you can select File → New → Syntax to open a new syntax window and use the Run menu when a syntax fragment is ready to be executed. Whichever method you choose, the syntax is identical.

When using the interactive method, PSPP tells you that it’s waiting for your data with a string like PSPP> or data>. In the examples of this chapter, whenever you see text like this, it indicates the prompt displayed by PSPP, not something that you should type.

Throughout this chapter reference is made to a number of sample data files. So that you can try the examples for yourself, you should have received these files along with your copy of PSPP.1

Please note: Normally these files are installed in the directory //share/pspp/examples. If however your system administrator or operating system vendor has chosen to install them in a different location, you will have to adjust the examples accordingly.

5.1 Preparation of Data Files

Before analysis can commence, the data must be loaded into PSPP and arranged such that both PSPP and humans can understand what the data represents. There are two aspects of data:

- The variables — these are the parameters of a quantity which has been measured or estimated in some way. For example height, weight and geographic location are all variables.

- The observations (also called ‘cases’) of the variables — each observation represents an instance when the variables were measured or observed.

For example, a data set which has the variables height, weight, and name, might have the observations:

1881 89.2 Ahmed 1192 107.01 Frank 1230 67 Julie

The following sections explain how to define a dataset.

- Defining Variables

- Listing the data

- Reading data from a text file

- Reading data from a pre-prepared PSPP file

- Saving data to a PSPP file.

- Reading data from other sources

- Exiting PSPP

5.1.1 Defining Variables

Variables come in two basic types, viz: numeric and string. Variables such as age, height and satisfaction are numeric, whereas name is a string variable. String variables are best reserved for commentary data to assist the human observer. However they can also be used for nominal or categorical data.

The following example defines two variables forename and height, and reads data into them by manual input:

PSPP> data list list /forename (A12) height. PSPP> begin data. data> Ahmed 188 data> Bertram 167 data> Catherine 134.231 data> David 109.1 data> end data PSPP>

There are several things to note about this example.

- The words ‘data list list’ are an example of the

DATA LISTcommand. See DATA LIST. It tells PSPP to prepare for reading data. The word ‘list’ intentionally appears twice. The first occurrence is part of theDATA LISTcall, whilst the second tells PSPP that the data is to be read as free format data with one record per line. - The ‘/’ character is important. It marks the start of the list of variables which you wish to define.

- The text ‘forename’ is the name of the first variable, and ‘(A12)’ says that the variable forename is a string variable and that its maximum length is 12 bytes. The second variable’s name is specified by the text ‘height’. Since no format is given, this variable has the default format. Normally the default format expects numeric data, which should be entered in the locale of the operating system. Thus, the example is correct for English locales and other locales which use a period (‘.’) as the decimal separator. However if you are using a system with a locale which uses the comma (‘,’) as the decimal separator, then you should in the subsequent lines substitute ‘.’ with ‘,’. Alternatively, you could explicitly tell PSPP that the height variable is to be read using a period as its decimal separator by appending the text ‘DOT8.3’ after the word ‘height’. For more information on data formats, see Input and Output Formats.

- Normally, PSPP displays the prompt PSPP> whenever it’s expecting a command. However, when it’s expecting data, the prompt changes to data> so that you know to enter data and not a command.

- At the end of every command there is a terminating ‘.’ which tells PSPP that the end of a command has been encountered. You should not enter ‘.’ when data is expected (ie. when the data> prompt is current) since it is appropriate only for terminating commands.

5.1.2 Listing the data

Once the data has been entered, you could type

PSPP> list /format=numbered.

to list the data. The optional text ‘/format=numbered’ requests the case numbers to be shown along with the data. It should show the following output:

|

Note that the numeric variable height is displayed to 2 decimal

places, because the format for that variable is ‘F8.2’.

For a complete description of the LIST command, see LIST.

5.1.3 Reading data from a text file

The previous example showed how to define a set of variables and to manually enter the data for those variables. Manual entering of data is tedious work, and often a file containing the data will be have been previously prepared. Let us assume that you have a file called mydata.dat containing the ascii encoded data:

Ahmed 188.00 Bertram 167.00 Catherine 134.23 David 109.10 . . . Zachariah 113.02

You can can tell the DATA LIST command to read the data directly from

this file instead of by manual entry, with a command like:

PSPP> data list file='mydata.dat' list /forename (A12) height.

Notice however, that it is still necessary to specify the names of the variables and their formats, since this information is not contained in the file. It is also possible to specify the file’s character encoding and other parameters. For full details refer to see DATA LIST.

5.1.4 Reading data from a pre-prepared PSPP file

When working with other PSPP users, or users of other software which uses the PSPP data format, you may be given the data in a pre-prepared PSPP file. Such files contain not only the data, but the variable definitions, along with their formats, labels and other meta-data. Conventionally, these files (sometimes called “system” files) have the suffix .sav, but that is not mandatory. The following syntax loads a file called my-file.sav.

PSPP> get file='my-file.sav'.

You will encounter several instances of this in future examples.

5.1.5 Saving data to a PSPP file.

If you want to save your data, along with the variable definitions so

that you or other PSPP users can use it later, you can do this with

the SAVE command.

The following syntax will save the existing data and variables to a file called my-new-file.sav.

PSPP> save outfile='my-new-file.sav'.

If my-new-file.sav already exists, then it will be overwritten. Otherwise it will be created.

5.1.6 Reading data from other sources

Sometimes it’s useful to be able to read data from comma

separated text, from spreadsheets, databases or other sources.

In these instances you should

use the GET DATA command (see GET DATA).

5.1.7 Exiting PSPP

Use the FINISH command to exit PSPP:

PSPP> finish.

5.2 Data Screening and Transformation

Once data has been entered, it is often desirable, or even necessary, to transform it in some way before performing analysis upon it. At the very least, it’s good practice to check for errors.

- Identifying incorrect data

- Dealing with suspicious data

- Inverting negatively coded variables

- Testing data consistency

- Testing for normality

5.2.1 Identifying incorrect data

Data from real sources is rarely error free. PSPP has a number of procedures which can be used to help identify data which might be incorrect.

The DESCRIPTIVES command (see DESCRIPTIVES) is used to generate

simple linear statistics for a dataset. It is also useful for

identifying potential problems in the data.

The example file physiology.sav contains a number of physiological

measurements of a sample of healthy adults selected at random.

However, the data entry clerk made a number of mistakes when entering

the data.

The following example illustrates the use of DESCRIPTIVES to screen this

data and identify the erroneous values:

PSPP> get file='//share/pspp/examples/physiology.sav'. PSPP> descriptives sex, weight, height.

For this example, PSPP produces the following output:

|

The most interesting column in the output is the minimum value. The weight variable has a minimum value of less than zero, which is clearly erroneous. Similarly, the height variable’s minimum value seems to be very low. In fact, it is more than 5 standard deviations from the mean, and is a seemingly bizarre height for an adult person.

We can look deeper into these discrepancies by issuing an additional

EXAMINE command:

PSPP> examine height, weight /statistics=extreme(3).

This command produces the following additional output (in part):

|

From this new output, you can see that the lowest value of height is

179 (which we suspect to be erroneous), but the second lowest is 1598

which

we know from DESCRIPTIVES

is within 1 standard deviation from the mean.

Similarly, the lowest value of weight is

negative, but its second lowest value is plausible.

This suggests that the two extreme values are outliers and probably

represent data entry errors.

The output also identifies the case numbers for each extreme value, so we can see that cases 30 and 38 are the ones with the erroneous values.

5.2.2 Dealing with suspicious data

If possible, suspect data should be checked and re-measured.

However, this may not always be feasible, in which case the researcher may

decide to disregard these values.

PSPP has a feature whereby data can assume the special value ‘SYSMIS’, and

will be disregarded in future analysis. See Handling missing observations.

You can set the two suspect values to the ‘SYSMIS’ value using the RECODE

command.

PSPP> recode height (179 = SYSMIS). PSPP> recode weight (LOWEST THRU 0 = SYSMIS).

The first command says that for any observation which has a

height value of 179, that value should be changed to the SYSMIS

value.

The second command says that any weight values of zero or less

should be changed to SYSMIS.

From now on, they will be ignored in analysis.

For detailed information about the RECODE command see RECODE.

If you now re-run the DESCRIPTIVES or EXAMINE commands from

the previous section,

you will see a data summary with more plausible parameters.

You will also notice that the data summaries indicate the two missing values.

5.2.3 Inverting negatively coded variables

Data entry errors are not the only reason for wanting to recode data. The sample file hotel.sav comprises data gathered from a customer satisfaction survey of clients at a particular hotel. The following commands load the file and display its variables and associated data:

PSPP> get file='//share/pspp/examples/hotel.sav'. PSPP> display dictionary.

It yields the following output:

| ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

The output shows that all of the variables v1 through v5 are measured on a 5 point Likert scale,

with 1 meaning “Strongly disagree” and 5 meaning “Strongly agree”.

However, some of the questions are positively worded (v1, v2, v4) and others are negatively worded (v3, v5).

To perform meaningful analysis, we need to recode the variables so

that they all measure in the same direction.

We could use the RECODE command, with syntax such as:

recode v3 (1 = 5) (2 = 4) (4 = 2) (5 = 1).

However an easier and more elegant way uses the COMPUTE

command (see COMPUTE).

Since the variables are Likert variables in the range (1 … 5),

subtracting their value from 6 has the effect of inverting them:

compute var = 6 - var.

The following section uses this technique to recode the variables

v3 and v5.

After applying COMPUTE for both variables,

all subsequent commands will use the inverted values.

5.2.4 Testing data consistency

A sensible check to perform on survey data is the calculation of

reliability.

This gives the statistician some confidence that the questionnaires have been

completed thoughtfully.

If you examine the labels of variables v1, v3 and v4,

you will notice that they ask very similar questions.

One would therefore expect the values of these variables (after recoding)

to closely follow one another, and we can test that with the RELIABILITY

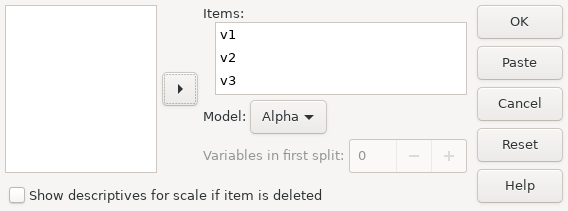

command (see RELIABILITY).

The following example shows a PSPP session where the user recodes

negatively scaled variables and then requests reliability statistics for

v1, v3, and v4.

PSPP> get file='//share/pspp/examples/hotel.sav'. PSPP> compute v3 = 6 - v3. PSPP> compute v5 = 6 - v5. PSPP> reliability v1, v3, v4.

This yields the following output:

|

Scale: ANY

|

As a rule of thumb, many statisticians consider a value of Cronbach’s Alpha of 0.7 or higher to indicate reliable data.

Here, the value is 0.81, which suggests a high degree of reliability among variables v1, v3 and v4, so the data and the recoding that we performed are vindicated.

5.2.5 Testing for normality

Many statistical tests rely upon certain properties of the data.

One common property, upon which many linear tests depend, is that of

normality — the data must have been drawn from a normal distribution.

It is necessary then to ensure normality before deciding upon the

test procedure to use. One way to do this uses the EXAMINE command.

In the following example, a researcher was examining the failure rates of equipment produced by an engineering company. The file repairs.sav contains the mean time between failures (mtbf) of some items of equipment subject to the study. Before performing linear analysis on the data, the researcher wanted to ascertain that the data is normally distributed.

PSPP> get file='//share/pspp/examples/repairs.sav'. PSPP> examine mtbf /statistics=descriptives.

This produces the following output:

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||

A normal distribution has a skewness and kurtosis of zero. The skewness of mtbf in the output above makes it clear that the mtbf figures have a lot of positive skew and are therefore not drawn from a normally distributed variable. Positive skew can often be compensated for by applying a logarithmic transformation, as in the following continuation of the example:

PSPP> compute mtbf_ln = ln (mtbf). PSPP> examine mtbf_ln /statistics=descriptives.

which produces the following additional output:

| |||||||||||||||||||||||||||||||||||||||||||||||||||||||||

The COMPUTE command in the first line above performs the

logarithmic transformation:

compute mtbf_ln = ln (mtbf).

Rather than redefining the existing variable, this use of COMPUTE

defines a new variable mtbf_ln which is

the natural logarithm of mtbf.

The final command in this example calls EXAMINE on this new variable.

The results show that both the skewness and

kurtosis for mtbf_ln are very close to zero.

This provides some confidence that the mtbf_ln variable is

normally distributed and thus safe for linear analysis.

In the event that no suitable transformation can be found,

then it would be worth considering

an appropriate non-parametric test instead of a linear one.

See NPAR TESTS, for information about non-parametric tests.

5.3 Hypothesis Testing

One of the most fundamental purposes of statistical analysis is hypothesis testing. Researchers commonly need to test hypotheses about a set of data. For example, she might want to test whether one set of data comes from the same distribution as another, or whether the mean of a dataset significantly differs from a particular value. This section presents just some of the possible tests that PSPP offers.

The researcher starts by making a null hypothesis. Often this is a hypothesis which he suspects to be false. For example, if he suspects that A is greater than B he will state the null hypothesis as A = B.2

The p-value is a recurring concept in hypothesis testing. It is the highest acceptable probability that the evidence implying a null hypothesis is false, could have been obtained when the null hypothesis is in fact true. Note that this is not the same as “the probability of making an error” nor is it the same as “the probability of rejecting a hypothesis when it is true”.

5.3.1 Testing for differences of means

A common statistical test involves hypotheses about means.

The T-TEST command is used to find out whether or not two separate

subsets have the same mean.

A researcher suspected that the heights and core body temperature of persons might be different depending upon their sex. To investigate this, he posed two null hypotheses based on the data from physiology.sav previously encountered:

- The mean heights of males and females in the population are equal.

- The mean body temperature of males and females in the population are equal.

For the purposes of the investigation the researcher decided to use a p-value of 0.05.

In addition to the T-test, the T-TEST command also performs the

Levene test for equal variances.

If the variances are equal, then a more powerful form of the T-test can be used.

However if it is unsafe to assume equal variances,

then an alternative calculation is necessary.

PSPP performs both calculations.

For the height variable, the output shows the significance of the Levene test to be 0.33 which means there is a 33% probability that the Levene test produces this outcome when the variances are equal. Had the significance been less than 0.05, then it would have been unsafe to assume that the variances were equal. However, because the value is higher than 0.05 the homogeneity of variances assumption is safe and the “Equal Variances” row (the more powerful test) can be used. Examining this row, the two tailed significance for the height t-test is less than 0.05, so it is safe to reject the null hypothesis and conclude that the mean heights of males and females are unequal.

For the temperature variable, the significance of the Levene test is 0.58 so again, it is safe to use the row for equal variances. The equal variances row indicates that the two tailed significance for temperature is 0.20. Since this is greater than 0.05 we must reject the null hypothesis and conclude that there is insufficient evidence to suggest that the body temperature of male and female persons are different.

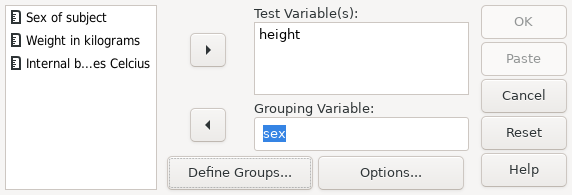

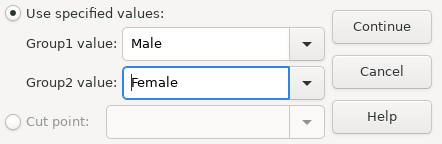

The syntax for this analysis is:

PSPP> get file='//share/pspp/examples/physiology.sav'. PSPP> recode height (179 = SYSMIS). PSPP> t-test group=sex(0,1) /variables = height temperature.

PSPP produces the following output for this syntax:

| ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

The T-TEST command tests for differences of means.

Here, the height variable’s two tailed significance is less than

0.05, so the null hypothesis can be rejected.

Thus, the evidence suggests there is a difference between the heights of

male and female persons.

However the significance of the test for the temperature

variable is greater than 0.05 so the null hypothesis cannot be

rejected, and there is insufficient evidence to suggest a difference

in body temperature.

5.3.2 Linear Regression

Linear regression is a technique used to investigate if and how a variable is linearly related to others. If a variable is found to be linearly related, then this can be used to predict future values of that variable.

In the following example, the service department of the company wanted to

be able to predict the time to repair equipment, in order to improve

the accuracy of their quotations.

It was suggested that the time to repair might be related to the time

between failures and the duty cycle of the equipment.

The p-value of 0.1 was chosen for this investigation.

In order to investigate this hypothesis, the REGRESSION command

was used.

This command not only tests if the variables are related, but also

identifies the potential linear relationship. See REGRESSION.

A first attempt includes duty_cycle:

PSPP> get file='//share/pspp/examples/repairs.sav'. PSPP> regression /variables = mtbf duty_cycle /dependent = mttr.

This attempt yields the following output (in part):

| ||||||||||||||||||||||||||||

The coefficients in the above table suggest that the formula mttr = 9.81 + 3.1 \times mtbf + 1.09 \times duty_cycle can be used to predict the time to repair. However, the significance value for the duty_cycle coefficient is very high, which would make this an unsafe predictor. For this reason, the test was repeated, but omitting the duty_cycle variable:

PSPP> regression /variables = mtbf /dependent = mttr.

This second try produces the following output (in part):

| ||||||||||||||||||||||

This time, the significance of all coefficients is no higher than 0.06, suggesting that at the 0.06 level, the formula mttr = 10.5 + 3.11 \times mtbf is a reliable predictor of the time to repair.

6 The PSPP language

This chapter discusses elements common to many PSPP commands. Later chapters describe individual commands in detail.

- Tokens

- Forming commands of tokens

- Syntax Variants

- Types of Commands

- Order of Commands

- Handling missing observations

- Datasets

- Files Used by PSPP

- File Handles

- Backus-Naur Form

6.1 Tokens

PSPP divides most syntax file lines into series of short chunks called tokens. Tokens are then grouped to form commands, each of which tells PSPP to take some action—read in data, write out data, perform a statistical procedure, etc. Each type of token is described below.

- Identifiers

Identifiers are names that typically specify variables, commands, or subcommands. The first character in an identifier must be a letter, ‘#’, or ‘@’. The remaining characters in the identifier must be letters, digits, or one of the following special characters:

. _ $ # @Identifiers may be any length, but only the first 64 bytes are significant. Identifiers are not case-sensitive:

foobar,Foobar,FooBar,FOOBAR, andFoObaRare different representations of the same identifier.Some identifiers are reserved. Reserved identifiers may not be used in any context besides those explicitly described in this manual. The reserved identifiers are:

ALL AND BY EQ GE GT LE LT NE NOT OR TO WITH- Keywords

Keywords are a subclass of identifiers that form a fixed part of command syntax. For example, command and subcommand names are keywords. Keywords may be abbreviated to their first 3 characters if this abbreviation is unambiguous. (Unique abbreviations of 3 or more characters are also accepted: ‘FRE’, ‘FREQ’, and ‘FREQUENCIES’ are equivalent when the last is a keyword.)

Reserved identifiers are always used as keywords. Other identifiers may be used both as keywords and as user-defined identifiers, such as variable names.

- Numbers ¶

-

Numbers are expressed in decimal. A decimal point is optional. Numbers may be expressed in scientific notation by adding ‘e’ and a base-10 exponent, so that ‘1.234e3’ has the value 1234. Here are some more examples of valid numbers:

-5 3.14159265359 1e100 -.707 8945.

Negative numbers are expressed with a ‘-’ prefix. However, in situations where a literal ‘-’ token is expected, what appears to be a negative number is treated as ‘-’ followed by a positive number.

No white space is allowed within a number token, except for horizontal white space between ‘-’ and the rest of the number.

The last example above, ‘8945.’ is interpreted as two tokens, ‘8945’ and ‘.’, if it is the last token on a line. See Forming commands of tokens.

- Strings ¶

-

Strings are literal sequences of characters enclosed in pairs of single quotes (‘'’) or double quotes (‘"’). To include the character used for quoting in the string, double it, e.g. ‘'it''s an apostrophe'’. White space and case of letters are significant inside strings.

Strings can be concatenated using ‘+’, so that ‘"a" + 'b' + 'c'’ is equivalent to ‘'abc'’. So that a long string may be broken across lines, a line break may precede or follow, or both precede and follow, the ‘+’. (However, an entirely blank line preceding or following the ‘+’ is interpreted as ending the current command.)

Strings may also be expressed as hexadecimal character values by prefixing the initial quote character by ‘x’ or ‘X’. Regardless of the syntax file or active dataset’s encoding, the hexadecimal digits in the string are interpreted as Unicode characters in UTF-8 encoding.

Individual Unicode code points may also be expressed by specifying the hexadecimal code point number in single or double quotes preceded by ‘u’ or ‘U’. For example, Unicode code point U+1D11E, the musical G clef character, could be expressed as

U'1D11E'. Invalid Unicode code points (above U+10FFFF or in between U+D800 and U+DFFF) are not allowed.When strings are concatenated with ‘+’, each segment’s prefix is considered individually. For example,

'The G clef symbol is:' + u"1d11e" + "."inserts a G clef symbol in the middle of an otherwise plain text string. - Punctuators and Operators ¶

-

These tokens are the punctuators and operators:

, / = ( ) + - * / ** < <= <> > >= ~= & | .Most of these appear within the syntax of commands, but the period (‘.’) punctuator is used only at the end of a command. It is a punctuator only as the last character on a line (except white space). When it is the last non-space character on a line, a period is not treated as part of another token, even if it would otherwise be part of, e.g., an identifier or a floating-point number.

6.2 Forming commands of tokens

Most PSPP commands share a common structure. A command begins with a

command name, such as FREQUENCIES, DATA LIST, or N OF

CASES. The command name may be abbreviated to its first word, and

each word in the command name may be abbreviated to its first three

or more characters, where these abbreviations are unambiguous.

The command name may be followed by one or more subcommands. Each subcommand begins with a subcommand name, which may be abbreviated to its first three letters. Some subcommands accept a series of one or more specifications, which follow the subcommand name, optionally separated from it by an equals sign (‘=’). Specifications may be separated from each other by commas or spaces. Each subcommand must be separated from the next (if any) by a forward slash (‘/’).

There are multiple ways to mark the end of a command. The most common way is to end the last line of the command with a period (‘.’) as described in the previous section (see Tokens). A blank line, or one that consists only of white space or comments, also ends a command.

6.3 Syntax Variants

There are three variants of command syntax, which vary only in how they detect the end of one command and the start of the next.

In interactive mode, which is the default for syntax typed at a command prompt, a period as the last non-blank character on a line ends a command. A blank line also ends a command.

In batch mode, an end-of-line period or a blank line also ends a command. Additionally, it treats any line that has a non-blank character in the leftmost column as beginning a new command. Thus, in batch mode the second and subsequent lines in a command must be indented.

Regardless of the syntax mode, a plus sign, minus sign, or period in the leftmost column of a line is ignored and causes that line to begin a new command. This is most useful in batch mode, in which the first line of a new command could not otherwise be indented, but it is accepted regardless of syntax mode.

The default mode for reading commands from a file is auto mode. It is the same as batch mode, except that a line with a non-blank in the leftmost column only starts a new command if that line begins with the name of a PSPP command. This correctly interprets most valid PSPP syntax files regardless of the syntax mode for which they are intended.

The --interactive (or -i) or --batch (or -b) options set the syntax mode for files listed on the PSPP command line. See Main Options, for more details.

6.4 Types of Commands

Commands in PSPP are divided roughly into six categories:

- Utility commands ¶

Set or display various global options that affect PSPP operations. May appear anywhere in a syntax file. See Utility commands.

- File definition commands ¶

Give instructions for reading data from text files or from special binary “system files”. Most of these commands replace any previous data or variables with new data or variables. At least one file definition command must appear before the first command in any of the categories below. See Data Input and Output.

- Input program commands ¶

Though rarely used, these provide tools for reading data files in arbitrary textual or binary formats. See INPUT PROGRAM.

- Transformations ¶

Perform operations on data and write data to output files. Transformations are not carried out until a procedure is executed.

- Restricted transformations ¶

Transformations that cannot appear in certain contexts. See Order of Commands, for details.

- Procedures ¶

Analyze data, writing results of analyses to the listing file. Cause transformations specified earlier in the file to be performed. In a more general sense, a procedure is any command that causes the active dataset (the data) to be read.

6.5 Order of Commands

PSPP does not place many restrictions on ordering of commands. The main restriction is that variables must be defined before they are otherwise referenced. This section describes the details of command ordering, but most users will have no need to refer to them.

PSPP possesses five internal states, called initial, input-program

file-type, transformation, and procedure states. (Please note the

distinction between the INPUT PROGRAM and FILE TYPE

commands and the input-program and file-type states.)

PSPP starts in the initial state. Each successful completion of a command may cause a state transition. Each type of command has its own rules for state transitions:

- Utility commands

- Valid in any state.

- Do not cause state transitions. Exception: when

N OF CASESis executed in the procedure state, it causes a transition to the transformation state.

DATA LIST- Valid in any state.

- When executed in the initial or procedure state, causes a transition to the transformation state.

- Clears the active dataset if executed in the procedure or transformation state.

INPUT PROGRAM- Invalid in input-program and file-type states.

- Causes a transition to the intput-program state.

- Clears the active dataset.

FILE TYPE- Invalid in intput-program and file-type states.

- Causes a transition to the file-type state.

- Clears the active dataset.

- Other file definition commands

- Invalid in input-program and file-type states.

- Cause a transition to the transformation state.

- Clear the active dataset, except for

ADD FILES,MATCH FILES, andUPDATE.

- Transformations

- Invalid in initial and file-type states.

- Cause a transition to the transformation state.

- Restricted transformations

- Invalid in initial, input-program, and file-type states.

- Cause a transition to the transformation state.

- Procedures

- Invalid in initial, input-program, and file-type states.

- Cause a transition to the procedure state.

6.6 Handling missing observations

PSPP includes special support for unknown numeric data values. Missing observations are assigned a special value, called the system-missing value. This “value” actually indicates the absence of a value; it means that the actual value is unknown. Procedures automatically exclude from analyses those observations or cases that have missing values. Details of missing value exclusion depend on the procedure and can often be controlled by the user; refer to descriptions of individual procedures for details.

The system-missing value exists only for numeric variables. String variables always have a defined value, even if it is only a string of spaces.

Variables, whether numeric or string, can have designated user-missing values. Every user-missing value is an actual value for that variable. However, most of the time user-missing values are treated in the same way as the system-missing value.

For more information on missing values, see the following sections: Datasets, MISSING VALUES, Mathematical Expressions. See also the documentation on individual procedures for information on how they handle missing values.

6.7 Datasets

PSPP works with data organized into datasets. A dataset consists of a set of variables, which taken together are said to form a dictionary, and one or more cases, each of which has one value for each variable.

At any given time PSPP has exactly one distinguished dataset, called

the active dataset. Most PSPP commands work only with the

active dataset. In addition to the active dataset, PSPP also supports

any number of additional open datasets. The DATASET commands

can choose a new active dataset from among those that are open, as

well as create and destroy datasets (see DATASET commands).

The sections below describe variables in more detail.

- Attributes of Variables

- Variables Automatically Defined by PSPP

- Lists of variable names

- Input and Output Formats

- Scratch Variables

6.7.1 Attributes of Variables

Each variable has a number of attributes, including:

- Name

An identifier, up to 64 bytes long. Each variable must have a different name. See Tokens.

Some system variable names begin with ‘$’, but user-defined variables’ names may not begin with ‘$’.

The final character in a variable name should not be ‘.’, because such an identifier will be misinterpreted when it is the final token on a line:

FOO.is divided into two separate tokens, ‘FOO’ and ‘.’, indicating end-of-command. See Tokens.The final character in a variable name should not be ‘_’, because some such identifiers are used for special purposes by PSPP procedures.

As with all PSPP identifiers, variable names are not case-sensitive. PSPP capitalizes variable names on output the same way they were capitalized at their point of definition in the input.

- Type

Numeric or string.

- Width

(string variables only) String variables with a width of 8 characters or fewer are called short string variables. Short string variables may be used in a few contexts where long string variables (those with widths greater than 8) are not allowed.

- Position

Variables in the dictionary are arranged in a specific order.

DISPLAYcan be used to show this order: see DISPLAY.- Initialization

Either reinitialized to 0 or spaces for each case, or left at its existing value. See LEAVE.

- Missing values

Optionally, up to three values, or a range of values, or a specific value plus a range, can be specified as user-missing values. There is also a system-missing value that is assigned to an observation when there is no other obvious value for that observation. Observations with missing values are automatically excluded from analyses. User-missing values are actual data values, while the system-missing value is not a value at all. See Handling missing observations.

- Variable label

A string that describes the variable. See VARIABLE LABELS.

- Value label

Optionally, these associate each possible value of the variable with a string. See VALUE LABELS.

- Print format

Display width, format, and (for numeric variables) number of decimal places. This attribute does not affect how data are stored, just how they are displayed. Example: a width of 8, with 2 decimal places. See Input and Output Formats.

- Write format

Similar to print format, but used by the

WRITEcommand (see WRITE).- Measurement level

One of the following:

- Nominal

Each value of a nominal variable represents a distinct category. The possible categories are finite and often have value labels. The order of categories is not significant. Political parties, US states, and yes/no choices are nominal. Numeric and string variables can be nominal.

- Ordinal

Ordinal variables also represent distinct categories, but their values are arranged according to some natural order. Likert scales, e.g. from strongly disagree to strongly agree, are ordinal. Data grouped into ranges, e.g. age groups or income groups, are ordinal. Both numeric and string variables can be ordinal. String values are ordered alphabetically, so letter grades from A to F will work as expected, but

poor,satisfactory,excellentwill not.- Scale

Scale variables are ones for which differences and ratios are meaningful. These are often values which have a natural unit attached, such as age in years, income in dollars, or distance in miles. Only numeric variables are scalar.

Variables created by

COMPUTEand similar transformations, obtained from external sources, etc., initially have an unknown measurement level. Any procedure that reads the data will then assign a default measurement level. PSPP can assign some defaults without reading the data:- Nominal, if it’s a string variable.

- Nominal, if the variable has a WKDAY or MONTH print format.

- Scale, if the variable has a DOLLAR, CCA through CCE, or time or date print format.

Otherwise, PSPP reads the data and decides based on its distribution:

- Nominal, if all observations are missing.

- Scale, if one or more valid observations are noninteger or negative.

- Scale, if no valid observation is less than 10.

- Scale, if the variable has 24 or more unique valid values. The value 24 is the default and can be adjusted (see SET SCALEMIN).

Finally, if none of the above is true, PSPP assigns the variable a nominal measurement level.

- Custom attributes

User-defined associations between names and values. See VARIABLE ATTRIBUTE.

- Role

The intended role of a variable for use in dialog boxes in graphical user interfaces. See VARIABLE ROLE.

6.7.2 Variables Automatically Defined by PSPP

There are seven system variables. These are not like ordinary variables because system variables are not always stored. They can be used only in expressions. These system variables, whose values and output formats cannot be modified, are described below.

$CASENUMCase number of the case at the moment. This changes as cases are shuffled around.

$DATEDate the PSPP process was started, in format A9, following the pattern

DD-MMM-YY.$DATE11Date the PSPP process was started, in format A11, following the pattern

DD-MMM-YYYY.$JDATENumber of days between 15 Oct 1582 and the time the PSPP process was started.

$LENGTHPage length, in lines, in format F11.

$SYSMISSystem missing value, in format F1.

$TIMENumber of seconds between midnight 14 Oct 1582 and the time the active dataset was read, in format F20.

$WIDTHPage width, in characters, in format F3.

6.7.3 Lists of variable names

To refer to a set of variables, list their names one after another.

Optionally, their names may be separated by commas. To include a

range of variables from the dictionary in the list, write the name of

the first and last variable in the range, separated by TO. For

instance, if the dictionary contains six variables with the names

ID, X1, X2, GOAL, MET, and

NEXTGOAL, in that order, then X2 TO MET would include

variables X2, GOAL, and MET.

Commands that define variables, such as DATA LIST, give

TO an alternate meaning. With these commands, TO define

sequences of variables whose names end in consecutive integers. The

syntax is two identifiers that begin with the same root and end with

numbers, separated by TO. The syntax X1 TO X5 defines 5

variables, named X1, X2, X3, X4, and

X5. The syntax ITEM0008 TO ITEM0013 defines 6

variables, named ITEM0008, ITEM0009, ITEM0010,

ITEM0011, ITEM0012, and ITEM00013. The syntaxes

QUES001 TO QUES9 and QUES6 TO QUES3 are invalid.

After a set of variables has been defined with DATA LIST or

another command with this method, the same set can be referenced on

later commands using the same syntax.

6.7.4 Input and Output Formats

An input format describes how to interpret the contents of an

input field as a number or a string. It might specify that the field

contains an ordinary decimal number, a time or date, a number in binary

or hexadecimal notation, or one of several other notations. Input

formats are used by commands such as DATA LIST that read data or

syntax files into the PSPP active dataset.

Every input format corresponds to a default output format that specifies the formatting used when the value is output later. It is always possible to explicitly specify an output format that resembles the input format. Usually, this is the default, but in cases where the input format is unfriendly to human readability, such as binary or hexadecimal formats, the default output format is an easier-to-read decimal format.

Every variable has two output formats, called its print format and

write format. Print formats are used in most output contexts;

write formats are used only by WRITE (see WRITE). Newly

created variables have identical print and write formats, and

FORMATS, the most commonly used command for changing formats

(see FORMATS), sets both of them to the same value as well. Thus,

most of the time, the distinction between print and write formats is

unimportant.

Input and output formats are specified to PSPP with

a format specification of the

form TYPEw or TYPEw.d, where

TYPE is one of the format types described later, w is a

field width measured in columns, and d is an optional number of

decimal places. If d is omitted, a value of 0 is assumed. Some

formats do not allow a nonzero d to be specified.

The following sections describe the input and output formats supported by PSPP.

- Basic Numeric Formats

- Custom Currency Formats

- Legacy Numeric Formats

- Binary and Hexadecimal Numeric Formats

- Time and Date Formats

- Date Component Formats

- String Formats

6.7.4.1 Basic Numeric Formats

The basic numeric formats are used for input and output of real numbers in standard or scientific notation. The following table shows an example of how each format displays positive and negative numbers with the default decimal point setting:

| Format | 3141.59 | -3141.59 |

|---|---|---|

| F8.2 | 3141.59 | -3141.59 |

| COMMA9.2 | 3,141.59 | -3,141.59 |

| DOT9.2 | 3.141,59 | -3.141,59 |

| DOLLAR10.2 | $3,141.59 | -$3,141.59 |

| PCT9.2 | 3141.59% | -3141.59% |

| E8.1 | 3.1E+003 | -3.1E+003 |

On output, numbers in F format are expressed in standard decimal notation with the requested number of decimal places. The other formats output some variation on this style:

- Numbers in COMMA format are additionally grouped every three digits by inserting a grouping character. The grouping character is ordinarily a comma, but it can be changed to a period (see SET DECIMAL).

- DOT format is like COMMA format, but it interchanges the role of the decimal point and grouping characters. That is, the current grouping character is used as a decimal point and vice versa.

- DOLLAR format is like COMMA format, but it prefixes the number with ‘$’.

- PCT format is like F format, but adds ‘%’ after the number.

- The E format always produces output in scientific notation.

On input, the basic numeric formats accept positive and numbers in standard decimal notation or scientific notation. Leading and trailing spaces are allowed. An empty or all-spaces field, or one that contains only a single period, is treated as the system missing value.

In scientific notation, the exponent may be introduced by a sign (‘+’ or ‘-’), or by one of the letters ‘e’ or ‘d’ (in uppercase or lowercase), or by a letter followed by a sign. A single space may follow the letter or the sign or both.

On fixed-format DATA LIST (see DATA LIST FIXED) and in a few

other contexts, decimals are implied when the field does not contain a

decimal point. In F6.5 format, for example, the field 314159 is

taken as the value 3.14159 with implied decimals. Decimals are never

implied if an explicit decimal point is present or if scientific

notation is used.

E and F formats accept the basic syntax already described. The other formats allow some additional variations:

- COMMA, DOLLAR, and DOT formats ignore grouping characters within the integer part of the input field. The identity of the grouping character depends on the format.

- DOLLAR format allows a dollar sign to precede the number. In a negative number, the dollar sign may precede or follow the minus sign.

- PCT format allows a percent sign to follow the number.

All of the basic number formats have a maximum field width of 40 and accept no more than 16 decimal places, on both input and output. Some additional restrictions apply:

- As input formats, the basic numeric formats allow no more decimal places than the field width. As output formats, the field width must be greater than the number of decimal places; that is, large enough to allow for a decimal point and the number of requested decimal places. DOLLAR and PCT formats must allow an additional column for ‘$’ or ‘%’.

- The default output format for a given input format increases the field width enough to make room for optional input characters. If an input format calls for decimal places, the width is increased by 1 to make room for an implied decimal point. COMMA, DOT, and DOLLAR formats also increase the output width to make room for grouping characters. DOLLAR and PCT further increase the output field width by 1 to make room for ‘$’ or ‘%’. The increased output width is capped at 40, the maximum field width.

- The E format is exceptional. For output, E format has a minimum width of 7 plus the number of decimal places. The default output format for an E input format is an E format with at least 3 decimal places and thus a minimum width of 10.

More details of basic numeric output formatting are given below:

- Output rounds to nearest, with ties rounded away from zero. Thus, 2.5

is output as

3in F1.0 format, and -1.125 as-1.13in F5.1 format. - The system-missing value is output as a period in a field of spaces, placed in the decimal point’s position, or in the rightmost column if no decimal places are requested. A period is used even if the decimal point character is a comma.

- A number that does not fill its field is right-justified within the field.

- A number is too large for its field causes decimal places to be dropped to make room. If dropping decimals does not make enough room, scientific notation is used if the field is wide enough. If a number does not fit in the field, even in scientific notation, the overflow is indicated by filling the field with asterisks (‘*’).

- COMMA, DOT, and DOLLAR formats insert grouping characters only if space is available for all of them. Grouping characters are never inserted when all decimal places must be dropped. Thus, 1234.56 in COMMA5.2 format is output as ‘ 1235’ without a comma, even though there is room for one, because all decimal places were dropped.

- DOLLAR or PCT format drop the ‘$’ or ‘%’ only if the number would not fit at all without it. Scientific notation with ‘$’ or ‘%’ is preferred to ordinary decimal notation without it.

- Except in scientific notation, a decimal point is included only when

it is followed by a digit. If the integer part of the number being

output is 0, and a decimal point is included, then PSPP ordinarily

drops the zero before the decimal point. However, in

F,COMMA, orDOTformats, PSPP keeps the zero ifSET LEADZEROis set toON(see SET LEADZERO).In scientific notation, the number always includes a decimal point, even if it is not followed by a digit.

- A negative number includes a minus sign only in the presence of a nonzero digit: -0.01 is output as ‘-.01’ in F4.2 format but as ‘ .0’ in F4.1 format. Thus, a “negative zero” never includes a minus sign.

- In negative numbers output in DOLLAR format, the dollar sign follows the

negative sign. Thus, -9.99 in DOLLAR6.2 format is output as

-$9.99. - In scientific notation, the exponent is output as ‘E’ followed by ‘+’ or ‘-’ and exactly three digits. Numbers with magnitude less than 10**-999 or larger than 10**999 are not supported by most computers, but if they are supported then their output is considered to overflow the field and they are output as asterisks.

- On most computers, no more than 15 decimal digits are significant in output, even if more are printed. In any case, output precision cannot be any higher than input precision; few data sets are accurate to 15 digits of precision. Unavoidable loss of precision in intermediate calculations may also reduce precision of output.

- Special values such as infinities and “not a number” values are

usually converted to the system-missing value before printing. In a few

circumstances, these values are output directly. In fields of width 3

or greater, special values are output as however many characters

fit from

+Infinityor-Infinityfor infinities, fromNaNfor “not a number,” or fromUnknownfor other values (if any are supported by the system). In fields under 3 columns wide, special values are output as asterisks.

6.7.4.2 Custom Currency Formats