GNU Astronomy Utilities

This book documents version 0.24 of the GNU Astronomy Utilities (Gnuastro). Gnuastro provides various programs and libraries for astronomical data manipulation and analysis.

Copyright © 2015-2025 Free Software Foundation, Inc.

Permission is granted to copy, distribute and/or modify this document under the terms of the GNU Free Documentation License, Version 1.3 or any later version published by the Free Software Foundation; with no Invariant Sections, no Front-Cover Texts, and no Back-Cover Texts. A copy of the license is included in the section entitled “GNU Free Documentation License”.

To navigate easily in this web page, you can use the Next, Previous, Up and Contents links in the top and bottom of each page.

Next and Previous will take you to the next or previous topic in the same level, for example, from chapter 1 to chapter 2 or vice versa.

To go to the sections or subsections, click on the menu entries that are shown whenever a title has sub-components.

Short Table of Contents

- 1 Introduction

- 2 Tutorials

- 3 Installation

- 4 Common program behavior

- 5 Data containers

- 6 Data manipulation

- 7 Data analysis

- 8 Data modeling

- 9 High-level calculations

- 10 Installed scripts

- 11 Makefile extensions (for GNU Make)

- 12 Library

- 13 Developing

- Appendix A Other useful software

- Appendix B GNU Free Doc. License

- Appendix C GNU Gen. Pub. License v3

- Index: Macros, structures and functions

- Index

Table of Contents

- 1 Introduction

- 1.1 Quick start

- 1.2 Gnuastro programs list

- 1.3 Gnuastro manifesto: Science and its tools

- 1.4 Your rights

- 1.5 Logo of Gnuastro

- 1.6 Naming convention

- 1.7 Version numbering

- 1.8 New to GNU/Linux?

- 1.9 Report a bug

- 1.10 Suggest new feature

- 1.11 Announcements

- 1.12 Conventions

- 1.13 Acknowledgments and short history

- 2 Tutorials

- 2.1 General program usage tutorial

- 2.1.1 Calling Gnuastro’s programs

- 2.1.2 Accessing documentation

- 2.1.3 Setup and data download

- 2.1.4 Dataset inspection and cropping

- 2.1.5 Angular coverage on the sky

- 2.1.6 Cosmological coverage and visualizing tables

- 2.1.7 Building custom programs with the library

- 2.1.8 Option management and configuration files

- 2.1.9 Warping to a new pixel grid

- 2.1.10 NoiseChisel and Multi-Extension FITS files

- 2.1.11 NoiseChisel optimization for detection

- 2.1.12 NoiseChisel optimization for storage

- 2.1.13 Segmentation and making a catalog

- 2.1.14 Measuring the dataset limits

- 2.1.15 Working with catalogs (estimating colors)

- 2.1.16 Column statistics (color-magnitude diagram)

- 2.1.17 Aperture photometry

- 2.1.18 Matching catalogs

- 2.1.19 Reddest clumps, cutouts and parallelization

- 2.1.20 FITS images in a publication

- 2.1.21 Marking objects for publication

- 2.1.22 Writing scripts to automate the steps

- 2.1.23 Citing Gnuastro

- 2.2 Detecting large extended targets

- 2.3 Building the extended PSF

- 2.4 Sufi simulates a detection

- 2.5 Detecting lines and extracting spectra in 3D data

- 2.5.1 Viewing spectra and redshifted lines

- 2.5.2 Sky lines in optical IFUs

- 2.5.3 Continuum subtraction

- 2.5.4 3D detection with NoiseChisel

- 2.5.5 3D measurements and spectra

- 2.5.6 Extracting a single spectrum and plotting it

- 2.5.7 Cubes with logarithmic third dimension

- 2.5.8 Synthetic narrow-band images

- 2.6 Color images with full dynamic range

- 2.7 Zero point of an image

- 2.8 Pointing pattern design

- 2.9 Moiré pattern in coadding and its correction

- 2.10 Clipping outliers

- 3 Installation

- 4 Common program behavior

- 4.1 Command-line

- 4.2 Configuration files

- 4.3 Getting help

- 4.4 Multi-threaded operations

- 4.5 Numeric data types

- 4.6 Memory management

- 4.7 Tables

- 4.8 Tessellation

- 4.9 Automatic output

- 4.10 Output FITS files

- 4.11 Numeric locale

- 5 Data containers

- 6 Data manipulation

- 6.1 Crop

- 6.2 Arithmetic

- 6.2.1 Reverse polish notation

- 6.2.2 Integer benefits and pitfalls

- 6.2.3 Noise basics

- 6.2.4 Arithmetic operators

- 6.2.4.1 Basic mathematical operators

- 6.2.4.2 Trigonometric and hyperbolic operators

- 6.2.4.3 Constants

- 6.2.4.4 Coordinate conversion operators

- 6.2.4.5 Unit conversion operators

- 6.2.4.6 Statistical operators

- 6.2.4.7 Coadding operators

- 6.2.4.8 Filtering (smoothing) operators

- 6.2.4.9 Pooling operators

- 6.2.4.10 Interpolation operators

- 6.2.4.11 Dimensionality changing operators

- 6.2.4.12 Conditional operators

- 6.2.4.13 Mathematical morphology operators

- 6.2.4.14 Bitwise operators

- 6.2.4.15 Numerical type conversion operators

- 6.2.4.16 Random number generators

- 6.2.4.17 Coordinate and border operators

- 6.2.4.18 Loading external columns

- 6.2.4.19 Size and position operators

- 6.2.4.20 New operands

- 6.2.4.21 Operand storage in memory or a file

- 6.2.5 Invoking Arithmetic

- 6.3 Convolve

- 6.3.1 Spatial domain convolution

- 6.3.2 Frequency domain and Fourier operations

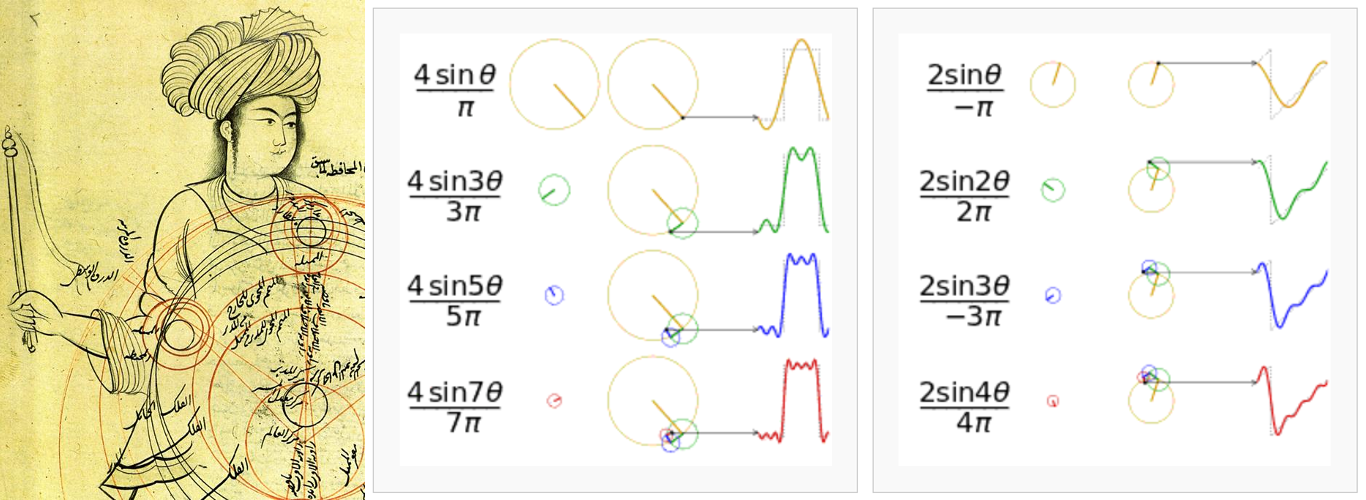

- 6.3.2.1 Fourier series historical background

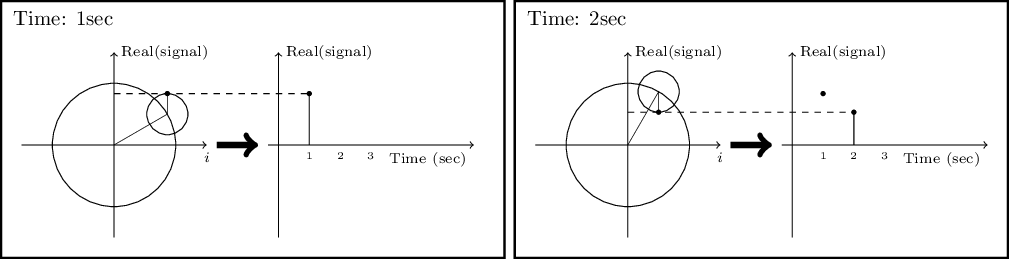

- 6.3.2.2 Circles and the complex plane

- 6.3.2.3 Fourier series

- 6.3.2.4 Fourier transform

- 6.3.2.5 Dirac delta and comb

- 6.3.2.6 Convolution theorem

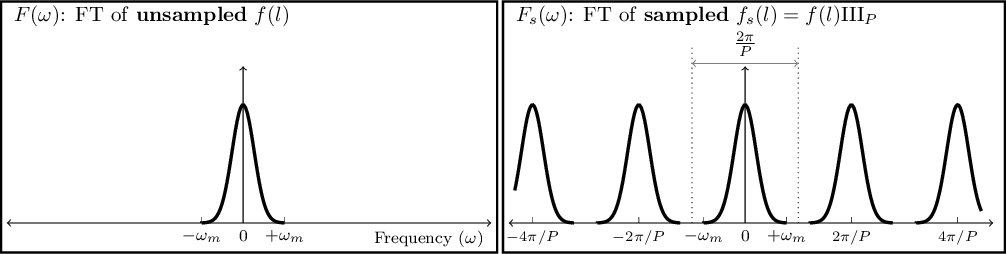

- 6.3.2.7 Sampling theorem

- 6.3.2.8 Discrete Fourier transform

- 6.3.2.9 Fourier operations in two dimensions

- 6.3.2.10 Edges in the frequency domain

- 6.3.3 Spatial vs. Frequency domain

- 6.3.4 Convolution kernel

- 6.3.5 Invoking Convolve

- 6.4 Warp

- 7 Data analysis

- 7.1 Statistics

- 7.2 NoiseChisel

- 7.3 Segment

- 7.4 MakeCatalog

- 7.4.1 Detection and catalog production

- 7.4.2 Brightness, Flux, Magnitude and Surface brightness

- 7.4.3 Standard deviation vs Standard error

- 7.4.4 MakeCatalog measurements on each label

- 7.4.4.1 Identifier columns

- 7.4.4.2 Position measurements in pixels

- 7.4.4.3 Position measurements in WCS

- 7.4.4.4 Brightness measurements

- 7.4.4.5 Surface brightness measurements

- 7.4.4.6 Upper limit measurements

- 7.4.4.7 Morphology measurements (non-parametric)

- 7.4.4.8 Morphology measurements (elliptical)

- 7.4.4.9 Measurements per slice (spectra)

- 7.4.5 Metameasurements on full input

- 7.4.6 Manual metameasurements

- 7.4.7 Adding new columns to MakeCatalog

- 7.4.8 Invoking MakeCatalog

- 7.5 Match

- 8 Data modeling

- 9 High-level calculations

- 10 Installed scripts

- 11 Makefile extensions (for GNU Make)

- 12 Library

- 12.1 Review of library fundamentals

- 12.2 BuildProgram

- 12.3 Gnuastro library

- 12.3.1 Configuration information (config.h)

- 12.3.2 Multithreaded programming (threads.h)

- 12.3.3 Library data types (type.h)

- 12.3.4 Pointers (pointer.h)

- 12.3.5 Library blank values (blank.h)

- 12.3.6 Data container (data.h)

- 12.3.7 Dimensions (dimension.h)

- 12.3.8 Linked lists (list.h)

- 12.3.8.1 List of strings

- 12.3.8.2 List of int32_t

- 12.3.8.3 List of size_t

- 12.3.8.4 List of float

- 12.3.8.5 List of double

- 12.3.8.6 List of size_t and double

- 12.3.8.7 List of two size_ts and a double

- 12.3.8.8 List of void *

- 12.3.8.9 Ordered list of size_t

- 12.3.8.10 Doubly linked ordered list of size_t

- 12.3.8.11 List of gal_data_t

- 12.3.9 Array input output

- 12.3.10 Table input output (table.h)

- 12.3.11 FITS files (fits.h)

- 12.3.12 File input output

- 12.3.13 World Coordinate System (wcs.h)

- 12.3.14 Arithmetic on datasets (arithmetic.h)

- 12.3.15 Tessellation library (tile.h)

- 12.3.16 Bounding box (box.h)

- 12.3.17 Polygons (polygon.h)

- 12.3.18 Qsort functions (qsort.h)

- 12.3.19 K-d tree (kdtree.h)

- 12.3.20 Permutations (permutation.h)

- 12.3.21 Matching (match.h)

- 12.3.22 Statistical operations (statistics.h)

- 12.3.23 Fitting functions (fit.h)

- 12.3.24 Binary datasets (binary.h)

- 12.3.25 Labeled datasets (label.h)

- 12.3.26 Convolution functions (convolve.h)

- 12.3.27 Pooling functions (pool.h)

- 12.3.28 Interpolation (interpolate.h)

- 12.3.29 Warp library (warp.h)

- 12.3.30 Color functions (color.h)

- 12.3.31 Git wrappers (git.h)

- 12.3.32 Python interface (python.h)

- 12.3.33 Unit conversion library (units.h)

- 12.3.34 Spectral lines library (speclines.h)

- 12.3.35 Cosmology library (cosmology.h)

- 12.3.36 SAO DS9 library (ds9.h)

- 12.4 Library demo programs

- 13 Developing

- 13.1 Why C programming language?

- 13.2 Program design philosophy

- 13.3 Coding conventions

- 13.4 Program source

- 13.5 Documentation

- 13.6 Building and debugging

- 13.7 Test scripts

- 13.8 Bash programmable completion

- 13.9 Developer’s checklist

- 13.10 Gnuastro project webpage

- 13.11 Developing mailing lists

- 13.12 Contributing to Gnuastro

- Appendix A Other useful software

- Appendix B GNU Free Doc. License

- Appendix C GNU Gen. Pub. License v3

- Index: Macros, structures and functions

- Index

1 Introduction

GNU Astronomy Utilities (Gnuastro) is an official GNU package consisting of separate programs and libraries for the manipulation and analysis of astronomical data. All the programs share the same basic command-line user interface for the comfort of both the users and developers. Gnuastro is written to comply fully with the GNU coding standards, so it integrates well with the GNU/Linux operating system. This also enables astronomers to expect a fully familiar experience in the source code, building, installing and command-line user interaction that they have seen in all the other GNU software that they use. The official and always up-to-date version of this book (or manual) is freely available under the GNU Free Documentation License in various formats (PDF, HTML, plain text, info, and as its Texinfo source) at http://www.gnu.org/software/gnuastro/manual/.

For users who are new to the GNU/Linux environment, unless otherwise specified most of the topics in Installation and Common program behavior are common to all GNU software, for example, installation, managing command-line options or getting help (also see New to GNU/Linux?). So if you are new to this empowering environment, we encourage you to go through these chapters carefully. They can be a starting point from which you can continue to learn more from each program’s own manual and fully benefit from and enjoy this wonderful environment. Gnuastro also comes with a large set of libraries, so you can write your own programs using Gnuastro’s building blocks, see Review of library fundamentals for an introduction.

In Gnuastro, no change to any program or library will be committed to its history before it has been fully documented here first. As discussed in Gnuastro manifesto: Science and its tools, this is a founding principle of Gnuastro.

- Quick start

- Gnuastro programs list

- Gnuastro manifesto: Science and its tools

- Your rights

- Logo of Gnuastro

- Naming convention

- Version numbering

- New to GNU/Linux?

- Report a bug

- Suggest new feature

- Announcements

- Conventions

- Acknowledgments and short history

1.1 Quick start

The latest official release tarball is always available as gnuastro-latest.tar.lz. The Lzip format is used for better compression (smaller output size, thus faster download), and robust archival features and standards. For historical reasons (those users that do not yet have Lzip), the Gzip’d tarball1 is available at the same URL (just change the .lz suffix above to .gz; however, the Lzip’d file is recommended). See Release tarball for more details on the tarball release.

Let’s assume the downloaded tarball is in the TOPGNUASTRO directory.

You can follow the commands below to download and un-compress the Gnuastro source.

You need to have the lzip program for the decompression (see Dependencies from package managers).

If your Tar implementation does not recognize Lzip (the third command fails), run the fourth command.

Note that lines starting with ## do not need to be typed (they are only a description of the following command):

    ## Go into the download directory.
    $ cd TOPGNUASTRO

    ## If you do not already have the tarball, you can download it:
    $ wget http://ftp.gnu.org/gnu/gnuastro/gnuastro-latest.tar.lz

    ## If this fails, run the next command.
    $ tar -xf gnuastro-latest.tar.lz

    ## Only when the previous command fails.
    $ lzip -cd gnuastro-latest.tar.lz | tar -xf -
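The two decompression commands above can also be folded into one guarded step. The following is only a sketch: unpack_tarball is a hypothetical helper name (it is not provided by Gnuastro), and it assumes a POSIX shell with tar and, for the fallback, lzip in the search path.

```shell
# Hypothetical helper (not part of Gnuastro): unpack a tarball with Tar
# alone, and only fall back to an explicit lzip pipe when that fails.
unpack_tarball() {
    tarball="$1"

    # First attempt: let Tar detect the compression by itself.
    if tar -xf "$tarball" 2>/dev/null; then
        return 0
    fi

    # Fallback: decompress with lzip and feed the result to Tar.
    lzip -cd "$tarball" | tar -xf -
}
```

After defining the function, it could be called as `unpack_tarball gnuastro-latest.tar.lz` inside the TOPGNUASTRO directory.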

Gnuastro has three mandatory dependencies and some optional dependencies for extra functionality, see Dependencies for the full list.

In Dependencies from package managers we have prepared the command to easily install Gnuastro’s dependencies using the package manager of some operating systems.

When the mandatory dependencies are ready, you can configure, compile, check and install Gnuastro on your system with the following commands.

See Known issues if you run into any complications. If you plan to install without root permissions (so that you will not need sudo in the last command below), see Installation directory.

    $ cd gnuastro-X.X                  # Replace X.X with version number.
    $ ./configure
    $ make -j8                         # Replace 8 with no. CPU threads.
    $ make check -j8                   # Replace 8 with no. CPU threads.
    $ sudo make install
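The 8 in the commands above stands for the number of CPU threads on your system. If you do not know that number, the sketch below shows one way to look it up. It assumes that either nproc (from GNU Coreutils) or getconf with the _NPROCESSORS_ONLN variable is available; njobs is just an illustrative variable name.

```shell
# Number of CPU threads: try nproc first, then getconf, and fall back to a
# single thread when neither is available (all three are assumptions about
# the host system, not Gnuastro features).
njobs=$(nproc 2>/dev/null || getconf _NPROCESSORS_ONLN 2>/dev/null || echo 1)

# Print the build commands with the detected number filled in.
echo "make -j$njobs"
echo "make check -j$njobs"
```

The two printed lines can then be run in place of the make commands above, from within the gnuastro-X.X directory and after ./configure has finished.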

For each program there is an ‘Invoking ProgramName’ sub-section in this book which explains how the program should be run on the command-line (for example, see Invoking Table).

In Tutorials, we have prepared some complete tutorials with common Gnuastro usage scenarios in astronomical research. They even contain links to download the necessary data, and thoroughly describe every step of the process (the science, statistics and optimal usage of the command-line). We therefore recommend reading (and running the commands in) the tutorials before starting to use Gnuastro.

1.2 Gnuastro programs list

One of the most common ways to operate Gnuastro is through its command-line programs. For some tutorials on several real-world usage scenarios, see Tutorials. The list here is just provided as a general summary for those who are new to Gnuastro.

GNU Astronomy Utilities 0.24 contains the following programs. They are sorted in alphabetical order and a short description is provided for each program. The description starts with the executable name in this font, followed by a pointer to the respective section in parentheses. Throughout this book, they are ordered based on their context; please see the top-level contents for contextual ordering (based on what they do).

- Arithmetic

(astarithmetic, see Arithmetic) For arithmetic operations on any (theoretically unlimited) number of datasets (images). It has a large and growing set of arithmetic, mathematical, and even statistical operators (for example, +, -, *, /, sqrt, log, min, average, median, see Arithmetic operators).

- BuildProgram

(astbuildprog, see BuildProgram) Compile, link and run custom C programs that depend on the Gnuastro library (see Gnuastro library). This program will automatically link with the libraries that Gnuastro depends on, so there is no need to explicitly mention them every time you are compiling a Gnuastro library dependent program.

- ConvertType

(astconvertt, see ConvertType) Convert astronomical data files (FITS or IMH) to and from several other standard image and data formats, for example, TXT, JPEG, EPS or PDF. Optionally, it is also possible to add vector graphics markers over the output image (for example, circles from catalogs containing RA or Dec).

- Convolve

(astconvolve, see Convolve) Convolve (blur or smooth) data with a given kernel in spatial and frequency domain on multiple threads. Convolve can also do deconvolution to find the appropriate kernel to PSF-match two images.

- CosmicCalculator

(astcosmiccal, see CosmicCalculator) Do cosmological calculations, for example, the luminosity distance, distance modulus, comoving volume and many more.

- Crop

(astcrop, see Crop) Crop region(s) from one or many image(s) and stitch several images if necessary. Input coordinates can be in pixel coordinates or world coordinates.

- Fits

(astfits, see Fits) View and manipulate FITS file extensions and header keywords.

- MakeCatalog

(astmkcatalog, see MakeCatalog) Make a catalog from a labeled image (output of NoiseChisel). The catalogs are highly customizable and adding new calculations/columns is very straightforward, see Akhlaghi 2019.

- MakeProfiles

(astmkprof, see MakeProfiles) Make mock 2D profiles in an image. The central regions of radial profiles are made with a configurable 2D Monte Carlo integration. It can also build the profiles on an over-sampled image.

- Match

(astmatch, see Match) Given two input catalogs, find the rows that match with each other within a given aperture (may be an ellipse).

- NoiseChisel

(astnoisechisel, see NoiseChisel) Detect signal in noise. It uses a technique to detect very faint and diffuse, irregularly shaped signal in noise (galaxies in the sky), using thresholds that are below the Sky value, see Akhlaghi and Ichikawa 2015 and Akhlaghi 2019.

- Query

(astquery, see Query) High-level interface to query pre-defined remote, or external databases, and directly download the required sub-tables on the command-line.

- Segment

(astsegment, see Segment) Segment detected regions based on the structure of signal and the input dataset’s noise properties.

- Statistics

(aststatistics, see Statistics) Statistical calculations on the input dataset (column in a table, image or data cube). This includes many operations such as generating histograms, sigma clipping, and least squares fitting.

- Table

(asttable, see Table) Convert FITS binary and ASCII tables into other such tables, print them on the command-line, save them in a plain text file, do arithmetic on the columns or get the FITS table information. For a full list of operations, see Operation precedence in Table.

- Warp

(astwarp, see Warp) Warp image to new pixel grid. By default it will align the pixel and WCS coordinates, removing any non-linear WCS distortions. Any linear warp (projective transformation or Homography) can also be applied to the input images by explicitly calling the respective operation.

The programs listed above are designed to be highly modular and generic.

Hence, they are naturally for lower-level operations.

In Gnuastro, higher-level operations (combining multiple programs, or running a program in a special way), are done with installed Bash scripts (all prefixed with astscript-).

They can be run just like a program and behave very similarly (with minor differences, see Installed scripts).

- astscript-ds9-region

(see SAO DS9 region files from table) Given a table (either as a file or from standard input), create an SAO DS9 region file from the requested positional columns (WCS or image coordinates).

- astscript-fits-view

(see Viewing FITS file contents with DS9 or TOPCAT) Given any number of FITS files, this script will either open SAO DS9 (for images or cubes) or TOPCAT (for tables) to view them in a graphical user interface (GUI).

- astscript-pointing-simulate

(see Pointing pattern simulation) Given a table of pointings on the sky and a reference image that contains your camera’s distortions and properties, generate a coadded exposure map. This is very useful in testing the coverage of dither patterns when designing your observing strategy and it is highly customizable. See Akhlaghi 2023, or the dedicated tutorial in Pointing pattern design.

- astscript-radial-profile

(see Generate radial profile) Calculate the 1D radial profile or 2D polar plot of an object within an image. The object can be at any location in the image, using various measures (median, sigma-clipped mean, etc.), and the radial distance can also be measured on any general ellipse. See Infante-Sainz et al. 2024 for a general description and Eskandarlou & Akhlaghi 2024 for the radial profile component.

- astscript-color-faint-gray

(see Color images with gray faint regions) Given three images for the Red-Green-Blue (RGB) channels, this script will use the bright pixels for color and will show the faint/diffuse regions in grayscale. This greatly helps in visualizing the full dynamic range of astronomical data. See Infante-Sainz et al. 2024 or the dedicated tutorial in Color images with full dynamic range.

- astscript-sort-by-night

(see Sort FITS files by night) Given a list of FITS files, and a HDU and keyword name (for a date), this script separates the files by night (one night possibly spanning two calendar days).

- astscript-zeropoint

(see Zero point estimation) Estimate the zero point (to calibrate pixel values) of an input image using a reference image or a reference catalog. This is necessary to produce measurements with physical units from new images. See Eskandarlou et al. 2023, or the dedicated tutorial in Zero point of an image.

- astscript-psf-*

The following scripts are used for extended PSF estimation and subtraction, as described in the tutorial Building the extended PSF:

- astscript-psf-select-stars

(see Invoking astscript-psf-select-stars) Find all the stars within an image that are suitable for constructing an extended PSF. If the image has WCS, this script can automatically query Gaia to find the good stars.

- astscript-psf-stamp

(see Invoking astscript-psf-stamp) Build a crop (stamp) of a certain width around a star at a certain coordinate in a larger image. This script will do sub-pixel re-positioning to make sure the star is centered and can optionally mask all other background sources.

- astscript-psf-scale-factor

(see Invoking astscript-psf-scale-factor) Given a PSF model, and the central coordinates of a star in an image, find the scale factor that has to be multiplied by the PSF to scale it to that star.

- astscript-psf-unite

(see Invoking astscript-psf-unite) Unite the various components of a PSF into one. Because of saturation and non-linearity, to get a good estimate of the extended PSF, it is necessary to construct various parts from different magnitude ranges.

- astscript-psf-subtract

(see Invoking astscript-psf-subtract) Given the model of a PSF and the central coordinates of a star in the image, do sub-pixel re-positioning of the PSF, scale it to the star and subtract it from the image.

1.3 Gnuastro manifesto: Science and its tools

History of science indicates that there are always inevitably unseen faults, hidden assumptions, simplifications and approximations in all our theoretical models, data acquisition and analysis techniques. It is precisely these that will ultimately allow future generations to advance the existing experimental and theoretical knowledge through their new solutions and corrections.

In the past, scientists would gather data and process them individually to achieve an analysis thus having a much more intricate knowledge of the data and analysis. The theoretical models also required little (if any) simulations to compare with the data. Today both methods are becoming increasingly more dependent on pre-written software. Scientists are dissociating themselves from the intricacies of reducing raw observational data in experimentation or from bringing the theoretical models to life in simulations. These ‘intricacies’ are precisely those unseen faults, hidden assumptions, simplifications and approximations that define scientific progress.

Unfortunately, most persons who have recourse to a computer for statistical analysis of data are not much interested either in computer programming or in statistical method, being primarily concerned with their own proper business. Hence the common use of library programs and various statistical packages. ... It’s time that was changed.

Anscombe’s quartet demonstrates how four data sets with widely different shapes (when plotted) give nearly identical output from standard regression techniques. Anscombe uses this (now famous) quartet, which was introduced in the paper quoted above, to argue that “Good statistical analysis is not a purely routine matter, and generally calls for more than one pass through the computer”. Echoing Anscombe’s concern after 44 years, some of the highly recognized statisticians of our time (Leek, McShane, Gelman, Colquhoun, Nuijten and Goodman), wrote in Nature that:

We need to appreciate that data analysis is not purely computational and algorithmic – it is a human behavior....Researchers who hunt hard enough will turn up a result that fits statistical criteria – but their discovery will probably be a false positive.

Users of statistical (scientific) methods (software) are therefore not passive (objective) agents in their results. It is necessary to actually understand the method, not just use it as a black box. The subjective experience gained by frequently using a method/software is not sufficient to claim an understanding of how the tool/method works and how relevant it is to the data and analysis. This kind of subjective experience is prone to serious misunderstandings about the data, what the software/statistical-method really does (especially as it gets more complicated), and thus the scientific interpretation of the result. This attitude is further encouraged through non-free software2, poorly written (or non-existent) scientific software manuals, and non-reproducible papers3. This approach to scientific software and methods only helps in producing dogmas and an “obscurantist faith in the expert’s special skill, and in his personal knowledge and authority”4.
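The point about Anscombe’s quartet can be checked numerically in a few lines. The sketch below is not part of Gnuastro: it assumes a POSIX shell with awk, uses the quartet’s published values (Anscombe 1973), and fits an ordinary least-squares line to each of the four sets. The variable name fits is only illustrative.

```shell
# Least-squares line for each of Anscombe's four data sets.
# Input columns: x1 y1 x2 y2 x3 y3 x4 y4 (one row per data point).
fits=$(awk '
{
    # Accumulate the sums needed for the fit of each (x,y) column pair.
    for (k = 0; k < 4; k++) {
        x = $(2*k+1); y = $(2*k+2)
        sx[k] += x; sy[k] += y; sxx[k] += x*x; sxy[k] += x*y
    }
    n++
}
END {
    # Closed-form simple linear regression: slope, then intercept.
    for (k = 0; k < 4; k++) {
        slope = (n*sxy[k] - sx[k]*sy[k]) / (n*sxx[k] - sx[k]*sx[k])
        icept = (sy[k] - slope*sx[k]) / n
        printf "set %d: y = %.2f + %.2f x\n", k+1, icept, slope
    }
}' <<'EOF'
10 8.04 10 9.14 10 7.46 8 6.58
8 6.95 8 8.14 8 6.77 8 5.76
13 7.58 13 8.74 13 12.74 8 7.71
9 8.81 9 8.77 9 7.11 8 8.84
11 8.33 11 9.26 11 7.81 8 8.47
14 9.96 14 8.10 14 8.84 8 7.04
6 7.24 6 6.13 6 6.08 8 5.25
4 4.26 4 3.10 4 5.39 19 12.50
12 10.84 12 9.13 12 8.15 8 5.56
7 4.82 7 7.26 7 6.42 8 7.91
5 5.68 5 4.74 5 5.73 8 6.89
EOF
)
echo "$fits"
```

To two decimals, all four sets give the same fitted line (intercept 3.00, slope 0.50), even though a plot shows four very different shapes. The regression output alone, used as a black box, cannot tell them apart.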

Program or be programmed. Choose the former, and you gain access to the control panel of civilization. Choose the latter, and it could be the last real choice you get to make.

It is obviously impractical for any one human being to gain the intricate knowledge explained above for every step of an analysis. On the other hand, scientific data can be large and numerous, for example, images produced by telescopes in astronomy. This requires efficient algorithms. To make things worse, natural scientists have generally not been trained in the advanced software techniques, paradigms and architecture that are taught in computer science or engineering courses and thus used in most software. The GNU Astronomy Utilities are an effort to tackle this issue.

Gnuastro is not just software: this book is as important to the idea behind Gnuastro as the source code (software). This book has tried to learn from the success of the “Numerical Recipes” book in educating those who are not software engineers and computer scientists but still heavy users of computational algorithms, like astronomers. There are two major differences.

The first difference is that Gnuastro’s code and the background information are segregated: the code is moved within the actual Gnuastro software source code and the underlying explanations are given here in this book. In the source code, every non-trivial step is heavily commented and correlated with this book, it follows the same logic of this book, and all the programs follow a similar internal data, function and file structure, see Program source. Complementing the code, this book focuses on thoroughly explaining the concepts behind those codes (history, mathematics, science, software and usage advice when necessary) along with detailed instructions on how to run the programs. At the expense of frustrating “professionals” or “experts”, this book and the comments in the code also intentionally avoid jargon and abbreviations. The source code and this book are thus intimately linked, and when considered as a single entity can be thought of as a real (an actual software accompanying the algorithms) “Numerical Recipes” for astronomy.

The second major, and arguably more important, difference is that “Numerical Recipes” does not allow you to distribute any code that you have learned from it. In other words, it does not allow you to release your software’s source code if you have used their codes, you can only publicly release binaries (a black box) to the community. Therefore, while it empowers the privileged individual who has access to it, it exacerbates social ignorance. Exactly at the opposite end of the spectrum, Gnuastro’s source code is released under the GNU general public license (GPL) and this book is released under the GNU free documentation license. You are therefore free to distribute any software you create using parts of Gnuastro’s source code or text, or figures from this book, see Your rights.

With these principles in mind, Gnuastro’s developers aim to impose the minimum requirements on you (in computer science, engineering and even the mathematics behind the tools) to understand and modify any step of Gnuastro if you feel the need to do so, see Why C programming language? and Program design philosophy.

Without prior familiarity and experience with optics, it is hard to imagine how Galileo could have come up with the idea of modifying the Dutch military telescope optics to use in astronomy. Astronomical objects could not be seen with the Dutch military design of the telescope. In other words, it is unlikely that Galileo could have asked a random optician to make modifications (not understood by Galileo) to the Dutch design, to do something no astronomer of the time took seriously. In the paradigm of the day, what could be the purpose of enlarging geometric spheres (planets) or points (stars)? In that paradigm only the position and movement of the heavenly bodies was important, and that had already been accurately studied (recently by Tycho Brahe).

At the beginning of “The Sidereal Messenger” (published in 1610), Galileo cautions his readers on this issue and, before describing his results/observations, instructs us on how to build a suitable instrument. Without a detailed description of how he made his tools and did his observations, no reasonable person would believe his results. Before he actually saw the moons of Jupiter, the mountains on the Moon or the crescent of Venus, Galileo was “evasive”5 to Kepler. Science is defined by its tools/methods, not its raw results6.

The same is true today: science cannot progress with a black box, or poorly released code. The source code of a research project is the new (abstractified) communication language in science, understandable by humans and computers. Source code (in any programming language) is a language/notation designed to express all the details that would be too tedious/long/frustrating to report in spoken languages like English, similar to mathematical notation.

An article about computational science [almost all sciences today] ... is not the scholarship itself, it is merely advertising of the scholarship. The Actual Scholarship is the complete software development environment and the complete set of instructions which generated the figures.

Today, the quality of the source code that goes into a scientific result (and the distribution of that code) is as critical to scientific vitality and integrity as the quality of the written language/English used in publishing/distributing its paper. A scientific paper will not even be reviewed by any respectable journal if it is written in poor language/English. A similar level of quality assessment is thus increasingly becoming necessary regarding the codes/methods used to derive the results of a scientific paper. For more on this, please see Akhlaghi et al. 2021.

Bjarne Stroustrup (creator of the C++ language) says: “Without understanding software, you are reduced to believing in magic”. Ken Thompson (the designer of the Unix operating system) says: “I abhor a system designed for the ‘user’ if that word is a coded pejorative meaning ‘stupid and unsophisticated’.” Certainly no scientist (user of scientific software) would want to be considered a believer in magic, or stupid and unsophisticated.

This can happen when scientists get too distant from the raw data and methods, and are mainly discussing results. In other words, when they feel they have tamed Nature into their own high-level (abstract) models (creations), and are mainly concerned with scaling up, or industrializing those results. Roughly five years before special relativity, and about two decades before quantum mechanics fundamentally changed Physics, Lord Kelvin is quoted as saying:

There is nothing new to be discovered in physics now. All that remains is more and more precise measurement.

A few years earlier, Albert A. Michelson made the following statement:

The more important fundamental laws and facts of physical science have all been discovered, and these are now so firmly established that the possibility of their ever being supplanted in consequence of new discoveries is exceedingly remote.... Our future discoveries must be looked for in the sixth place of decimals.

If scientists are considered to be more than mere puzzle solvers (simply adding to the decimals of existing values or observing a feature in 10, 100, or 100000 more galaxies or stars, as Kelvin and Michelson clearly believed), they cannot just passively sit back and uncritically repeat the previous (observational or theoretical) methods/tools on new data. Today there is a wealth of raw telescope images ready (mostly for free) at the fingertips of anyone who is interested and has a fast enough internet connection to download them. The only thing lacking is new ways to analyze this data and dig out the treasure that lies in it, hidden from existing methods and techniques.

New data that we insist on analyzing in terms of old ideas (that is, old models which are not questioned) cannot lead us out of the old ideas. However many data we record and analyze, we may just keep repeating the same old errors, missing the same crucially important things that the experiment was competent to find.

1.4 Your rights ¶

The paragraphs below, in this section, belong to the GNU Texinfo manual and are not written by us! The name “Texinfo” is just changed to “GNU Astronomy Utilities” or “Gnuastro” because they are released under the same licenses and it is beautifully written to inform you of your rights.

GNU Astronomy Utilities is “free software”; this means that everyone is free to use it and free to redistribute it on certain conditions. Gnuastro is not in the public domain; it is copyrighted and there are restrictions on its distribution, but these restrictions are designed to permit everything that a good cooperating citizen would want to do. What is not allowed is to try to prevent others from further sharing any version of Gnuastro that they might get from you.

Specifically, we want to make sure that you have the right to give away copies of the programs that relate to Gnuastro, that you receive the source code or else can get it if you want it, that you can change these programs or use pieces of them in new free programs, and that you know you can do these things.

To make sure that everyone has such rights, we have to forbid you to deprive anyone else of these rights. For example, if you distribute copies of the Gnuastro related programs, you must give the recipients all the rights that you have. You must make sure that they, too, receive or can get the source code. And you must tell them their rights.

Also, for our own protection, we must make certain that everyone finds out that there is no warranty for the programs that relate to Gnuastro. If these programs are modified by someone else and passed on, we want their recipients to know that what they have is not what we distributed, so that any problems introduced by others will not reflect on our reputation.

The full text of the licenses for the Gnuastro software and book can be found in GNU Gen. Pub. License v3 and GNU Free Doc. License, respectively.

1.5 Logo of Gnuastro ¶

Gnuastro’s logo is an abstract image of a barred spiral galaxy. The galaxy is vertically cut in half: on the left side, the beauty of a contiguous galaxy image is visible. But on the right, the image gets pixelated, and we only see the parts that are within the pixels. The pixels that are nearer to the center of the galaxy (which is brighter) are also larger. But as we follow the spiral arms (and get more distant from the center), the pixels get smaller (signifying less signal).

This sharp distinction between the contiguous and pixelated view of the galaxy signifies the main struggle in science: in the “real” world, objects are not pixelated or discrete and have no noise. However, when we observe nature, we are confined and constrained by the resolution of our data collection (CCD imager in this case).

On the other hand, we read English text from the left and progress towards the right. This defines the positioning of the “real” and observed halves of the galaxy: the noise-free and contiguous half (on the left) passes through our observing tools and becomes the pixelated and noisy half (on the right). It is the job of scientific software like Gnuastro to help interpret the underlying mechanisms of the “real” universe from the pixelated and noisy data.

Gnuastro’s logo was designed by Marjan Akbari. The concept behind it was created after several design iterations with Mohammad Akhlaghi.

1.6 Naming convention ¶

Gnuastro is a package of independent programs and a collection of libraries; here we are mainly concerned with the programs. Each program has an official name which consists of one or two words, describing what it does. The latter are printed with no space, for example, NoiseChisel or Crop. On the command-line, you can run them with their executable names, which start with ast and might be an abbreviation of the official name, for example, astnoisechisel or astcrop, see Executable names.

We will use “ProgramName” for a generic official program name and astprogname for a generic executable name. In this book, the programs are classified based on what they do and thoroughly explained. An alphabetical list of the programs that are installed on your system with this installation is given in Gnuastro programs list. That list also contains the executable names and version numbers along with a one line description.

1.7 Version numbering ¶

Gnuastro can have two formats of version numbers, for official and unofficial releases.

Official Gnuastro releases are announced on the info-gnuastro mailing list, they have a version control tag in Gnuastro’s development history, and their version numbers are formatted like “A.B”.

A is a major version number, marking a significant planned achievement (for example, see GNU Astronomy Utilities 1.0), while B is a minor version number, see below for more on the distinction.

Note that the numbers are not decimals, so version 2.34 is much more recent than version 2.5, which is not equal to 2.50.

Gnuastro also allows a unique version number for unofficial releases. Unofficial releases can mark any point in Gnuastro’s development history. This is done to allow astronomers to easily use any point in the version controlled history for their data-analysis and research publication. See Version controlled source for a complete introduction. That section is not just for developers and is intended to be straightforward and easy to read, so please have a look if you are interested in the cutting-edge. This unofficial version number is a meaningful and easy to read string of characters, unique to that particular point of history. With this feature, users can easily stay up to date with the most recent bug fixes and additions that are committed between official releases.

The unofficial version number is formatted like: A.B.C-D.

A and B are the most recent official version number.

C is the number of commits that have been made after version A.B.

D is the first 4 or 5 characters of the commit hash number.

Therefore, the unofficial version number ‘3.92.8-29c8’, corresponds to the 8th commit after the official version 3.92 and its commit hash begins with 29c8.

The unofficial version number is sort-able (unlike the raw hash) and as shown above is descriptive of the state of the unofficial release.
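The format described above can be illustrated with a short shell sketch. It is only an illustration under stated assumptions: `parse_version` is a hypothetical helper (not part of Gnuastro), the hash `a1b2` is made up, and `sort -V` is assumed to be the version sort of GNU Coreutils.

```shell
#!/bin/sh
# Split an unofficial version string (A.B.C-D) into its components.
# 'parse_version' is a hypothetical helper for this example only; it
# does not handle official "A.B" version strings.
parse_version() {
    v=$1
    hash=${v##*-}          # D: text after the last '-'
    nums=${v%-*}           # A.B.C
    commits=${nums##*.}    # C: text after the last '.'
    official=${nums%.*}    # A.B
    echo "official=$official commits=$commits hash=$hash"
}

parse_version 3.92.8-29c8

# Version components are integers, not decimals: 2.34 is newer than 2.5.
# 'sort -V' (GNU Coreutils) compares each numeric field separately.
newest_official=$(printf '%s\n' 2.34 2.5 | sort -V | tail -n 1)
echo "newest official: $newest_official"

# The same ordering works for unofficial versions, because the commit
# count comes before the hash ('a1b2' is a made-up hash).
newest_unofficial=$(printf '%s\n' 3.92.12-a1b2 3.92.8-29c8 \
                        | sort -V | tail -n 1)
echo "newest unofficial: $newest_unofficial"
```

Sorting on the raw hashes alone would not give a meaningful order; the commit count in the third field is what makes the string sortable.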

Of course an official release is preferred for publication (since its tarballs are easily available and it has gone through more tests, making it more stable), so if an official release is announced prior to your publication’s final review, please consider updating to the official release.

The major version number is set by a major goal which is defined by the developers and user community beforehand, for example, see GNU Astronomy Utilities 1.0. The incremental work done in minor releases is commonly a series of small steps in achieving the major goal. Therefore, there is no limit on the number of minor releases and the difference between the (hypothetical) versions 2.927 and 3.0 can be a small (negligible to the user) improvement that finalizes the defined goals.

1.7.1 GNU Astronomy Utilities 1.0 ¶

Like all software, version 1.0 is a unique milestone: a point where the developers feel it is complete to a minimal level. In Gnuastro, the goal to achieve for version 1.0 is to have all the necessary tools for optical imaging data reduction: starting from raw images of individual exposures to the final deep image ready for high-level science.

Various software packages already existed and were commonly used when Gnuastro was first released in 2016. However, the existing software is mostly written without following any robust, or even common, coding and usage standards, and without up-to-date and well-maintained documentation. This makes it very hard to reduce astronomical data without learning each package’s peculiarities through trial and error.

1.8 New to GNU/Linux? ¶

Some astronomers initially install and use a GNU/Linux operating system because their necessary tools can only be installed in this environment. However, the transition is not necessarily easy. To encourage you in investing the patience and time to make this transition, and actually enjoy it, we will first start with a basic introduction to GNU/Linux operating systems. Afterwards, in Command-line interface we will discuss the wonderful benefits of the command-line interface, how it beautifully complements the graphic user interface, and why it is worth the (apparently steep) learning curve. Finally a complete chapter (Tutorials) is devoted to real world scenarios of using Gnuastro (on the command-line). Therefore if you do not yet feel comfortable with the command-line we strongly recommend going through that chapter after finishing this section.

You might have already noticed that we are not using the name “Linux”, but “GNU/Linux”. Please take the time to have a look at the following essays and FAQs for a complete understanding of this very important distinction.

- https://gnu.org/philosophy

- https://www.gnu.org/gnu/the-gnu-project.html

- https://www.gnu.org/gnu/gnu-users-never-heard-of-gnu.html

- https://www.gnu.org/gnu/linux-and-gnu.html

- https://www.gnu.org/gnu/why-gnu-linux.html

- https://www.gnu.org/gnu/gnu-linux-faq.html

- Recorded talk: https://peertube.stream/w/ddeSSm33R1eFWKJVqpcthN (first 20 min is about the history of Unix-like operating systems).

In short, the Linux kernel is built using the GNU C library (glibc) and GNU compiler collection (gcc). The Linux kernel software alone is just a means for other software to access the hardware resources; it is useless alone! A normal astronomer (or scientist) will never interact with the kernel directly! For example, the command-line environment that you interact with is usually GNU Bash. It is GNU Bash that then talks to the kernel.

To better clarify, let’s use this analogy inspired by one of the links above: saying that you are “running Linux” is like saying you are “driving your engine”. The car’s engine is the main source of power in the car, no one doubts that. But you do not “drive” the engine, you drive the “car”. The engine alone is useless for transportation without the radiator, battery, transmission, wheels, chassis, seats, wind-shield, etc.

To have an operating system, you need lower-level tools (to build the kernel), and higher-level software packages (to use it). For the Linux kernel, both the lower-level and higher-level tools are GNU. In other words, “the whole system is basically GNU with Linux loaded”.

You can replace the Linux kernel and still have the GNU shell and higher-level utilities. For example, using the “Windows Subsystem for Linux”, you can use almost all GNU tools without the original Linux kernel, but using the host Windows operating system, as in https://ubuntu.com/wsl. Alternatively, you can build a fully functional GNU-based working environment on a macOS or BSD-based operating system (using the host’s kernel and C compiler), for example, through projects like Maneage, see Akhlaghi et al. 2021, in particular its Appendix C with all the GNU software tools, which is also exactly reproducible on macOS.

Therefore to acknowledge GNU’s instrumental role in the creation and usage of the Linux kernel and the operating systems that use it, we should call these operating systems “GNU/Linux”.

1.8.1 Command-line interface ¶

One aspect of Gnuastro that might be a little troubling to new GNU/Linux users is that (at least for the time being) it only has a command-line user interface (CLI). This might be contrary to the mostly graphical user interface (GUI) experience with proprietary operating systems. Since the various actions available are not always on the screen, the command-line interface can be complicated, intimidating, and frustrating for a first-time user. This is understandable and also experienced by anyone who started using the computer (from childhood) in a graphical user interface (this includes most of Gnuastro’s authors). Here we hope to convince you of the unique benefits of this interface which can greatly enhance your productivity while complementing your GUI experience.

Through GNOME 3, most GNU/Linux based operating systems now have an advanced and useful GUI. Since the GUI was created long after the command-line, some wrongly consider the command-line to be obsolete. Both interfaces are useful for different tasks. For example, you cannot view an image, video, PDF document or web page on the command-line. On the other hand you cannot reproduce your results easily in the GUI. Therefore they should not be regarded as rivals but as complementary user interfaces. Here we will outline how the CLI can be useful in scientific programs.

You can think of the GUI as a veneer over the CLI to facilitate a small subset of all the possible CLI operations. Each click you do on the GUI can be thought of as internally running a different CLI command. So asymptotically (if a good designer can design a GUI which is able to show you all the possibilities to click on) the GUI is only as powerful as the command-line. In practice, such graphical designers are very hard to find for every program, so the GUI operations are always a subset of the internal CLI commands. For programs that are only made for the GUI, this results in not including lots of potentially useful operations. It also results in ‘interface design’ being a crucially important part of any GUI program. Scientists do not usually have enough resources to hire a graphical designer; also, the complexity of GUI code is far greater than that of CLI code, which is harmful for scientific software, see Gnuastro manifesto: Science and its tools.

For programs that have a GUI, one action on the GUI (moving and clicking a mouse, or tapping a touchscreen) might be more efficient and easier than its CLI counterpart (typing the program name and your desired configuration). However, if you have to repeat that same action more than once, the GUI will soon become frustrating and prone to errors. Unless the designers of a particular program decided to design such a system for a particular GUI action, there is no general way to run any possible series of actions automatically on the GUI.

On the command-line, you can run any series of actions which can come from various CLI capable programs you have decided yourself in any possible permutation with one command. This allows for much more creativity and exact reproducibility that is not possible for a GUI user. For technical and scientific operations, where the same operation (using various programs) has to be done on a large set of data files, this is crucially important. It also allows exact reproducibility, which is a foundational principle for scientific results. The most common CLI (which is also known as a shell) in GNU/Linux is GNU Bash; we strongly encourage you to put aside several hours and go through this beautifully explained web page: https://flossmanuals.net/command-line/. You do not need to read or even fully understand the whole thing; a general knowledge of the first few chapters is enough to get you going.
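As a rough illustration of repeating the same operation over many files, the sketch below applies a two-program pipeline to every file in a scratch directory. The file names and contents are made up, and standard tools (`sort`, `tail`) stand in for any CLI-capable program; nothing here is specific to Gnuastro.

```shell
#!/bin/sh
# Work in a scratch directory so the example does not touch real data.
workdir=$(mktemp -d)

# Three small stand-in "data files" (hypothetical names and contents).
printf '3\n1\n2\n' > "$workdir/a.txt"
printf '9\n7\n'    > "$workdir/b.txt"
printf '5\n'       > "$workdir/c.txt"

# Apply the same pipeline to every file: sort numerically, then keep
# the largest value. The summary has a different suffix so that it is
# not matched by the '*.txt' pattern of the loop.
for f in "$workdir"/*.txt; do
    max=$(sort -n "$f" | tail -n 1)
    echo "$(basename "$f") $max"
done > "$workdir/summary.log"

cat "$workdir/summary.log"
```

Adding a hundred more input files would not change a single line of this script, and re-running it reproduces the summary exactly.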

Since the operations in the GUI are limited and they are visible, reading a manual is not that important in the GUI (most programs do not even have any!). However, to give you the creative power explained above, with a CLI program, it is best if you first read the manual of any program you are using. You do not need to memorize any details, only an understanding of the generalities is needed. Once you start working, there are easier ways to remember a particular option or operation detail, see Getting help.

To experience the command-line in its full glory and not in the GUI terminal emulator, press the following keys together: CTRL+ALT+F4 to access the virtual console. To return to your GUI, press the same keys above replacing F4 with F7 (or F1, or F2, depending on your GNU/Linux distribution). In the virtual console, the GUI, with all its distracting colors and information, is gone, enabling you to focus entirely on your actual work.

For operations that use a lot of your system’s resources (processing a large number of large astronomical images for example), the virtual console is the place to run them. This is because the GUI is not competing with your research work for your system’s RAM and CPU. Since the virtual consoles are completely independent, you can even log out of your GUI environment to give even more of your hardware resources to the programs you are running and thus reduce the operating time.

Since it uses far less system resources, the CLI is also convenient for remote access to your computer. Using secure shell (SSH) you can log in securely to your system (similar to the virtual console) from anywhere even if the connection speeds are low. There are apps for smart phones and tablets which allow you to do this.

1.9 Report a bug ¶

According to Wikipedia “a software bug is an error, flaw, failure, or fault in a computer program or system that causes it to produce an incorrect or unexpected result, or to behave in unintended ways”. So when you see that a program is crashing, not reading your input correctly, giving the wrong results, or not writing your output correctly, you have found a bug. In such cases, it is best if you report the bug to the developers. The programs will also inform you if known impossible situations occur (which are caused by something unexpected) and will ask the users to report the bug issue.

Prior to actually filing a bug report, it is best to search previous reports. The issue might have already been found and even solved. The best place to check if your bug has already been discussed is the bugs tracker on Gnuastro project webpage at https://savannah.gnu.org/bugs/?group=gnuastro. In the top search fields (under “Display Criteria”) set the “Open/Closed” drop-down menu to “Any” and choose the respective program or general category of the bug in “Category” and click the “Apply” button. The results colored green have already been solved and the status of those colored in red is shown in the table.

Recently corrected bugs are probably not yet publicly released because they are scheduled for the next Gnuastro stable release. If the bug is solved but not yet released and it is an urgent issue for you, you can get the version controlled source and compile that, see Version controlled source.

To solve the issue as readily as possible, please follow the guidelines below in your bug report. The How to Report Bugs Effectively and How To Ask Questions The Smart Way essays also provide some good generic advice for all software (do not contact their authors for Gnuastro’s problems). Mastering the art of giving good bug reports (like asking good questions) can greatly enhance your experience with any free and open source software. So investing the time to read through these essays will greatly reduce your frustration after you see something does not work the way you feel it is supposed to for a large range of software, not just Gnuastro.

- Be descriptive

Please provide as many details as possible and be very descriptive. Explain what you expected and what the output was: it might be that your expectation was wrong. Also please clearly state which sections of the Gnuastro book (this book), or other references you have studied to understand the problem. This can be useful in correcting the book (adding links to likely places where users will check). But more importantly, it will be encouraging for the developers, since you are showing how serious you are about the problem and that you have actually put some thought into it. “To be able to ask a question clearly is two-thirds of the way to getting it answered.” – John Ruskin (1819-1900).

- Individual and independent bug reports

If you have found multiple bugs, please send them as separate (and independent) bugs (as much as possible). This will significantly help us in managing and resolving them sooner.

- Reproducible bug reports

If we cannot exactly reproduce your bug, then it is very hard to resolve it. So please send us a Minimal working example along with the description. For example, in running a program, please send us the full command-line text and the output with the -P option, see Operating mode options. If it is caused only for a certain input, also send us that input file. In case the input FITS is large, please use Crop to only crop the problematic section and make it as small as possible so it can easily be uploaded and downloaded and not waste the archive’s storage, see Crop.
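One possible way to capture the full command-line text together with its output is sketched below. `record_run` is a hypothetical helper written for this example (it is not part of Gnuastro), and `echo` is only a stand-in for the command that misbehaves.

```shell
#!/bin/sh
# 'record_run' is a hypothetical helper: it saves the exact command
# line, everything the command prints (standard output and standard
# error) and its exit status into a single file.
record_run() {
    log=$1; shift
    {
        echo "## Command: $*"
        "$@" 2>&1
        echo "## Exit status: $?"
    } > "$log"
}

# Stand-in command. For a real report, replace it with the failing
# Gnuastro command (adding the -P option mentioned above).
report=$(mktemp)
record_run "$report" echo "hello from the failing command"
cat "$report"
```

The resulting file can be pasted into the bug report, so the developers see the same command and output that you saw.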

There are generally two ways to inform us of bugs:

- Send a mail to bug-gnuastro@gnu.org. Any mail you send to this address will be distributed through the bug-gnuastro mailing list. This is the simplest way to send us bug reports. The developers will then register the bug into the project web page (next choice) for you.

- Use the Gnuastro project web page at https://savannah.gnu.org/projects/gnuastro/: There are two ways to get to the submission page as listed below. Fill in the form as described below and submit it (see Gnuastro project webpage for more on the project web page).

- Using the top horizontal menu items, immediately under the top page title. Hovering your mouse on “Support” will open a drop-down list. Select “Submit new”. Also if you have an account in Savannah, you can choose “Bugs” in the menu items and then select “Submit new”.

- In the main body of the page, under the “Communication tools” section, click on “Submit new item”.

Once the items have been registered in the mailing list or web page, the developers will add them to either the “Bug Tracker” or “Task Manager” trackers of the Gnuastro project web page. These two trackers can only be edited by the Gnuastro project developers, but they can be browsed by anyone, so you can follow the progress on your bug. You are most welcome to join us in developing Gnuastro, and fixing the bug you have found may be a good starting point. Gnuastro is designed to be easy for anyone to develop (see Gnuastro manifesto: Science and its tools) and there is a full chapter devoted to developing it: Developing.

Savannah’s Markup: When posting to Savannah, it helps to have the code displayed in mono-space font and a different background, you may also want to make a list of items or make some words bold. For features like these, you should use Savannah’s “Markup” guide at https://savannah.gnu.org/markup-test.php. You can access this page by clicking on the “Full Markup” link that is just beside the “Preview” button, near the box that you write your comments. As you see there, for example when you want to high-light code, you should put it within a “+verbatim+” and “-verbatim-” environment like below:

+verbatim+
astarithmetic image.fits image_arith.fits -h1 isblank nan where
-verbatim-

Unfortunately, Savannah doesn’t have a way to edit submitted comments. Therefore be sure to press the “Preview” button and check your report’s final format before the final submission.

1.10 Suggest new feature ¶

We would always be happy to hear of suggested new features. For every program, there are already lists of features that we are planning to add. You can see the current list of plans from the Gnuastro project web page at https://savannah.gnu.org/projects/gnuastro/ and following “Tasks”→“Browse” on the horizontal menu at the top of the page immediately under the title, see Gnuastro project webpage. If you want to request a feature to an existing program, click on the “Display Criteria” above the list and under “Category”, choose that particular program. Under “Category” you can also see the existing suggestions for new programs or other cases like installation, documentation or libraries. Also, be sure to set the “Open/Closed” value to “Any”.

If the feature you want to suggest is not already listed in the task manager, then follow the steps that are fully described in Report a bug. Please keep in mind that the developers are all busy with their own astronomical research, and with implementing existing “task”s or resolving bugs. Gnuastro is a volunteer effort and none of the developers are paid for their hard work. So, although we will try our best, please do not expect your suggested feature to be immediately included (for the next release of Gnuastro).

The best person to apply the exciting new feature you have in mind is you, since you have the motivation and need. In fact, Gnuastro is designed for making it as easy as possible for you to hack into it (add new features, change existing ones and so on), see Gnuastro manifesto: Science and its tools. Please have a look at the chapter devoted to developing (Developing) and start applying your desired feature. Once you have added it, you can use it for your own work and if you feel you want others to benefit from your work, you can request for it to become part of Gnuastro. You can then join the developers and start maintaining your own part of Gnuastro. If you choose to take this path of action please contact us beforehand (Report a bug) so we can avoid possible duplicate activities and get interested people in contact.

Gnuastro is a collection of low level programs: As described in Program design philosophy, a founding principle of Gnuastro is that each library or program should be basic and low-level. High level jobs should be done by running the separate programs or using separate functions in succession through a shell script or calling the libraries by higher level functions, see the examples in Tutorials. So when making the suggestions please consider how your desired job can best be broken into separate steps and modularized.

1.11 Announcements ¶

Gnuastro has a dedicated mailing list for making announcements (info-gnuastro).

Anyone can subscribe to this mailing list.

Anytime there is a new stable or test release, an email will be circulated there.

The email contains a summary of the overall changes along with a detailed list (from the NEWS file).

This mailing list is thus the best way to stay up to date with new releases, easily learn about the updated/new features, or dependencies (see Dependencies).

To subscribe to this list, please visit https://lists.gnu.org/mailman/listinfo/info-gnuastro. Traffic (number of mails per unit time) in this list is designed to be low: only a handful of mails per year. Previous announcements are available on its archive.

1.12 Conventions ¶

In this book we have the following conventions:

- All commands that are to be run on the shell (command-line) prompt as the user start with a $. In case they must be run as a superuser or system administrator, they will start with a single #. If the command is in a separate line and the next line is also in the code type face, but does not have any of the $ or # signs, then it is the output of the command after it is run. As a user, you do not need to type those lines. A line that starts with ## is just a comment for explaining the command to a human reader and must not be typed.

- If the command becomes larger than the page width a \ is inserted in the code.

If you are typing the code by hand on the command-line, you do not need to use multiple lines or add the extra space characters, so you can omit them.

If you want to copy and paste these examples (highly discouraged!) then the \ should stay.

The \ character is a shell escape character which is commonly used to make characters that have a special meaning for the shell lose that special meaning (the shell will not treat them specially if there is a \ before them). When \ is the last visible character in a line (the next character is a new-line character) the new-line character loses its meaning. Therefore, the shell does not see it as the end of a command! This enables you to use multiple lines to write your commands.
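The two uses of \ described above can be tried with the following minimal sketch; it uses only `echo`, and the variable names are arbitrary.

```shell
#!/bin/sh
# The same command written on one line, and split over two lines with a
# trailing backslash: the shell joins the lines before running it.
one_line=$(echo alpha beta gamma)
two_lines=$(echo alpha beta \
                 gamma)
echo "$one_line"
echo "$two_lines"

# A backslash before a special character removes its special meaning:
# here '$' is printed literally instead of starting a variable name.
literal=$(echo \$HOME)
echo "$literal"
```

Both of the first two commands print the same text, which is why the \ (and the extra spaces after it) can be omitted when typing a command on a single line.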

This is not a convention, but a by-product of the PDF building process of the manual:

In the PDF version of this manual, a single quote (or apostrophe) character in the commands or codes is shown like this: '.

Single quotes are sometimes necessary in combination with commands like awk or sed, or when using Column arithmetic in Gnuastro’s own Table (see Column arithmetic).

Therefore when typing (recommended) or copy-pasting (not recommended) the commands that have a ', please correct it to the single-quote (or apostrophe) character, otherwise the command will fail.
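The sketch below shows why the plain single quote matters, using `awk` on made-up numbers. The second part assumes that the PDF shows the quote as the typographic character ’ and that `sed` handles it in your locale; it is only one possible way to repair a pasted command.

```shell
#!/bin/sh
# Inside plain single quotes the shell passes the text to awk unchanged,
# so awk (not the shell) interprets $2 as "the second column".
total=$(printf '1 2\n3 4\n' | awk '{ sum += $2 } END { print sum }')
echo "$total"

# A command copied from the PDF may contain typographic quotes
# (assumed here to be the character ’); replace them with plain ones.
pasted="awk ’{print \$1}’"
fixed=$(printf '%s' "$pasted" | sed "s/’/'/g")
echo "$fixed"
```

With the typographic quotes left in place, the shell would not treat the awk program as a single quoted argument and the command would fail.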

1.13 Acknowledgments and short history ¶

Gnuastro would not have been possible without scholarships and grants from several funding institutions. To acknowledge/cite Gnuastro in your publications, see Citing Gnuastro. Here, you will find Gnuastro’s own acknowledgments to all the grants, institutions and of course people who helped make Gnuastro what it is today.

The Japanese Ministry of Education, Culture, Sports, Science, and Technology (MEXT) scholarships for Mohammad Akhlaghi’s Masters (2010 to 2012) and PhD (2012 to 2015) degrees in Tohoku University Astronomical Institute had an instrumental role in the long term learning and planning that made the idea of Gnuastro possible. The very critical view points of Professor Takashi Ichikawa (Mohammad’s PhD thesis director) were also instrumental in the initial ideas and foundational philosophy/design of Gnuastro.

Afterwards, the European Research Council (ERC) advanced grant 339659-MUSICOS (Principal investigator, or PI: Roland Bacon) was vital in the growth and expansion of Gnuastro since it supported Mohammad’s first postdoc position from 2016 to 2018. Working with Roland at the Centre de Recherche Astrophysique de Lyon (CRAL, Saint-Genis-Laval, France), enabled a thorough re-write of the core functionality of all libraries and programs (to generalize them for usage on the wonderful MUSE 3D cubes). This maturation phase made it possible for Gnuastro to grow into the large collection of generic programs and libraries it is today. In this period, David Valls-Gabaud (of Paris Observatory) was also instrumental to Gnuastro through sponsoring it in several EU-wide meetings helping to inform the community of this new approach.

In late 2018, Mohammad moved to the Instituto de Astrofisica de Canarias (IAC, Tenerife, Spain) to work with Johan Knapen and Ignacio Trujillo as part of IAC’s project P/300724, financed by the SMSI, through the Canary Islands Department of Economy, Knowledge and Employment. With the support and help of Ignacio and Johan, Gnuastro’s user base significantly grew in this phase. The useful feedback from the many new users resulted in new/improved functionality/features in all the programs.

Since the summer of 2021, work on Gnuastro has continued primarily at the Centro de Estudios de Física del Cosmos de Aragón (CEFCA), located in Teruel, Spain. Mohammad is a staff researcher at CEFCA and the PI (or collaborator) of grants that are directly or indirectly (through higher-level projects that use Gnuastro) related to the development of Gnuastro.

As of this release, the main grant organizations (and their grants) that have supported Gnuastro (from 2020 with Mohammad as the PI or collaborator) have been:

- Spanish and Aragón governments

In particular the Fondo de Inversiones de Teruel (FITE, or Teruel investment fund), which is funded jointly by the national and Aragonese governments, as well as the dedicated Aragonese government research group 16_23R funding to CEFCA.

- Agencia Estatal de Investigación (AEI, or Spanish state research agency)

In particular Grants PID2021-124918NA-C43 (PI: Mohammad from 2022-09 for three years as part of J-PAS and J-PLUS), PID2022-138896NA-C54 (PI: Helena Dominguez Sánchez as part of ARRAKIHS from 2023-09 for three years) and PID2024-162229NB-I00 (PI: Mohammad from 2025 for four years; for Gnuastro, Maneage to be used in J-PAS, ARRAKIHS and Euclid) which are all funded by MICIU/AEI/10.13039/501100011033 and “ERDF A way of making Europe”, by “ERDF/EU”.

- Google Summer of Code (GSoC)

We applied for GSoC in the following years and all applications have been successful so far: 2020, 2021, 2022, 2023, 2024. Special thanks go to the GNU (for 2020) and OpenAstronomy (rest of the years) GSoC organizations for enabling this.

Rafael Guzmán (the PI of ARRAKIHS) and its high-level management deserve a special mention regarding the two ARRAKIHS-related grants above. Their trust and support for Gnuastro directly led to Gnuastro becoming the core tool for the ARRAKIHS data reduction pipeline (known as the ARRAKIHS Harvester): it is fully written with Gnuastro and Maneage. Thanks to all the direct support from ARRAKIHS, J-PAS and J-PLUS, they are now the primary drivers of Gnuastro’s development, helping us reach the vision (see GNU Astronomy Utilities 1.0). Being the primary driver only refers to long-term strategic decisions; it does not imply ignoring general user support or bugs found by others. As always, we will address bug reports or simple feature addition requests with high priority.

However, Gnuastro is free and open source software (see Your rights) and every contributor has assigned the copyright of all their contributions to the Free Software Foundation to ensure its freedom in the future (see Copyright assignment). Therefore, all the components added and bugs fixed for the driving projects can benefit the whole community. We welcome collaborations with any other mission/survey (to focus on their particular needs) if they can provide dedicated personnel to help in those developments.

The full list of Gnuastro authors is available at the start of this book (on the third page of its PDF) and the AUTHORS file in the source code (both are generated automatically from the version controlled history). The plain text file THANKS (also distributed along with the source code) contains the list of people who contributed indirectly to Gnuastro: not committing any code in the Gnuastro version controlled history, but providing useful and constructive comments, suggestions and bug reports. Such contributions have also been critical for Gnuastro’s current state, so we would like to gratefully acknowledge their role here (in alphabetical order by family name):

Valentina Abril-melgarejo, Marjan Akbari, Carlos Allende Prieto, Hamed Altafi, Roland Bacon, Roberto Baena Gallé, Zahra Bagheri, Karl Berry, Faezeh Bidjarchian, Leindert Boogaard, Nicolas Bouché, Stefan Brüns, Fernando Buitrago Alonso, Adrian Bunk, Rosa Calvi, Mark Calabretta, Juan Castillo Ramírez, Alejandro Camazón Pinilla, Nushkia Chamba, Sergio Chueca Urzay, Tamara Civera Lorenzo, Benjamin Clement, Nima Dehdilani, Andrés Del Pino Molina, Antonio Diaz Diaz, Paola Dimauro, Alexey Dokuchaev, Pierre-Alain Duc, Alessandro Ederoclite, Elham Eftekhari, Paul Eggert, Sepideh Eskandarlou, Sílvia Farras, Juan Antonio Fernández Ontiveros, Gaspar Galaz, Andrés García-Serra Romero, Zohre Ghaffari, Thérèse Godefroy, Giulia Golini, Craig Gordon, Martin Guerrero Roncel, Madusha Gunawardhana, Rafael Gúzman, Bruno Haible, Stephen Hamer, Siyang He, Zahra Hosseini, Leslie Hunt, Takashi Ichikawa, Raúl Infante Sainz, Brandon Invergo, Oryna Ivashtenko, Aurélien Jarno, Ooldooz Kabood, Lee Kelvin, Brandon Kelly, Mohammad-Reza Khellat, Johan Knapen, Geoffry Krouchi, Martin Kuemmel, Teet Kuutma, Clotilde Laigle, Floriane Leclercq, Alan Lefor, Javier Licandro, Jeremy Lim, Giacomo Lorenzetti, Alejandro Lumbreras Calle, Sebastián Luna Valero, Alberto Madrigal, Guillaume Mahler, Carlos Marrero de La Rosa, Juan Miro, Alireza Molaeinezhad, Javier Moldon, Juan Molina Tobar, Francesco Montanari, Raphael Morales, Carlos Morales Socorro, Sylvain Mottet, Benson Muite, Dmitrii Oparin, François Ochsenbein, Bertrand Pain, Rahna Payyasseri Thanduparackal, William Pence, Irene Pintos Castro, Mamta Pommier, Marcel Popescu, Bob Proulx, Joseph Putko, Samane Raji, Ignacio Ruiz Cejudo, Teymoor Saifollahi, Joanna Sakowska, Elham Saremi, Nafise Sedighi, Markus Schaney, Yahya Sefidbakht, Alejandro Serrano Borlaff, Zahra Sharbaf, David Shupe, Leigh Smith, Elizabeth Sola, Jenny Sorce, Manuel Sánchez-Benavente, Lee Spitler, Richard Stallman, Michael Stein, Ole Streicher, Alfred M. Szmidt, Michel Tallon, Juan C. Tello, Vincenzo Testa, Peter Teuben, Éric Thiébaut, Ignacio Trujillo, Hok Kan (Ronald) Tsang, Mathias Urbano, David Valls-Gabaud, Jesús Varela, Jesús Vega, Aaron Watkins, Richard Wilbur, Phil Wyett, Dennis Williamson, Michael H.F. Wilkinson, Christopher Willmer, Greg Wooledge, Xiuqin Wu, Sara Yousefi Taemeh, Johannes Zabl.

The GNU French Translation Team is also managing the French version of the top Gnuastro web page, which we highly appreciate. Finally, we should thank all the (sometimes anonymous) people in various online forums who patiently answered all our small (but important) technical questions.

2 Tutorials

To help new users have a smooth and easy start with Gnuastro, this chapter provides several detailed tutorials, or cookbooks. These tutorials demonstrate the capabilities of different Gnuastro programs and libraries, along with tips and guidelines for the best practices of using them in various realistic situations.

We strongly recommend going through these tutorials to get a good feeling of how the programs are related (built in a modular design to be used together in a pipeline), very similar to the core Unix-based programs that they were modeled on. These tutorials will therefore help you use Gnuastro’s programs (and generally, the Unix-like command-line environment) effectively for your research.

The first three tutorials (General program usage tutorial, Detecting large extended targets and Building the extended PSF) use real input datasets from some of the deep Hubble Space Telescope (HST) images, the Sloan Digital Sky Survey (SDSS) and the Javalambre Photometric Local Universe Survey (J-PLUS) respectively. Their aim is to demonstrate some real-world problems that many astronomers often face and how they can be solved with Gnuastro’s programs. The fourth tutorial (Sufi simulates a detection) focuses on simulating astronomical images, which is another critical aspect of any analysis!

The ultimate aim of General program usage tutorial is to detect galaxies in a deep HST image, measure their positions and magnitudes, and select those with the strongest colors. In the process, it takes many detours to introduce you to the useful capabilities of many of the programs. So please be patient in reading it. If you do not have much time and can only try one of the tutorials, we recommend this one.

Detecting large extended targets deals with a major problem in astronomy: effectively detecting the faint outer wings of bright (and large) nearby galaxies to extremely low surface brightness levels (roughly one quarter of the local noise level in the example discussed). Besides the interesting scientific questions in these low surface brightness features, failure to properly detect them will bias the measurements of the background objects and the survey’s noise estimates. This is an important issue, especially in wide surveys, because bright/large galaxies and stars cover a significant fraction of the survey area.

Building the extended PSF tackles an important problem in astronomy: how to extract the PSF of an image, to the largest possible extent, without assuming any functional form. In Gnuastro we have multiple installed scripts for this job. Their usage, and the logic behind tuning them for each step, are fully described in this tutorial on a real dataset. The tutorial concludes with subtracting that extended PSF from the science image, thus giving you a cleaner image (with no scattered light of the brighter stars) for your higher-level analysis.

Sufi simulates a detection has a fictional setting! It shows how Abd al-rahman Sufi (903 – 986 A.D., the first recorded description of “nebulous” objects in the heavens is attributed to him) could have used some of Gnuastro’s programs for a realistic simulation of his observations to see if his detection of nebulous objects was trustworthy. Because all conditions are under control in a simulated/mock environment/dataset, mock datasets can be a valuable tool to inspect the limitations of your data analysis and processing. But they need to be as realistic as possible, so this tutorial is dedicated to this important step of an analysis (simulations).

There are also other tutorials on tasks that are commonly necessary in astronomical research. In Detecting lines and extracting spectra in 3D data, we use MUSE cubes (an IFU dataset) to show how you can subtract the continuum, detect emission-line features, extract spectra and build synthetic narrow-band images. In Color channels in same pixel grid we demonstrate how you can warp multiple images into a single pixel grid (often necessary with multi-wavelength data), and build a single color image. In Moiré pattern in coadding and its correction we show how you can avoid the unwanted Moiré pattern which happens when warping separate exposures to build a coadded deeper image. In Zero point of an image we review the process of estimating the zero point of an image using a reference image or catalog. Finally, in Pointing pattern design we show the process by which you can simulate a dither pattern to find the best observing strategy for your next exciting scientific project.

In these tutorials, we have intentionally avoided too many cross references to make them easier to read. For more information about a particular program, you can visit the section with the same name as the program in this book. Each program section in the subsequent chapters starts by explaining the general concepts behind what it does, for example, see Convolve. If you only want practical information on running a program, for example, its options/configuration, input(s) and output(s), please consult the subsection titled “Invoking ProgramName”, for example, see Invoking NoiseChisel. For an explanation of the conventions we use in the example code throughout the book, please see Conventions.

- General program usage tutorial

- Detecting large extended targets

- Building the extended PSF

- Sufi simulates a detection

- Detecting lines and extracting spectra in 3D data

- Color images with full dynamic range

- Zero point of an image

- Pointing pattern design

- Moiré pattern in coadding and its correction

- Clipping outliers

2.1 General program usage tutorial

Measuring colors of astronomical objects in broad-band or narrow-band images is one of the most basic and common steps in astronomical analysis. Here, we will use Gnuastro’s programs to get a physical scale (area at certain redshifts) of the field we are studying, detect objects in a Hubble Space Telescope (HST) image, measure their colors, identify the ones with the strongest colors, visually inspect these objects, and inspect their spatial positions in the image. After this tutorial, you can also try the Detecting large extended targets tutorial which goes into a little more detail on detecting very low surface brightness signal.

During the tutorial, we will take many detours to explain, and practically demonstrate, the many capabilities of Gnuastro’s programs. In the end you will see that the things you learned during this tutorial are much more generic than this particular problem and can be used in solving a wide variety of problems involving the analysis of data (images or tables). So please do not rush, and go through the steps patiently to optimally master Gnuastro.

In this tutorial, we will use the HST eXtreme Deep Field dataset. Like almost all astronomical surveys, this dataset is free for download and usable by the public. You will need the following tools in this tutorial: Gnuastro, SAO DS9, GNU Wget, and AWK (most common implementation is GNU AWK).
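Before starting, it may help to confirm that the required tools can be found on your system. The following is only a minimal sketch and not part of the tutorial itself: the function name check_tools is hypothetical, and the executable names (astnoisechisel as one representative Gnuastro program, ds9, wget and awk) are assumptions about how these tools are installed on your system.

```shell
# Hypothetical helper: report which of the given commands are not found
# in the current PATH. Prints one missing name per line and returns a
# non-zero status if at least one command is missing.
check_tools() {
    missing=0
    for tool in "$@"; do
        # 'command -v' is the POSIX way to test if a command is available.
        if ! command -v "$tool" >/dev/null 2>&1; then
            echo "$tool"
            missing=1
        fi
    done
    return $missing
}

# Assumed executable names; adjust them to match your installation.
check_tools astnoisechisel ds9 wget awk \
    || echo "The commands listed above were not found in your PATH."
```

If nothing is printed, all four commands were found. Any name that is printed was not found in your PATH and may need to be installed (or installed under a different name) before continuing.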