Linear Algebra

This chapter describes functions for solving linear systems. The library provides linear algebra operations which operate directly on the gsl_vector and gsl_matrix objects. These routines use the standard algorithms from Golub & Van Loan’s Matrix Computations with Level-1 and Level-2 BLAS calls for efficiency.

The functions described in this chapter are declared in the header file gsl_linalg.h.

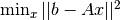

LU Decomposition

A general $M$-by-$N$ matrix $A$ has an $LU$ decomposition

$$P A = L U$$

where $P$ is an $M$-by-$M$ permutation matrix, $L$ is $M$-by-$\min(M,N)$ and $U$ is $\min(M,N)$-by-$N$. For square matrices, $L$ is a lower unit triangular matrix and $U$ is upper triangular. For $M > N$, $L$ is a unit lower trapezoidal matrix of size $M$-by-$N$. For $M < N$, $U$ is upper trapezoidal of size $M$-by-$N$.

For square matrices this decomposition can be used to convert the linear system $A x = b$ into a pair of triangular systems ($L y = P b$, $U x = y$), which can be solved by forward and back-substitution. Note that the $LU$ decomposition is valid for singular matrices.
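A small worked example (the matrix is chosen here purely for illustration) shows how partial pivoting produces the factors. The largest entry in the first column is 3, so the two rows are interchanged:

$$A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}, \quad P = \begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix}, \quad P A = \begin{pmatrix} 3 & 4 \\ 1 & 2 \end{pmatrix} = \underbrace{\begin{pmatrix} 1 & 0 \\ 1/3 & 1 \end{pmatrix}}_{L} \underbrace{\begin{pmatrix} 3 & 4 \\ 0 & 2/3 \end{pmatrix}}_{U}$$

One interchange was made, so the sign of the permutation is $-1$ and $\det(A) = (-1) \cdot 3 \cdot \tfrac{2}{3} = -2$, which agrees with $1 \cdot 4 - 2 \cdot 3$.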

int gsl_linalg_LU_decomp(gsl_matrix *A, gsl_permutation *p, int *signum)
int gsl_linalg_complex_LU_decomp(gsl_matrix_complex *A, gsl_permutation *p, int *signum)

These functions factorize the matrix A into the $LU$ decomposition $PA = LU$. On output the diagonal and upper triangular (or trapezoidal) part of the input matrix A contain the matrix $U$. The lower triangular (or trapezoidal) part of the input matrix (excluding the diagonal) contains $L$. The diagonal elements of $L$ are unity, and are not stored.

The permutation matrix $P$ is encoded in the permutation p on output. The $j$-th column of the matrix $P$ is given by the $k$-th column of the identity matrix, where $k = p_j$ is the $j$-th element of the permutation vector. The sign of the permutation is given by signum. It has the value $(-1)^n$, where $n$ is the number of interchanges in the permutation.

The algorithm used in the decomposition is Gaussian Elimination with partial pivoting (Golub & Van Loan, Matrix Computations, Algorithm 3.4.1), combined with a recursive algorithm based on Level 3 BLAS (Peise and Bientinesi, 2016).

The functions return GSL_SUCCESS for non-singular matrices. If the matrix is singular, the factorization is still completed, and the functions return an integer $k \in [1, \min(M,N)]$ such that $U(k,k) = 0$. In this case, $U$ is singular and the decomposition should not be used to solve linear systems.
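The following plain-C sketch illustrates Gaussian elimination with partial pivoting and the in-place storage scheme described above ($U$ on and above the diagonal, the multipliers of $L$ below it, unit diagonal implied), including the "factor anyway, report the first zero pivot" behaviour. It is not GSL's recursive Level 3 implementation and handles only square matrices. The function name lu_decomp_sketch, the row-major double array, and the perm convention (perm[i] is the original row now stored in row i) are assumptions of this example and are not the gsl_permutation encoding.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

/* Sketch: in-place LU with partial pivoting of a square n-by-n row-major
 * matrix a, so that P A = L U.  On return the strict lower triangle holds
 * L (unit diagonal not stored), the upper triangle holds U, perm[i] is the
 * original row now in row i, and *signum = (-1)^(number of interchanges).
 * Returns 0, or the 1-based index k of the first zero pivot U(k,k); the
 * factorization is completed in either case. */
int lu_decomp_sketch(size_t n, double *a, size_t *perm, int *signum)
{
    assert(a != NULL && perm != NULL && signum != NULL);
    int singular = 0;
    *signum = 1;
    for (size_t i = 0; i < n; i++)
        perm[i] = i;

    for (size_t j = 0; j < n; j++) {
        /* Partial pivoting: largest |a(i,j)| on or below the diagonal. */
        size_t piv = j;
        double maxabs = fabs(a[j * n + j]);
        for (size_t i = j + 1; i < n; i++) {
            if (fabs(a[i * n + j]) > maxabs) {
                maxabs = fabs(a[i * n + j]);
                piv = i;
            }
        }
        if (piv != j) {
            /* Swap whole rows, including multipliers already stored. */
            for (size_t c = 0; c < n; c++) {
                double t = a[j * n + c];
                a[j * n + c] = a[piv * n + c];
                a[piv * n + c] = t;
            }
            size_t tp = perm[j];
            perm[j] = perm[piv];
            perm[piv] = tp;
            *signum = -*signum;
        }
        double pivot = a[j * n + j];
        if (pivot == 0.0) {
            /* Whole column below is zero: nothing to eliminate. */
            if (!singular)
                singular = (int)(j + 1);
            continue;
        }
        for (size_t i = j + 1; i < n; i++) {
            double m = a[i * n + j] / pivot;
            a[i * n + j] = m;                 /* store multiplier L(i,j) */
            for (size_t c = j + 1; c < n; c++)
                a[i * n + c] -= m * a[j * n + c];
        }
    }
    return singular;
}
```

For $A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}$ the sketch swaps the two rows, sets signum to $-1$, and leaves $U = \begin{pmatrix} 3 & 4 \\ 0 & 2/3 \end{pmatrix}$ with the multiplier $1/3$ stored below the diagonal.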

int gsl_linalg_LU_solve(const gsl_matrix *LU, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x)
int gsl_linalg_complex_LU_solve(const gsl_matrix_complex *LU, const gsl_permutation *p, const gsl_vector_complex *b, gsl_vector_complex *x)

These functions solve the square system $A x = b$ using the $LU$ decomposition of $A$ into (LU, p) given by gsl_linalg_LU_decomp() or gsl_linalg_complex_LU_decomp() as input.
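Solving with the factors amounts to one forward substitution with the unit lower triangular $L$ (applied to the permuted right-hand side) followed by one back-substitution with $U$. A hedged plain-C sketch, assuming a square row-major array holding the factors in place and the hypothetical convention that perm[i] is the original row now stored in row i; lu_solve_sketch is an illustrative name, not a GSL function:

```c
#include <assert.h>
#include <stddef.h>

/* Sketch: solve A x = b given P A = L U stored in place in the row-major
 * n-by-n array lu (unit-diagonal L strictly below the diagonal, U on and
 * above it), where perm[i] is the original row stored in row i. */
void lu_solve_sketch(size_t n, const double *lu, const size_t *perm,
                     const double *b, double *x)
{
    /* Forward substitution L y = P b; y is stored in x. */
    for (size_t i = 0; i < n; i++) {
        double s = b[perm[i]];
        for (size_t j = 0; j < i; j++)
            s -= lu[i * n + j] * x[j];
        x[i] = s;
    }
    /* Back substitution U x = y, overwriting y with x. */
    for (size_t i = n; i-- > 0;) {
        double s = x[i];
        for (size_t j = i + 1; j < n; j++)
            s -= lu[i * n + j] * x[j];
        assert(lu[i * n + i] != 0.0);   /* U must be nonsingular */
        x[i] = s / lu[i * n + i];
    }
}
```

With the factors of $\begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}$ and $b = (3, 7)$, the forward sweep gives $y = (7, 2/3)$ and the backward sweep gives $x = (1, 1)$.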

int gsl_linalg_LU_svx(const gsl_matrix *LU, const gsl_permutation *p, gsl_vector *x)
int gsl_linalg_complex_LU_svx(const gsl_matrix_complex *LU, const gsl_permutation *p, gsl_vector_complex *x)

These functions solve the square system $A x = b$ in-place using the precomputed $LU$ decomposition of $A$ into (LU, p). On input x should contain the right-hand side $b$, which is replaced by the solution on output.

int gsl_linalg_LU_refine(const gsl_matrix *A, const gsl_matrix *LU, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x, gsl_vector *work)
int gsl_linalg_complex_LU_refine(const gsl_matrix_complex *A, const gsl_matrix_complex *LU, const gsl_permutation *p, const gsl_vector_complex *b, gsl_vector_complex *x, gsl_vector_complex *work)

These functions apply an iterative improvement to x, the solution of $A x = b$, from the precomputed $LU$ decomposition of $A$ into (LU, p). Additional workspace of length $N$ is required in work.
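Iterative refinement reuses the existing factors: compute the residual $r = b - A x$ with the original matrix, solve $A d = r$ with the $LU$ factors, and update $x \leftarrow x + d$. The plain-C sketch below performs one such step. The function names, the row-major layout, the perm convention (perm[i] is the original row now stored in row i) and the $2N$-element work array are assumptions of this example (GSL's routine needs only length $N$); a careful implementation may also accumulate the residual in higher precision.

```c
#include <assert.h>
#include <stddef.h>

/* Helper: solve (L U) x = P b for the in-place LU layout used here,
 * where perm[i] is the original row stored in row i. */
static void lu_solve_helper(size_t n, const double *lu, const size_t *perm,
                            const double *b, double *x)
{
    for (size_t i = 0; i < n; i++) {          /* forward: L y = P b */
        double s = b[perm[i]];
        for (size_t j = 0; j < i; j++)
            s -= lu[i * n + j] * x[j];
        x[i] = s;
    }
    for (size_t i = n; i-- > 0;) {             /* backward: U x = y */
        double s = x[i];
        for (size_t j = i + 1; j < n; j++)
            s -= lu[i * n + j] * x[j];
        assert(lu[i * n + i] != 0.0);
        x[i] = s / lu[i * n + i];
    }
}

/* One step of iterative refinement for A x = b (sketch):
 *   r = b - A x, solve A d = r with the LU factors, x = x + d.
 * A is the original n-by-n row-major matrix; work holds 2*n doubles. */
void lu_refine_sketch(size_t n, const double *A, const double *lu,
                      const size_t *perm, const double *b, double *x,
                      double *work)
{
    double *r = work;
    double *d = work + n;
    for (size_t i = 0; i < n; i++) {
        double s = b[i];
        for (size_t j = 0; j < n; j++)
            s -= A[i * n + j] * x[j];
        r[i] = s;
    }
    lu_solve_helper(n, lu, perm, r, d);
    for (size_t i = 0; i < n; i++)
        x[i] += d[i];
}
```

Starting from the rough guess $x = (0.9, 1.2)$ for $A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}$ and $b = (3, 7)$, one step gives $d = (0.1, -0.2)$ and recovers $x = (1, 1)$ up to rounding, because the factors in this small example are exact.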

-

int gsl_linalg_LU_invert(const gsl_matrix *LU, const gsl_permutation *p, gsl_matrix *inverse)¶

-

int gsl_linalg_complex_LU_invert(const gsl_matrix_complex *LU, const gsl_permutation *p, gsl_matrix_complex *inverse)¶

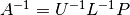

These functions compute the inverse of a matrix

from its

from its

decomposition (

decomposition (LU,p), storing the result in the matrixinverse. The inverse is computed by computing the inverses ,

,  and finally forming the product

and finally forming the product

. Each step is based on Level 3 BLAS calls.

. Each step is based on Level 3 BLAS calls.It is preferable to avoid direct use of the inverse whenever possible, as the linear solver functions can obtain the same result more efficiently and reliably (consult any introductory textbook on numerical linear algebra for details).

int gsl_linalg_LU_invx(gsl_matrix *LU, const gsl_permutation *p)
int gsl_linalg_complex_LU_invx(gsl_matrix_complex *LU, const gsl_permutation *p)

These functions compute the inverse of a matrix $A$ from its $LU$ decomposition (LU, p), storing the result in-place in the matrix LU. The inverse is computed by computing the inverses $U^{-1}$, $L^{-1}$ and finally forming the product $A^{-1} = U^{-1} L^{-1} P$. Each step is based on Level 3 BLAS calls.

It is preferable to avoid direct use of the inverse whenever possible, as the linear solver functions can obtain the same result more efficiently and reliably (consult any introductory textbook on numerical linear algebra for details).

-

double gsl_linalg_LU_det(gsl_matrix *LU, int signum)¶

-

gsl_complex gsl_linalg_complex_LU_det(gsl_matrix_complex *LU, int signum)¶

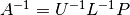

These functions compute the determinant of a matrix

from its

from its

decomposition,

decomposition, LU. The determinant is computed as the product of the diagonal elements of and the sign of the row

permutation

and the sign of the row

permutation signum.

double gsl_linalg_LU_lndet(gsl_matrix *LU)
double gsl_linalg_complex_LU_lndet(gsl_matrix_complex *LU)

These functions compute the logarithm of the absolute value of the determinant of a matrix $A$, $\ln|\det(A)|$, from its $LU$ decomposition, LU. These functions may be useful if the direct computation of the determinant would overflow or underflow.

int gsl_linalg_LU_sgndet(gsl_matrix *LU, int signum)
gsl_complex gsl_linalg_complex_LU_sgndet(gsl_matrix_complex *LU, int signum)

These functions compute the sign or phase factor of the determinant of a matrix $A$, $\det(A)/|\det(A)|$, from its $LU$ decomposition, LU.

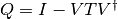

QR Decomposition¶

A general rectangular  -by-

-by- matrix

matrix  has a

has a

decomposition into the product of a unitary

decomposition into the product of a unitary

-by-

-by- square matrix

square matrix  (where

(where  ) and

an

) and

an  -by-

-by- right-triangular matrix

right-triangular matrix  ,

,

This decomposition can be used to convert the square linear system  into the triangular system

into the triangular system  , which can be solved by

back-substitution. Another use of the

, which can be solved by

back-substitution. Another use of the  decomposition is to

compute an orthonormal basis for a set of vectors. The first

decomposition is to

compute an orthonormal basis for a set of vectors. The first  columns of

columns of  form an orthonormal basis for the range of

form an orthonormal basis for the range of  ,

,

, when

, when  has full column rank.

has full column rank.

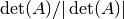

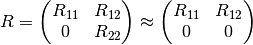

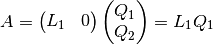

When  , the bottom

, the bottom  rows of

rows of  are zero,

and so

are zero,

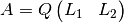

and so  can be naturally partioned as

can be naturally partioned as

where  is

is  -by-

-by- upper triangular,

upper triangular,  is

is  -by-

-by- , and

, and

is

is  -by-

-by- .

.  is sometimes called the thin or reduced

QR decomposition. The solution of the least squares problem

is sometimes called the thin or reduced

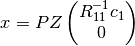

QR decomposition. The solution of the least squares problem  when

when  has full rank is:

has full rank is:

where  is the first

is the first  elements of

elements of  . If

. If  is rank deficient,

see QR Decomposition with Column Pivoting and Complete Orthogonal Decomposition.

is rank deficient,

see QR Decomposition with Column Pivoting and Complete Orthogonal Decomposition.
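The formula for $x$ follows because multiplying by the unitary matrix $Q^H$ does not change the 2-norm. Writing $Q^H b = \begin{pmatrix} c_1 \\ c_2 \end{pmatrix}$ with $c_2$ of length $M - N$:

$$\|b - A x\|^2 = \|Q^H b - R x\|^2 = \left\| \begin{pmatrix} c_1 - R_1 x \\ c_2 \end{pmatrix} \right\|^2 = \|c_1 - R_1 x\|^2 + \|c_2\|^2$$

The first term vanishes for $x = R_1^{-1} c_1$ and the second does not depend on $x$, so the minimum residual norm is $\|c_2\|$. This is why least-squares routines following the LAPACK DGELS convention can return a vector whose norm equals the residual norm in the trailing $M - N$ entries of the solution vector.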

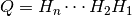

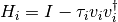

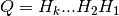

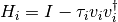

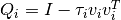

GSL offers two interfaces for the  decomposition. The first proceeds by zeroing

out columns below the diagonal of

decomposition. The first proceeds by zeroing

out columns below the diagonal of  , one column at a time using Householder transforms.

In this method, the factor

, one column at a time using Householder transforms.

In this method, the factor  is represented as a product of Householder reflectors:

is represented as a product of Householder reflectors:

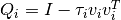

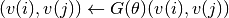

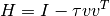

where each  for a scalar

for a scalar  and column vector

and column vector

. In this method, functions which compute the full matrix

. In this method, functions which compute the full matrix  or apply

or apply

to a right hand side vector operate by applying the Householder matrices one

at a time using Level 2 BLAS.

to a right hand side vector operate by applying the Householder matrices one

at a time using Level 2 BLAS.

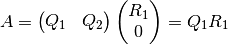

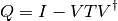

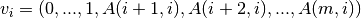

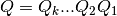

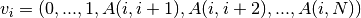

The second interface is based on a Level 3 BLAS block recursive algorithm developed by

Elmroth and Gustavson. In this case,  is written in block form as

is written in block form as

where  is an

is an  -by-

-by- matrix of the column vectors

matrix of the column vectors  and

and  is an

is an  -by-

-by- upper triangular matrix, whose diagonal elements

are the

upper triangular matrix, whose diagonal elements

are the  . Computing the full

. Computing the full  , while requiring more flops than

the Level 2 approach, offers the advantage that all standard operations can take advantage

of cache efficient Level 3 BLAS operations, and so this method often performs faster

than the Level 2 approach. The functions for the recursive block algorithm have a

, while requiring more flops than

the Level 2 approach, offers the advantage that all standard operations can take advantage

of cache efficient Level 3 BLAS operations, and so this method often performs faster

than the Level 2 approach. The functions for the recursive block algorithm have a

_r suffix, and it is recommended to use this interface for performance

critical applications.
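The block form can be built incrementally from the individual reflectors. The sketch below uses the forward, column-by-column recurrence of LAPACK's DLARFT for the real case, $T_{1:j-1,\,j} = -\tau_j\, T_{1:j-1,\,1:j-1} V_{:,1:j-1}^T v_j$ and $T_{jj} = \tau_j$, which yields $H_1 H_2 \cdots H_k = I - V T V^T$. GSL's recursive Elmroth and Gustavson algorithm may form $T$ differently; the function name and the explicit (unpacked) storage of $V$, with zeros above and ones on its diagonal, are assumptions of this example.

```c
#include <assert.h>
#include <stddef.h>

/* Sketch: build the k-by-k upper triangular factor T of the compact WY
 * form Q = H_0 H_1 ... H_{k-1} = I - V T V^T.  Column j of the m-by-k
 * row-major matrix v holds v_j explicitly (zero above row j, 1 at row j);
 * tau[j] is its scalar.  Forward recurrence as in LAPACK's DLARFT:
 *   T(0:j-1, j) = -tau_j * T(0:j-1, 0:j-1) * (V(:, 0:j-1)^T v_j)
 *   T(j, j)     =  tau_j                                              */
void form_wy_t_sketch(size_t m, size_t k, const double *v,
                      const double *tau, double *t)
{
    assert(m >= k);
    for (size_t j = 0; j < k; j++) {
        /* z = V(:, 0:j-1)^T v_j, kept temporarily in column j of T. */
        for (size_t i = 0; i < j; i++) {
            double s = 0.0;
            for (size_t r = 0; r < m; r++)
                s += v[r * k + i] * v[r * k + j];
            t[i * k + j] = s;
        }
        /* Column j := -tau_j * T(0:j-1, 0:j-1) * z.  T is upper
         * triangular, so row i only reads z[i..j-1]; going top-down,
         * z[i] is overwritten right after its last use. */
        for (size_t i = 0; i < j; i++) {
            double s = 0.0;
            for (size_t p = i; p < j; p++)
                s += t[i * k + p] * t[p * k + j];
            t[i * k + j] = -tau[j] * s;
        }
        t[j * k + j] = tau[j];
        for (size_t i = j + 1; i < k; i++)
            t[i * k + j] = 0.0;                 /* strict lower part */
    }
}
```

For two reflectors the recurrence reduces to $T_{12} = -\tau_1 \tau_2\, v_1^T v_2$, which can be checked by expanding $(I - \tau_1 v_1 v_1^T)(I - \tau_2 v_2 v_2^T)$.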

int gsl_linalg_QR_decomp_r(gsl_matrix *A, gsl_matrix *T)
int gsl_linalg_complex_QR_decomp_r(gsl_matrix_complex *A, gsl_matrix_complex *T)

These functions factor the $M$-by-$N$ matrix A into the $QR$ decomposition $A = Q R$ using the recursive Level 3 BLAS algorithm of Elmroth and Gustavson. On output the diagonal and upper triangular part of A contain the matrix $R$. The $N$-by-$N$ matrix T stores the upper triangular factor appearing in $Q$. The matrix $Q$ is given by $Q = I - V T V^H$, where the elements below the diagonal of A contain the columns of $V$ on output.

This algorithm requires $M \ge N$ and performs best for “tall-skinny” matrices, i.e. $M \gg N$.

int gsl_linalg_QR_solve_r(const gsl_matrix *QR, const gsl_matrix *T, const gsl_vector *b, gsl_vector *x)
int gsl_linalg_complex_QR_solve_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, const gsl_vector_complex *b, gsl_vector_complex *x)

These functions solve the square system $A x = b$ using the $QR$ decomposition of $A$ held in (QR, T). The least-squares solution for rectangular systems can be found using gsl_linalg_QR_lssolve_r() or gsl_linalg_complex_QR_lssolve_r().

-

int gsl_linalg_QR_lssolve_r(const gsl_matrix *QR, const gsl_matrix *T, const gsl_vector *b, gsl_vector *x, gsl_vector *work)¶

-

int gsl_linalg_complex_QR_lssolve_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, const gsl_vector_complex *b, gsl_vector_complex *x, gsl_vector_complex *work)¶

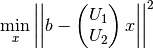

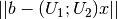

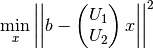

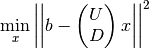

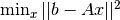

These functions find the least squares solution to the overdetermined system

, where the matrix

, where the matrix Ahas more rows than columns. The least squares solution minimizes the Euclidean norm of the residual, . The routine requires as input

the

. The routine requires as input

the  decomposition of

decomposition of  into (

into (QR,T) given bygsl_linalg_QR_decomp_r()orgsl_linalg_complex_QR_decomp_r().The parameter

xis of length .

The solution

.

The solution  is returned in the first

is returned in the first  rows of

rows of x, i.e.

x[0], x[1], ..., x[N-1]. The last rows

of

rows

of xcontain a vector whose norm is equal to the residual norm . This similar to the behavior of LAPACK DGELS.

Additional workspace of length

. This similar to the behavior of LAPACK DGELS.

Additional workspace of length  is required in

is required in work.
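The DGELS-style output can be illustrated with the Level 2 (tau-based) representation, which is simpler to write out than the (QR, T) form used by gsl_linalg_QR_lssolve_r(). The sketch below overwrites $b$ with $Q^T b$, back-substitutes the first $N$ entries, and leaves $c_2$ in the trailing $M - N$ entries. Real arithmetic, row-major storage, the packed Householder layout and the function name are assumptions of this example; it is an illustration, not GSL's implementation.

```c
#include <assert.h>
#include <stddef.h>

/* Sketch: DGELS-style least squares from a packed Householder QR of an
 * m-by-n row-major matrix (m >= n): R on and above the diagonal, v_j below
 * the diagonal of column j with implicit v_j[j] = 1, and
 * H_j = I - tau[j] v_j v_j^T.  On input x holds b (length m); on output
 * x[0..n-1] is the solution and x[n..m-1] holds c_2, the trailing part of
 * Q^T b, whose 2-norm equals the residual norm ||b - A x||. */
void qr_lssolve_sketch(size_t m, size_t n, const double *qr,
                       const double *tau, double *x)
{
    assert(m >= n);
    /* x := Q^T x = H_{n-1} ... H_0 x */
    for (size_t j = 0; j < n; j++) {
        double w = x[j];
        for (size_t i = j + 1; i < m; i++)
            w += qr[i * n + j] * x[i];
        w *= tau[j];
        x[j] -= w;
        for (size_t i = j + 1; i < m; i++)
            x[i] -= w * qr[i * n + j];
    }
    /* Back substitution R_1 x(0:n-1) = c_1; x(n:m-1) stays as c_2. */
    for (size_t i = n; i-- > 0;) {
        double s = x[i];
        for (size_t j = i + 1; j < n; j++)
            s -= qr[i * n + j] * x[j];
        assert(qr[i * n + i] != 0.0);   /* A must have full column rank */
        x[i] = s / qr[i * n + i];
    }
}
```

For $A = (3, 4)^T$, whose Householder factorization has $R = -5$, $v = (1, 0.5)$ and $\tau = 1.6$, and $b = (1, 2)$, the sketch returns x[0] = 0.44 and x[1] = 0.4; indeed $\|(1, 2) - 0.44\,(3, 4)\| = \|(-0.32, 0.24)\| = 0.4$.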

int gsl_linalg_QR_lssolvem_r(const gsl_matrix *QR, const gsl_matrix *T, const gsl_matrix *B, gsl_matrix *X, gsl_matrix *work)
int gsl_linalg_complex_QR_lssolvem_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, const gsl_matrix_complex *B, gsl_matrix_complex *X, gsl_matrix_complex *work)

These functions find the least squares solutions to the overdetermined systems

$$A x_k = b_k, \qquad 1 \le k \le \mathrm{nrhs}$$

where the matrix A has more rows than columns. The least squares solution minimizes the Euclidean norm of the residual, $\|b_k - A x_k\|$. The routine requires as input the $QR$ decomposition of $A$ into (QR, T) given by gsl_linalg_QR_decomp_r() or gsl_linalg_complex_QR_decomp_r(). The right hand side $b_k$ is provided in column $k$ of the input B, while the solution $x_k$ is stored in the first $N$ rows of column $k$ of the output X.

The parameters X and B are of size $M$-by-$\mathrm{nrhs}$. The last $M - N$ rows of X contain vectors whose norms are equal to the residual norms $\|b_k - A x_k\|$. This is similar to the behavior of LAPACK DGELS. Additional workspace of size $N$-by-$\mathrm{nrhs}$ is required in work.

int gsl_linalg_QR_QTvec_r(const gsl_matrix *QR, const gsl_matrix *T, gsl_vector *v, gsl_vector *work)
int gsl_linalg_complex_QR_QHvec_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, gsl_vector_complex *v, gsl_vector_complex *work)

These functions apply the matrix $Q^T$ (or $Q^H$) encoded in the decomposition (QR, T) to the vector v, storing the result $Q^T v$ (or $Q^H v$) in v. The matrix multiplication is carried out directly using the encoding of the Householder vectors without needing to form the full matrix $Q$. Additional workspace of size $N$ is required in work.

int gsl_linalg_QR_QTmat_r(const gsl_matrix *QR, const gsl_matrix *T, gsl_matrix *B, gsl_matrix *work)
int gsl_linalg_complex_QR_QHmat_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, gsl_matrix_complex *B, gsl_matrix_complex *work)

These functions apply the matrix $Q^T$ (or $Q^H$) encoded in the decomposition (QR, T) to the $M$-by-$K$ matrix B, storing the result $Q^T B$ (or $Q^H B$) in B. The matrix multiplication is carried out directly using the encoding of the Householder vectors without needing to form the full matrix $Q$. Additional workspace of size $N$-by-$K$ is required in work.

int gsl_linalg_QR_unpack_r(const gsl_matrix *QR, const gsl_matrix *T, gsl_matrix *Q, gsl_matrix *R)
int gsl_linalg_complex_QR_unpack_r(const gsl_matrix_complex *QR, const gsl_matrix_complex *T, gsl_matrix_complex *Q, gsl_matrix_complex *R)

These functions unpack the encoded $QR$ decomposition (QR, T) as output from gsl_linalg_QR_decomp_r() or gsl_linalg_complex_QR_decomp_r() into the matrices Q and R, where Q is $M$-by-$M$ and R is $N$-by-$N$. Note that the full $R$ matrix is $M$-by-$N$; however, the lower trapezoidal portion is zero, so only the upper triangular factor is stored.

-

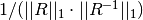

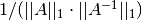

int gsl_linalg_QR_rcond(const gsl_matrix *QR, double *rcond, gsl_vector *work)¶

This function estimates the reciprocal condition number (using the 1-norm) of the

factor,

stored in the upper triangle of

factor,

stored in the upper triangle of QR. The reciprocal condition number estimate, defined as , is stored in

, is stored in rcond. Additional workspace of size is required in

is required in work.

Level 2 Interface

The functions below are for the slower Level 2 interface to the QR decomposition. It is recommended to use these functions only for $M < N$, since the Level 3 interface above performs much faster for $M \ge N$.

int gsl_linalg_QR_decomp(gsl_matrix *A, gsl_vector *tau)
int gsl_linalg_complex_QR_decomp(gsl_matrix_complex *A, gsl_vector_complex *tau)

These functions factor the $M$-by-$N$ matrix A into the $QR$ decomposition $A = Q R$. On output the diagonal and upper triangular part of the input matrix contain the matrix $R$. The vector tau and the columns of the lower triangular part of the matrix A contain the Householder coefficients and Householder vectors which encode the orthogonal matrix Q. The vector tau must be of length $k = \min(M,N)$. The matrix $Q$ is related to these components by the product of $k$ reflector matrices, $Q = H_1 H_2 \cdots H_k$, where $H_i = I - \tau_i v_i v_i^T$ (with $v_i^H$ in place of $v_i^T$ in the complex case) and $v_i$ is the Householder vector $v_i = (0, \ldots, 1, A(i+1,i), A(i+2,i), \ldots, A(M,i))$. This is the same storage scheme as used by LAPACK.

The algorithm used to perform the decomposition is Householder QR (Golub & Van Loan, “Matrix Computations”, Algorithm 5.2.1).

int gsl_linalg_QR_solve(const gsl_matrix *QR, const gsl_vector *tau, const gsl_vector *b, gsl_vector *x)
int gsl_linalg_complex_QR_solve(const gsl_matrix_complex *QR, const gsl_vector_complex *tau, const gsl_vector_complex *b, gsl_vector_complex *x)

These functions solve the square system $A x = b$ using the $QR$ decomposition of $A$ held in (QR, tau). The least-squares solution for rectangular systems can be found using gsl_linalg_QR_lssolve().

int gsl_linalg_QR_svx(const gsl_matrix *QR, const gsl_vector *tau, gsl_vector *x)
int gsl_linalg_complex_QR_svx(const gsl_matrix_complex *QR, const gsl_vector_complex *tau, gsl_vector_complex *x)

These functions solve the square system $A x = b$ in-place using the $QR$ decomposition of $A$ held in (QR, tau). On input x should contain the right-hand side $b$, which is replaced by the solution on output.

int gsl_linalg_QR_lssolve(const gsl_matrix *QR, const gsl_vector *tau, const gsl_vector *b, gsl_vector *x, gsl_vector *residual)
int gsl_linalg_complex_QR_lssolve(const gsl_matrix_complex *QR, const gsl_vector_complex *tau, const gsl_vector_complex *b, gsl_vector_complex *x, gsl_vector_complex *residual)

These functions find the least squares solution to the overdetermined system $A x = b$, where the matrix A has more rows than columns. The least squares solution minimizes the Euclidean norm of the residual, $\|A x - b\|$. The routine requires as input the $QR$ decomposition of $A$ into (QR, tau) given by gsl_linalg_QR_decomp() or gsl_linalg_complex_QR_decomp(). The solution is returned in x. The residual is computed as a by-product and stored in residual.

int gsl_linalg_QR_QTvec(const gsl_matrix *QR, const gsl_vector *tau, gsl_vector *v)
int gsl_linalg_complex_QR_QHvec(const gsl_matrix_complex *QR, const gsl_vector_complex *tau, gsl_vector_complex *v)

These functions apply the matrix $Q^T$ (or $Q^H$) encoded in the decomposition (QR, tau) to the vector v, storing the result $Q^T v$ (or $Q^H v$) in v. The matrix multiplication is carried out directly using the encoding of the Householder vectors without needing to form the full matrix $Q^T$ (or $Q^H$).

int gsl_linalg_QR_Qvec(const gsl_matrix *QR, const gsl_vector *tau, gsl_vector *v)
int gsl_linalg_complex_QR_Qvec(const gsl_matrix_complex *QR, const gsl_vector_complex *tau, gsl_vector_complex *v)

These functions apply the matrix $Q$ encoded in the decomposition (QR, tau) to the vector v, storing the result $Q v$ in v. The matrix multiplication is carried out directly using the encoding of the Householder vectors without needing to form the full matrix $Q$.

int gsl_linalg_QR_QTmat(const gsl_matrix *QR, const gsl_vector *tau, gsl_matrix *B)

This function applies the matrix $Q^T$ encoded in the decomposition (QR, tau) to the $M$-by-$K$ matrix B, storing the result $Q^T B$ in B. The matrix multiplication is carried out directly using the encoding of the Householder vectors without needing to form the full matrix $Q^T$.

int gsl_linalg_QR_Rsolve(const gsl_matrix *QR, const gsl_vector *b, gsl_vector *x)

This function solves the triangular system $R x = b$ for x. It may be useful if the product $b' = Q^T b$ has already been computed using gsl_linalg_QR_QTvec().

int gsl_linalg_QR_Rsvx(const gsl_matrix *QR, gsl_vector *x)

This function solves the triangular system $R x = b$ for x in-place. On input x should contain the right-hand side $b$ and is replaced by the solution on output. This function may be useful if the product $b' = Q^T b$ has already been computed using gsl_linalg_QR_QTvec().

int gsl_linalg_QR_unpack(const gsl_matrix *QR, const gsl_vector *tau, gsl_matrix *Q, gsl_matrix *R)

This function unpacks the encoded $QR$ decomposition (QR, tau) into the matrices Q and R, where Q is $M$-by-$M$ and R is $M$-by-$N$.

int gsl_linalg_QR_QRsolve(gsl_matrix *Q, gsl_matrix *R, const gsl_vector *b, gsl_vector *x)

This function solves the system $R x = Q^T b$ for x. It can be used when the $QR$ decomposition of a matrix is available in unpacked form as (Q, R).
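With $Q$ and $R$ stored explicitly, the solve is a matrix-vector product $c = Q^T b$ followed by back-substitution $R x = c$. A plain-C sketch for the square case, assuming row-major $N$-by-$N$ arrays and a hypothetical function name:

```c
#include <assert.h>
#include <stddef.h>

/* Sketch: solve A x = b when A = Q R is available in unpacked form, with
 * Q (n-by-n, orthogonal) and R (n-by-n, upper triangular), row-major.
 * Step 1 forms c = Q^T b explicitly; step 2 back-substitutes R x = c. */
void qrsolve_unpacked_sketch(size_t n, const double *q, const double *r,
                             const double *b, double *x)
{
    for (size_t i = 0; i < n; i++) {
        double s = 0.0;
        for (size_t k = 0; k < n; k++)
            s += q[k * n + i] * b[k];     /* (Q^T b)_i = sum_k Q(k,i) b_k */
        x[i] = s;
    }
    for (size_t i = n; i-- > 0;) {
        double s = x[i];
        for (size_t j = i + 1; j < n; j++)
            s -= r[i * n + j] * x[j];
        assert(r[i * n + i] != 0.0);      /* R must be nonsingular */
        x[i] = s / r[i * n + i];
    }
}
```

With $Q = \begin{pmatrix} 0.6 & -0.8 \\ 0.8 & 0.6 \end{pmatrix}$ and $R = \begin{pmatrix} 5 & 1 \\ 0 & 2 \end{pmatrix}$ (so $A = \begin{pmatrix} 3 & -1 \\ 4 & 2 \end{pmatrix}$) and $b = (2, 6)$, the first step gives $Q^T b = (6, 2)$ and the sketch returns $x = (1, 1)$.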

-

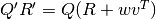

int gsl_linalg_QR_update(gsl_matrix *Q, gsl_matrix *R, gsl_vector *w, const gsl_vector *v)¶

This function performs a rank-1 update

of the

of the  decomposition (

decomposition (Q,R). The update is given by where the output matrices

where the output matrices  and

and  are also

orthogonal and right triangular. Note that

are also

orthogonal and right triangular. Note that wis destroyed by the update.

int gsl_linalg_R_solve(const gsl_matrix *R, const gsl_vector *b, gsl_vector *x)

This function solves the triangular system $R x = b$ for the $N$-by-$N$ matrix R.

int gsl_linalg_R_svx(const gsl_matrix *R, gsl_vector *x)

This function solves the triangular system $R x = b$ in-place. On input x should contain the right-hand side $b$, which is replaced by the solution on output.

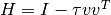

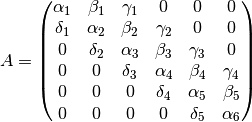

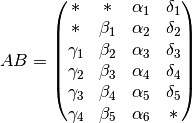

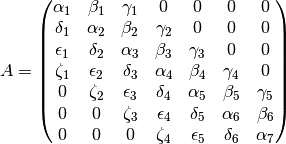

Triangle on Top of Rectangle¶

This section provides routines for computing the  decomposition of the

specialized matrix

decomposition of the

specialized matrix

where  is an

is an  -by-

-by- upper triangular matrix, and

upper triangular matrix, and  is

an

is

an  -by-

-by- dense matrix. This type of matrix arises, for example,

in the sequential TSQR algorithm. The Elmroth and Gustavson algorithm is used to

efficiently factor this matrix. Due to the upper triangular factor, the

dense matrix. This type of matrix arises, for example,

in the sequential TSQR algorithm. The Elmroth and Gustavson algorithm is used to

efficiently factor this matrix. Due to the upper triangular factor, the  matrix takes the form

matrix takes the form

with

and  is dense and of the same dimensions as

is dense and of the same dimensions as  .

.

int gsl_linalg_QR_UR_decomp(gsl_matrix *U, gsl_matrix *A, gsl_matrix *T)

This function computes the $QR$ decomposition of the matrix $\begin{pmatrix} U \\ A \end{pmatrix}$, where $U$ is $N$-by-$N$ upper triangular and $A$ is $M$-by-$N$ dense. On output, U is replaced by the $R$ factor, and A is replaced by $Y$. The $N$-by-$N$ upper triangular block reflector is stored in T on output.

int gsl_linalg_QR_UR_lssolve(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, const gsl_vector *b, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U \\ A \end{pmatrix} x \right\|^2$$

where $U$ is an $N$-by-$N$ upper triangular matrix, and $A$ is an $M$-by-$N$ dense matrix. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U \\ A \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UR_decomp(). The parameter x is of length $N + M$.

The solution $x$ is returned in the first $N$ rows of x, i.e. in x[0], x[1], ..., x[N-1]. The last $M$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U \\ A \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS. Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UR_lssvx(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U \\ A \end{pmatrix} x \right\|^2$$

in-place, where $U$ is an $N$-by-$N$ upper triangular matrix, and $A$ is an $M$-by-$N$ dense matrix. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U \\ A \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UR_decomp(). The parameter x is of length $N + M$ and contains the right hand side vector $b$ on input.

The solution $x$ is returned in the first $N$ rows of x, i.e. in x[0], x[1], ..., x[N-1]. The last $M$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U \\ A \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS. Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UR_QTvec(const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *b, gsl_vector *work)

This function computes $Q^T b$ using the decomposition (Y, T) previously computed by gsl_linalg_QR_UR_decomp(). On input, b contains the length $N + M$ vector $b$, and on output it will contain $Q^T b$. Additional workspace of length $N$ is required in work.

Triangle on Top of Triangle

This section provides routines for computing the $QR$ decomposition of the specialized matrix

$$\begin{pmatrix} U_1 \\ U_2 \end{pmatrix} = Q R$$

where $U_1, U_2$ are $N$-by-$N$ upper triangular matrices. The Elmroth and Gustavson algorithm is used to efficiently factor this matrix. The $Q$ matrix takes the form

$$Q = I - V T V^T$$

with

$$V = \begin{pmatrix} I \\ Y \end{pmatrix}$$

and $Y$ is $N$-by-$N$ upper triangular.

int gsl_linalg_QR_UU_decomp(gsl_matrix *U1, gsl_matrix *U2, gsl_matrix *T)

This function computes the $QR$ decomposition of the matrix $\begin{pmatrix} U_1 \\ U_2 \end{pmatrix}$, where $U_1, U_2$ are $N$-by-$N$ upper triangular. On output, U1 is replaced by the $R$ factor, and U2 is replaced by $Y$. The $N$-by-$N$ upper triangular block reflector is stored in T on output.

int gsl_linalg_QR_UU_lssolve(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, const gsl_vector *b, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U_1 \\ U_2 \end{pmatrix} x \right\|^2$$

where $U_1, U_2$ are $N$-by-$N$ upper triangular matrices. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U_1 \\ U_2 \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UU_decomp(). The parameter x is of length $2N$.

The solution $x$ is returned in the first $N$ rows of x, i.e. in x[0], x[1], ..., x[N-1]. The last $N$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U_1 \\ U_2 \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS. Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UU_lssvx(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U_1 \\ U_2 \end{pmatrix} x \right\|^2$$

in-place, where $U_1, U_2$ are $N$-by-$N$ upper triangular matrices. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U_1 \\ U_2 \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UU_decomp(). The parameter x is of length $2N$ and contains the right hand side vector $b$ on input.

The solution $x$ is returned in the first $N$ rows of x, i.e. in x[0], x[1], ..., x[N-1]. The last $N$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U_1 \\ U_2 \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS. Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UU_QTvec(const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *b, gsl_vector *work)

This function computes $Q^T b$ using the decomposition (Y, T) previously computed by gsl_linalg_QR_UU_decomp(). On input, b contains the vector $b$, and on output it will contain $Q^T b$. Additional workspace of length $N$ is required in work.

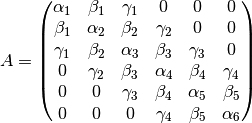

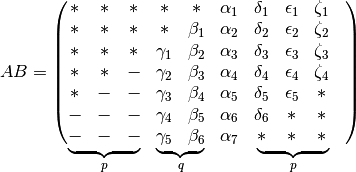

Triangle on Top of Trapezoidal

This section provides routines for computing the $QR$ decomposition of the specialized matrix

$$\begin{pmatrix} U \\ A \end{pmatrix} = Q R$$

where $U$ is an $N$-by-$N$ upper triangular matrix, and $A$ is an $M$-by-$N$ upper trapezoidal matrix with $M \ge N$. $A$ has the structure,

$$A = \begin{pmatrix} A_d \\ A_u \end{pmatrix}$$

where $A_d$ is $(M-N)$-by-$N$ dense, and $A_u$ is $N$-by-$N$ upper triangular.

The Elmroth and Gustavson algorithm is used to efficiently factor this matrix.

The $Q$ matrix takes the form

$$Q = I - V T V^T$$

with

$$V = \begin{pmatrix} I \\ Y \end{pmatrix}$$

and $Y$ is upper trapezoidal and of the same dimensions as $A$.

int gsl_linalg_QR_UZ_decomp(gsl_matrix *U, gsl_matrix *A, gsl_matrix *T)

This function computes the $QR$ decomposition of the matrix $\begin{pmatrix} U \\ A \end{pmatrix}$, where $U$ is $N$-by-$N$ upper triangular and $A$ is $M$-by-$N$ upper trapezoidal. On output, $U$ is replaced by the $R$ factor, and $A$ is replaced by $Y$. The $N$-by-$N$ upper triangular block reflector is stored in T on output.

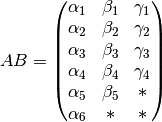

Triangle on Top of Diagonal

This section provides routines for computing the $QR$ decomposition of the specialized matrix

$$\begin{pmatrix} U \\ D \end{pmatrix} = Q R$$

where $U$ is an $N$-by-$N$ upper triangular matrix and $D$ is an $N$-by-$N$ diagonal matrix. This type of matrix arises in regularized least squares problems.

The Elmroth and Gustavson algorithm is used to efficiently factor this matrix.

The $Q$ matrix takes the form

$$Q = I - V T V^T$$

with

$$V = \begin{pmatrix} I \\ Y \end{pmatrix}$$

and $Y$ is $N$-by-$N$ upper triangular.

int gsl_linalg_QR_UD_decomp(gsl_matrix *U, const gsl_vector *D, gsl_matrix *Y, gsl_matrix *T)

This function computes the $QR$ decomposition of the matrix $\begin{pmatrix} U \\ D \end{pmatrix}$, where $U$ is $N$-by-$N$ upper triangular and $D$ is $N$-by-$N$ diagonal. On output, U is replaced by the $R$ factor and $Y$ is stored in Y. The $N$-by-$N$ upper triangular block reflector is stored in T on output.

int gsl_linalg_QR_UD_lssolve(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, const gsl_vector *b, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U \\ D \end{pmatrix} x \right\|^2$$

where $U$ is $N$-by-$N$ upper triangular and $D$ is $N$-by-$N$ diagonal. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U \\ D \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UD_decomp(). The parameter x is of length $2N$.

The solution $x$ is returned in the first $N$ rows of x, i.e. x[0], x[1], ..., x[N-1]. The last $N$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U \\ D \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS.

Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UD_lssvx(const gsl_matrix *R, const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *x, gsl_vector *work)

This function finds the least squares solution to the overdetermined system,

$$\min_x \left\| b - \begin{pmatrix} U \\ D \end{pmatrix} x \right\|^2$$

in-place, where $U$ is $N$-by-$N$ upper triangular and $D$ is $N$-by-$N$ diagonal. The routine requires as input the $QR$ decomposition of $\begin{pmatrix} U \\ D \end{pmatrix}$ into (R, Y, T) given by gsl_linalg_QR_UD_decomp(). The parameter x is of length $2N$ and contains the right hand side vector $b$ on input.

The solution $x$ is returned in the first $N$ rows of x, i.e. x[0], x[1], ..., x[N-1]. The last $N$ rows of x contain a vector whose norm is equal to the residual norm $\left\| b - \begin{pmatrix} U \\ D \end{pmatrix} x \right\|$. This is similar to the behavior of LAPACK DGELS.

Additional workspace of length $N$ is required in work.

int gsl_linalg_QR_UD_QTvec(const gsl_matrix *Y, const gsl_matrix *T, gsl_vector *b, gsl_vector *work)

This function computes $Q^T b$ using the decomposition (Y, T) previously computed by gsl_linalg_QR_UD_decomp(). On input, b contains the vector $b$, and on output it will contain $Q^T b$. Additional workspace of length $N$ is required in work.

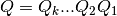

QR Decomposition with Column Pivoting¶

The  decomposition of an

decomposition of an  -by-

-by- matrix

matrix  can be extended to the rank deficient case by introducing a column permutation

can be extended to the rank deficient case by introducing a column permutation  ,

,

The first  columns of

columns of  form an orthonormal basis

for the range of

form an orthonormal basis

for the range of  for a matrix with column rank

for a matrix with column rank  . This

decomposition can also be used to convert the square linear system

. This

decomposition can also be used to convert the square linear system  into the triangular system

into the triangular system  , which can be

solved by back-substitution and permutation. We denote the

, which can be

solved by back-substitution and permutation. We denote the  decomposition with column pivoting by

decomposition with column pivoting by  since

since  .

When

.

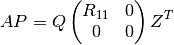

When  is rank deficient with

is rank deficient with  , the matrix

, the matrix

can be partitioned as

can be partitioned as

where  is

is  -by-

-by- and nonsingular. In this case,

a basic least squares solution for the overdetermined system

and nonsingular. In this case,

a basic least squares solution for the overdetermined system  can be obtained as

can be obtained as

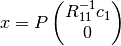

where  consists of the first

consists of the first  elements of

elements of  .

This basic solution is not guaranteed to be the minimum norm solution unless

.

This basic solution is not guaranteed to be the minimum norm solution unless

(see Complete Orthogonal Decomposition).

(see Complete Orthogonal Decomposition).

int gsl_linalg_QRPT_decomp(gsl_matrix *A, gsl_vector *tau, gsl_permutation *p, int *signum, gsl_vector *norm)

This function factorizes the $M$-by-$N$ matrix A into the $QRP^T$ decomposition $A = Q R P^T$. On output the diagonal and upper triangular part of the input matrix contain the matrix $R$. The permutation matrix $P$ is stored in the permutation p. The sign of the permutation is given by signum. It has the value $(-1)^n$, where $n$ is the number of interchanges in the permutation. The vector tau and the columns of the lower triangular part of the matrix A contain the Householder coefficients and vectors which encode the orthogonal matrix Q. The vector tau must be of length $k = \min(M, N)$. The matrix $Q$ is related to these components by, $Q = Q_1 Q_2 \cdots Q_k$ where $Q_i = I - \tau_i v_i v_i^T$ and $v_i$ is the Householder vector

$$v_i = (0, \ldots, 1, A(i+1,i), A(i+2,i), \ldots, A(M,i))^T$$

This is the same storage scheme as used by LAPACK. The vector norm is a workspace of length N used for column pivoting.

The algorithm used to perform the decomposition is Householder QR with column pivoting (Golub & Van Loan, “Matrix Computations”, Algorithm 5.4.1).

int gsl_linalg_QRPT_decomp2(const gsl_matrix *A, gsl_matrix *q, gsl_matrix *r, gsl_vector *tau, gsl_permutation *p, int *signum, gsl_vector *norm)

This function factorizes the matrix A into the decomposition $A = Q R P^T$ without modifying A itself and storing the output in the separate matrices q and r.

int gsl_linalg_QRPT_solve(const gsl_matrix *QR, const gsl_vector *tau, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x)

This function solves the square system $A x = b$ using the $QRP^T$ decomposition of $A$ held in (QR, tau, p) which must have been computed previously by gsl_linalg_QRPT_decomp().

int gsl_linalg_QRPT_svx(const gsl_matrix *QR, const gsl_vector *tau, const gsl_permutation *p, gsl_vector *x)

This function solves the square system $A x = b$ in-place using the $QRP^T$ decomposition of $A$ held in (QR, tau, p). On input x should contain the right-hand side $b$, which is replaced by the solution on output.

int gsl_linalg_QRPT_lssolve(const gsl_matrix *QR, const gsl_vector *tau, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x, gsl_vector *residual)

This function finds the least squares solution to the overdetermined system $A x = b$ where the matrix A has more rows than columns and is assumed to have full rank. The least squares solution minimizes the Euclidean norm of the residual, $\|b - A x\|$. The routine requires as input the $QRP^T$ decomposition of $A$ into (QR, tau, p) given by gsl_linalg_QRPT_decomp(). The solution is returned in x. The residual is computed as a by-product and stored in residual. For rank deficient matrices, gsl_linalg_QRPT_lssolve2() should be used instead.

int gsl_linalg_QRPT_lssolve2(const gsl_matrix *QR, const gsl_vector *tau, const gsl_permutation *p, const gsl_vector *b, const size_t rank, gsl_vector *x, gsl_vector *residual)

This function finds the least squares solution to the overdetermined system $A x = b$ where the matrix A has more rows than columns and has rank given by the input rank. If the user does not know the rank of $A$, the routine gsl_linalg_QRPT_rank() can be called to estimate it. The least squares solution is the so-called “basic” solution discussed above and may not be the minimum norm solution. The routine requires as input the $QRP^T$ decomposition of $A$ into (QR, tau, p) given by gsl_linalg_QRPT_decomp(). The solution is returned in x. The residual is computed as a by-product and stored in residual.

int gsl_linalg_QRPT_QRsolve(const gsl_matrix *Q, const gsl_matrix *R, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x)

This function solves the square system $R P^T x = Q^T b$ for x. It can be used when the $QR$ decomposition of a matrix is available in unpacked form as (Q, R).

int gsl_linalg_QRPT_update(gsl_matrix *Q, gsl_matrix *R, const gsl_permutation *p, gsl_vector *w, const gsl_vector *v)

This function performs a rank-1 update $w v^T$ of the $QRP^T$ decomposition (Q, R, p). The update is given by $Q' R' = Q (R + w v^T P)$ where the output matrices $Q'$ and $R'$ are also orthogonal and right triangular. Note that w is destroyed by the update. The permutation p is not changed.

int gsl_linalg_QRPT_Rsolve(const gsl_matrix *QR, const gsl_permutation *p, const gsl_vector *b, gsl_vector *x)

This function solves the triangular system $R P^T x = b$ for the $N$-by-$N$ matrix $R$ contained in QR.

int gsl_linalg_QRPT_Rsvx(const gsl_matrix *QR, const gsl_permutation *p, gsl_vector *x)

This function solves the triangular system $R P^T x = b$ in-place for the $N$-by-$N$ matrix $R$ contained in QR. On input x should contain the right-hand side $b$, which is replaced by the solution on output.

size_t gsl_linalg_QRPT_rank(const gsl_matrix *QR, const double tol)

This function estimates the rank of the triangular matrix $R$ contained in QR. The algorithm simply counts the number of diagonal elements of $R$ whose absolute value is greater than the specified tolerance tol. If the input tol is negative, a default value of $20 (M + N)\, \epsilon \max_i |R_{ii}|$ is used, where $\epsilon$ is the double precision machine epsilon.

-

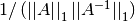

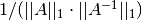

int gsl_linalg_QRPT_rcond(const gsl_matrix *QR, double *rcond, gsl_vector *work)¶

This function estimates the reciprocal condition number (using the 1-norm) of the

factor,

stored in the upper triangle of

factor,

stored in the upper triangle of QR. The reciprocal condition number estimate, defined as , is stored in

, is stored in rcond. Additional workspace of size is required in

is required in work.

LQ Decomposition¶

A general rectangular  -by-

-by- matrix

matrix  has a

has a

decomposition into the product of a lower trapezoidal

decomposition into the product of a lower trapezoidal

-by-

-by- matrix

matrix  and an orthogonal

and an orthogonal

-by-

-by- square matrix

square matrix  :

:

If  , then

, then  can be written as

can be written as  where

where

is

is  -by-

-by- lower triangular,

and

lower triangular,

and

where  consists of the first

consists of the first  rows of

rows of  , and

, and  contains the remaining

contains the remaining  rows. The

rows. The  factorization of

factorization of  is essentially the same as the QR factorization of

is essentially the same as the QR factorization of  .

.

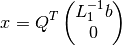

The  factorization may be used to find the minimum norm solution of

an underdetermined system of equations

factorization may be used to find the minimum norm solution of

an underdetermined system of equations  , where

, where  is

is

-by-

-by- and

and  . The solution is

. The solution is

int gsl_linalg_LQ_decomp(gsl_matrix *A, gsl_vector *tau)

This function factorizes the $M$-by-$N$ matrix A into the $LQ$ decomposition $A = L Q$. On output the diagonal and lower trapezoidal part of the input matrix contain the matrix $L$. The vector tau and the elements above the diagonal of the matrix A contain the Householder coefficients and Householder vectors which encode the orthogonal matrix Q. The vector tau must be of length $k = \min(M, N)$. The matrix $Q$ is related to these components by, $Q = Q_k \cdots Q_2 Q_1$ where $Q_i = I - \tau_i v_i v_i^T$ and $v_i$ is the Householder vector $v_i = (0, \ldots, 1, A(i,i+1), A(i,i+2), \ldots, A(i,N))^T$.

This is the same storage scheme as used by LAPACK.

int gsl_linalg_LQ_lssolve(const gsl_matrix *LQ, const gsl_vector *tau, const gsl_vector *b, gsl_vector *x, gsl_vector *residual)

This function finds the minimum norm least squares solution to the underdetermined system $A x = b$, where the $M$-by-$N$ matrix A has $M \le N$.

The routine requires as input the $LQ$ decomposition of $A$ into (LQ, tau) given by gsl_linalg_LQ_decomp(). The solution is returned in x. The residual, $b - A x$, is computed as a by-product and stored in residual.

int gsl_linalg_LQ_unpack(const gsl_matrix *LQ, const gsl_vector *tau, gsl_matrix *Q, gsl_matrix *L)

This function unpacks the encoded $LQ$ decomposition (LQ, tau) into the matrices Q and L, where Q is $N$-by-$N$ and L is $M$-by-$N$.

int gsl_linalg_LQ_QTvec(const gsl_matrix *LQ, const gsl_vector *tau, gsl_vector *v)

This function applies $Q^T$ to the vector v, storing the result $Q^T v$ in v on output.

QL Decomposition

A general rectangular $M$-by-$N$ matrix $A$ has a $QL$ decomposition into the product of an orthogonal $M$-by-$M$ square matrix $Q$ (where $Q^T Q = I$) and an $M$-by-$N$ left-triangular matrix $L$.

When $M \ge N$, the decomposition is given by

$$A = Q \begin{pmatrix} 0 \\ L_1 \end{pmatrix}$$

where $L_1$ is $N$-by-$N$ lower triangular. When $M < N$, the decomposition is given by

$$A = Q \begin{pmatrix} L_1 & L_2 \end{pmatrix}$$

where $L_1$ is a dense $M$-by-$(N-M)$ matrix and $L_2$ is a lower triangular $M$-by-$M$ matrix.

int gsl_linalg_QL_decomp(gsl_matrix *A, gsl_vector *tau)

This function factorizes the $M$-by-$N$ matrix A into the $QL$ decomposition $A = Q L$. The vector tau must be of length $N$ and contains the Householder coefficients on output. The matrix $Q$ is stored in packed form in A on output, using the same storage scheme as LAPACK.

int gsl_linalg_QL_unpack(const gsl_matrix *QL, const gsl_vector *tau, gsl_matrix *Q, gsl_matrix *L)

This function unpacks the encoded $QL$ decomposition (QL, tau) into the matrices Q and L, where Q is $M$-by-$M$ and L is $M$-by-$N$.

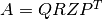

Complete Orthogonal Decomposition¶

The complete orthogonal decomposition of a  -by-

-by- matrix

matrix

is a generalization of the QR decomposition with column pivoting, given by

is a generalization of the QR decomposition with column pivoting, given by

where  is a

is a  -by-

-by- permutation matrix,

permutation matrix,

is

is  -by-

-by- orthogonal,

orthogonal,  is

is

-by-

-by- upper triangular, with

upper triangular, with  ,

and

,

and  is

is  -by-

-by- orthogonal. If

orthogonal. If  has full rank, then

has full rank, then  ,

,  and this reduces to the

QR decomposition with column pivoting.

and this reduces to the

QR decomposition with column pivoting.

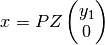

For a rank deficient least squares problem,  , the solution vector

, the solution vector

is not unique. However if we further require that

is not unique. However if we further require that  is minimized,

then the complete orthogonal decomposition gives us the ability to compute

the unique minimum norm solution, which is given by

is minimized,

then the complete orthogonal decomposition gives us the ability to compute

the unique minimum norm solution, which is given by

and the vector  is the first

is the first  elements of

elements of  .

.

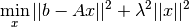

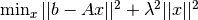

The COD also enables a straightforward solution of regularized least squares problems in Tikhonov standard form, written as

where  is a regularization parameter which represents a tradeoff between

minimizing the residual norm

is a regularization parameter which represents a tradeoff between

minimizing the residual norm  and the solution norm

and the solution norm  . For this system,

the solution is given by

. For this system,

the solution is given by

where  is a vector of length

is a vector of length  which is found by solving

which is found by solving

and  is defined above. The equation above can be solved efficiently for different

values of

is defined above. The equation above can be solved efficiently for different

values of  using QR factorizations of the left hand side matrix.

using QR factorizations of the left hand side matrix.

int gsl_linalg_COD_decomp(gsl_matrix *A, gsl_vector *tau_Q, gsl_vector *tau_Z, gsl_permutation *p, size_t *rank, gsl_vector *work)

int gsl_linalg_COD_decomp_e(gsl_matrix *A, gsl_vector *tau_Q, gsl_vector *tau_Z, gsl_permutation *p, double tol, size_t *rank, gsl_vector *work)

These functions factor the $M$-by-$N$ matrix A into the decomposition $A = Q R Z^T P^T$, where $R$ is the block matrix containing $R_{11}$ shown above. The rank of A is computed as the number of diagonal elements of $R$ whose absolute value is greater than the tolerance tol and output in rank. If tol is not specified, a default value is used (see gsl_linalg_QRPT_rank()). On output, the permutation matrix $P$ is stored in p. The matrix $R_{11}$ is stored in the upper rank-by-rank block of A. The matrices $Q$ and $Z$ are encoded in packed storage in A on output. The vectors tau_Q and tau_Z contain the Householder scalars corresponding to the matrices $Q$ and $Z$ respectively and must be of length $k = \min(M, N)$. The vector work is additional workspace of length $N$.

-

int gsl_linalg_COD_lssolve(const gsl_matrix *QRZT, const gsl_vector *tau_Q, const gsl_vector *tau_Z, const gsl_permutation *p, const size_t rank, const gsl_vector *b, gsl_vector *x, gsl_vector *residual)¶

This function finds the unique minimum norm least squares solution to the overdetermined system

where the matrix

where the matrix Ahas more rows than columns. The least squares solution minimizes the Euclidean norm of the residual, as well as the norm of the solution

as well as the norm of the solution  . The routine requires as input

the

. The routine requires as input

the  decomposition of

decomposition of  into (

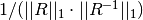

into (QRZT,tau_Q,tau_Z,p,rank) given bygsl_linalg_COD_decomp(). The solution is returned inx. The residual, , is computed as a by-product and stored in

, is computed as a by-product and stored in residual.

int gsl_linalg_COD_lssolve2(const double lambda, const gsl_matrix *QRZT, const gsl_vector *tau_Q, const gsl_vector *tau_Z, const gsl_permutation *p, const size_t rank, const gsl_vector *b, gsl_vector *x, gsl_vector *residual, gsl_matrix *S, gsl_vector *work)

This function finds the solution to the regularized least squares problem in Tikhonov standard form, $\min_x \|b - A x\|^2 + \lambda^2 \|x\|^2$. The routine requires as input the $QRZ^T$ decomposition of $A$ into (QRZT, tau_Q, tau_Z, p, rank) given by gsl_linalg_COD_decomp(). The parameter $\lambda$ is supplied in lambda. The solution is returned in x. The residual, $b - A x$, is stored in residual on output. S is additional workspace of size rank-by-rank. work is additional workspace of length rank.

int gsl_linalg_COD_unpack(const gsl_matrix *QRZT, const gsl_vector *tau_Q, const gsl_vector *tau_Z, const size_t rank, gsl_matrix *Q, gsl_matrix *R, gsl_matrix *Z)

This function unpacks the encoded $QRZ^T$ decomposition (QRZT, tau_Q, tau_Z, rank) into the matrices Q, R, and Z, where Q is $M$-by-$M$, R is $M$-by-$N$, and Z is $N$-by-$N$.

int gsl_linalg_COD_matZ(const gsl_matrix *QRZT, const gsl_vector *tau_Z, const size_t rank, gsl_matrix *A, gsl_vector *work)

This function multiplies the input matrix A on the right by Z, using the encoded $QRZ^T$ decomposition (QRZT, tau_Z, rank). A must have $N$ columns but may have any number of rows. Additional workspace of length $M$ is provided in work.

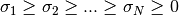

Singular Value Decomposition

A general rectangular $M$-by-$N$ matrix $A$ has a singular value decomposition (SVD) into the product of an $M$-by-$N$ orthogonal matrix $U$, an $N$-by-$N$ diagonal matrix of singular values $S$ and the transpose of an $N$-by-$N$ orthogonal square matrix $V$,

$$A = U S V^T$$

The singular values $\sigma_i = S_{ii}$ are all non-negative and are generally chosen to form a non-increasing sequence

$$\sigma_1 \ge \sigma_2 \ge \cdots \ge \sigma_N \ge 0$$

The singular value decomposition of a matrix has many practical uses. The condition number of the matrix is given by the ratio of the largest singular value to the smallest singular value. The presence of a zero singular value indicates that the matrix is singular. The number of non-zero singular values indicates the rank of the matrix. In practice singular value decomposition of a rank-deficient matrix will not produce exact zeroes for singular values, due to finite numerical precision. Small singular values should be edited by choosing a suitable tolerance.

For a rank-deficient matrix, the null space of $A$ is given by the columns of $V$ corresponding to the zero singular values. Similarly, the range of $A$ is given by columns of $U$ corresponding to the non-zero singular values.

Note that the routines here compute the “thin” version of the SVD with $U$ as an $M$-by-$N$ orthogonal matrix. This allows in-place computation and is the most commonly-used form in practice. Mathematically, the “full” SVD is defined with $U$ as an $M$-by-$M$ orthogonal matrix and $S$ as an $M$-by-$N$ diagonal matrix (with additional rows of zeros).

int gsl_linalg_SV_decomp(gsl_matrix *A, gsl_matrix *V, gsl_vector *S, gsl_vector *work)

This function factorizes the $M$-by-$N$ matrix A into the singular value decomposition $A = U S V^T$ for $M \ge N$. On output the matrix A is replaced by $U$. The diagonal elements of the singular value matrix $S$ are stored in the vector S. The singular values are non-negative and form a non-increasing sequence from $S_1$ to $S_N$. The matrix V contains the elements of $V$ in untransposed form. To form the product $U S V^T$ it is necessary to take the transpose of V. A workspace of length N is required in work.

This routine uses the Golub-Reinsch SVD algorithm.

int gsl_linalg_SV_decomp_mod(gsl_matrix *A, gsl_matrix *X, gsl_matrix *V, gsl_vector *S, gsl_vector *work)

This function computes the SVD using the modified Golub-Reinsch algorithm, which is faster for $M \gg N$. It requires the vector work of length N and the $N$-by-$N$ matrix X as additional working space.

int gsl_linalg_SV_decomp_jacobi(gsl_matrix *A, gsl_matrix *V, gsl_vector *S)

This function computes the SVD of the $M$-by-$N$ matrix A using one-sided Jacobi orthogonalization for $M \ge N$. The Jacobi method can compute singular values to higher relative accuracy than Golub-Reinsch algorithms (see references for details).

int gsl_linalg_SV_solve(const gsl_matrix *U, const gsl_matrix *V, const gsl_vector *S, const gsl_vector *b, gsl_vector *x)

This function solves the system $A x = b$ using the singular value decomposition (U, S, V) of $A$ which must have been computed previously with gsl_linalg_SV_decomp().

Only non-zero singular values are used in computing the solution. The parts of the solution corresponding to singular values of zero are ignored. Other singular values can be edited out by setting them to zero before calling this function.

In the over-determined case where A has more rows than columns the system is solved in the least squares sense, returning the solution x which minimizes $\|A x - b\|_2$.

int gsl_linalg_SV_solve2(const double tol, const gsl_matrix *U, const gsl_matrix *V, const gsl_vector *S, const gsl_vector *b, gsl_vector *x, gsl_vector *work)

This function solves the system $A x = b$ using the singular value decomposition (U, S, V) of $A$ which must have been computed previously with gsl_linalg_SV_decomp().

Singular values which satisfy $\sigma_i \le \mathrm{tol} \times \sigma_1$ are excluded from the solution. Additional workspace of length $N$ must be provided in work.

In the over-determined case where A has more rows than columns the system is solved in the least squares sense, returning the solution x which minimizes $\|A x - b\|_2$.

int gsl_linalg_SV_lssolve(const double lambda, const gsl_matrix *U, const gsl_matrix *V, const gsl_vector *S, const gsl_vector *b, gsl_vector *x, double *rnorm, gsl_vector *work)

This function solves the regularized least squares problem,

$$\min_x \|b - A x\|^2 + \lambda^2 \|x\|^2$$

using the singular value decomposition (U, S, V) of $A$ which must have been computed previously with gsl_linalg_SV_decomp(). The residual norm $\|b - A x\|$ is stored in rnorm on output. Additional workspace of size $M + N$ is required in work.

-

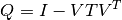

int gsl_linalg_SV_leverage(const gsl_matrix *U, gsl_vector *h)¶

This function computes the statistical leverage values

of a matrix

of a matrix  using its singular value decomposition (

using its singular value decomposition (U,S,V) previously computed withgsl_linalg_SV_decomp(). are the diagonal values of the matrix

are the diagonal values of the matrix

and depend only on the matrix

and depend only on the matrix Uwhich is the input to this function.

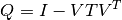

Cholesky Decomposition¶

A symmetric, positive definite square matrix  has a Cholesky

decomposition into a product of a lower triangular matrix

has a Cholesky

decomposition into a product of a lower triangular matrix  and

its transpose

and

its transpose  ,

,

This is sometimes referred to as taking the square-root of a matrix. The

Cholesky decomposition can only be carried out when all the eigenvalues

of the matrix are positive. This decomposition can be used to convert

the linear system  into a pair of triangular systems

(

into a pair of triangular systems

( ,

,  ), which can be solved by forward and

back-substitution.

), which can be solved by forward and

back-substitution.

If the matrix $A$ is near singular, it is sometimes possible to reduce the condition number and recover a more accurate solution vector $x$ by scaling as

$$(S A S)(S^{-1} x) = S b$$

where $S$ is a diagonal matrix whose elements are given by $S_{ii} = 1/\sqrt{A_{ii}}$. This scaling is also known as Jacobi preconditioning. There are routines below to solve both the scaled and unscaled systems.
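A short check, using only the definitions above, that the scaled system keeps the solution of the original one and that Jacobi scaling gives $S A S$ a unit diagonal. It assumes every $A_{ii} > 0$, which holds for a positive definite matrix:

```latex
\begin{aligned}
(S A S)(S^{-1} x) &= S A (S S^{-1}) x = S A x, \\
S A x = S b &\iff A x = b
  \quad \text{(since $S$ is diagonal with nonzero entries, it is invertible)}, \\
x &= S \, (S^{-1} x), \\
(S A S)_{ii} &= S_{ii} A_{ii} S_{ii} = \frac{A_{ii}}{\sqrt{A_{ii}}\,\sqrt{A_{ii}}} = 1 .
\end{aligned}
```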

-

int gsl_linalg_cholesky_decomp1(gsl_matrix *A)

-

int gsl_linalg_complex_cholesky_decomp(gsl_matrix_complex *A)

These functions factorize the symmetric, positive-definite square matrix A into the Cholesky decomposition $A = L L^T$ (or $A = L L^\dagger$ for the complex case). On input, the values from the diagonal and lower-triangular part of the matrix A are used (the upper triangular part is ignored). On output the diagonal and lower triangular part of the input matrix A contain the matrix $L$, while the upper triangular part contains the original matrix. If the matrix is not positive-definite then the decomposition will fail, returning the error code GSL_EDOM. When testing whether a matrix is positive-definite, disable the error handler first to avoid triggering an error. These functions use Level 3 BLAS to compute the Cholesky factorization (Peise and Bientinesi, 2016).

-

int gsl_linalg_cholesky_decomp(gsl_matrix *A)

This function is now deprecated and is provided only for backward compatibility.

-

int gsl_linalg_cholesky_solve(const gsl_matrix *cholesky, const gsl_vector *b, gsl_vector *x)

-

int gsl_linalg_complex_cholesky_solve(const gsl_matrix_complex *cholesky, const gsl_vector_complex *b, gsl_vector_complex *x)

These functions solve the system $A x = b$ using the Cholesky decomposition of $A$ held in the matrix cholesky, which must have been previously computed by gsl_linalg_cholesky_decomp() or gsl_linalg_complex_cholesky_decomp().

-

int gsl_linalg_cholesky_svx(const gsl_matrix *cholesky, gsl_vector *x)

-

int gsl_linalg_complex_cholesky_svx(const gsl_matrix_complex *cholesky, gsl_vector_complex *x)

These functions solve the system $A x = b$ in-place using the Cholesky decomposition of $A$ held in the matrix cholesky, which must have been previously computed by gsl_linalg_cholesky_decomp() or gsl_linalg_complex_cholesky_decomp(). On input x should contain the right-hand side $b$, which is replaced by the solution on output.

-

int gsl_linalg_cholesky_invert(gsl_matrix *cholesky)

-

int gsl_linalg_complex_cholesky_invert(gsl_matrix_complex *cholesky)

These functions compute the inverse of a matrix from its Cholesky decomposition cholesky, which must have been previously computed by gsl_linalg_cholesky_decomp() or gsl_linalg_complex_cholesky_decomp(). On output, the inverse is stored in-place in cholesky.

-

int gsl_linalg_cholesky_decomp2(gsl_matrix *A, gsl_vector *S)

-

int gsl_linalg_complex_cholesky_decomp2(gsl_matrix_complex *A, gsl_vector *S)

These functions calculate a diagonal scaling transformation $S$ for the symmetric, positive-definite square matrix A, and then compute the Cholesky decomposition $S A S = L L^T$.

On input, the values from the diagonal and lower-triangular part of the matrix A are used (the upper triangular part is ignored). On output the diagonal and lower triangular part of the input matrix A contain the matrix $L$.

If the matrix is not positive-definite then the decomposition will fail, returning the error code GSL_EDOM. The diagonal scale factors are stored in S on output. When testing whether a matrix is positive-definite, disable the error handler first to avoid triggering an error.

-

int gsl_linalg_cholesky_solve2(const gsl_matrix *cholesky, const gsl_vector *S, const gsl_vector *b, gsl_vector *x)

-

int gsl_linalg_complex_cholesky_solve2(const gsl_matrix_complex *cholesky, const gsl_vector *S, const gsl_vector_complex *b, gsl_vector_complex *x)

These functions solve the system $(S A S)(S^{-1} x) = S b$ using the Cholesky decomposition of $S A S$ held in the matrix cholesky, which must have been previously computed by gsl_linalg_cholesky_decomp2() or gsl_linalg_complex_cholesky_decomp2().

-

int gsl_linalg_cholesky_svx2(const gsl_matrix *cholesky, const gsl_vector *S, gsl_vector *x)

-

int gsl_linalg_complex_cholesky_svx2(const gsl_matrix_complex *cholesky, const gsl_vector *S, gsl_vector_complex *x)

These functions solve the system $(S A S)(S^{-1} x) = S b$ in-place using the Cholesky decomposition of $S A S$ held in the matrix cholesky, which must have been previously computed by gsl_linalg_cholesky_decomp2() or gsl_linalg_complex_cholesky_decomp2(). On input x should contain the right-hand side $b$, which is replaced by the solution on output.

-

int gsl_linalg_cholesky_scale(const gsl_matrix *A, gsl_vector *S)

-

int gsl_linalg_complex_cholesky_scale(const gsl_matrix_complex *A, gsl_vector *S)

These functions calculate a diagonal scaling transformation $S$ of the symmetric, positive definite matrix A, such that $S A S$ has a condition number within a factor of $N$ of the matrix of smallest possible condition number over all possible diagonal scalings. On output, S contains the scale factors, given by $S_i = 1/\sqrt{A_{ii}}$. For any $A_{ii} \le 0$, the corresponding scale factor $S_i$ is set to $1$.

-

int gsl_linalg_cholesky_scale_apply(gsl_matrix *A, const gsl_vector *S)

-

int gsl_linalg_complex_cholesky_scale_apply(gsl_matrix_complex *A, const gsl_vector *S)

These functions apply the scaling transformation S to the matrix A. On output, A is replaced by $S A S$.

-

int gsl_linalg_cholesky_rcond(const gsl_matrix *cholesky, double *rcond, gsl_vector *work)

This function estimates the reciprocal condition number (using the 1-norm) of the symmetric positive definite matrix $A$, using its Cholesky decomposition provided in cholesky. The reciprocal condition number estimate, defined as $1 / (\|A\|_1 \|A^{-1}\|_1)$, is stored in rcond. Additional workspace is required in work.

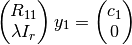

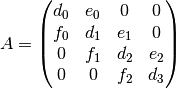

Pivoted Cholesky Decomposition

A symmetric positive semi-definite square matrix $A$ has an alternate Cholesky decomposition into a product of a lower unit triangular matrix $L$, a diagonal matrix $D$ and $L^T$, given by $L D L^T$. For positive definite matrices, this is equivalent to the Cholesky formulation discussed above, with the standard Cholesky lower triangular factor given by $L D^{1/2}$.
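The stated relation between the two factorizations can be checked in one line. It uses only two facts: $D$ is diagonal, and for a positive definite matrix its entries are strictly positive, so $D^{1/2}$ is real and satisfies $(D^{1/2})^T = D^{1/2}$:

```latex
A = L D L^T = (L D^{1/2})(D^{1/2} L^T) = (L D^{1/2})(L D^{1/2})^T,
\qquad D^{1/2} = \operatorname{diag}\bigl(\sqrt{D_1}, \ldots, \sqrt{D_n}\bigr).
```

Since $L$ has a unit diagonal, $L D^{1/2}$ is lower triangular with diagonal entries $\sqrt{D_i} > 0$, as a standard Cholesky factor requires.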

For ill-conditioned matrices, it can help to use a pivoting strategy to prevent the entries of $D$ and $L$ from growing too large, and also ensure $D_1 \ge D_2 \ge \cdots \ge D_n > 0$, where $D_i$ are the diagonal entries of $D$. The final decomposition is given by